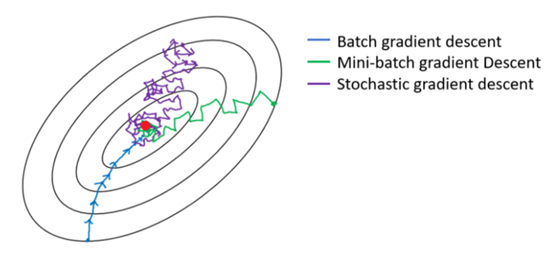

Batch vs Stochastic vs Mini-Batch Gradient Descent

Batch gradient descent (batch size = m, the number of training examples) produces low-noise gradient estimates and takes large, reliable steps toward the minimum. However, every iteration requires a pass over the entire training set, so it can take considerable time per iteration and significant additional memory.

Stochastic gradient descent (batch size = 1) is memory-efficient and well-suited for large datasets. However, it is extremely noisy, because the gradient computed from a single training example may point in a poor direction. As a result, SGD tends to oscillate and wander around the region of the minimum rather than converging directly to it.

Minibatch gradient descent (batch size between 1 and the training-set size m) offers a practical compromise. Although it does not guarantee monotonic progress toward the minimum, averaging the gradient over several examples means it tends to head more consistently in the right direction than SGD does.
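The noise argument can be checked numerically. The sketch below is illustrative only: it assumes a synthetic least-squares problem, and the names `batch_gradient` and `mean_deviation` are hypothetical helpers, not part of any library. It measures how far gradient estimates from random batches stray, on average, from the full-dataset gradient.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic regression data (an assumption made purely for illustration).
X = rng.normal(size=(1000, 3))
y = X @ np.array([2.0, -1.0, 0.5]) + rng.normal(scale=0.5, size=1000)
w = np.zeros(3)  # evaluate all gradients at the same parameter vector


def batch_gradient(idx):
    """Mean squared-error gradient over the examples selected by idx."""
    residual = X[idx] @ w - y[idx]
    return X[idx].T @ residual / len(idx)


full_grad = batch_gradient(np.arange(len(X)))


def mean_deviation(batch_size, trials=500):
    """Average distance between a random batch gradient and the full gradient."""
    devs = []
    for _ in range(trials):
        idx = rng.choice(len(X), size=batch_size, replace=False)
        devs.append(np.linalg.norm(batch_gradient(idx) - full_grad))
    return float(np.mean(devs))


dev_1 = mean_deviation(1)      # SGD-style estimate
dev_32 = mean_deviation(32)    # minibatch estimate
dev_256 = mean_deviation(256)  # larger minibatch estimate
print(dev_1, dev_32, dev_256)
```

Under these assumptions the deviation shrinks as the batch grows, which is the sense in which larger batches give more consistent directions.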

Experimentally, SGD converges faster than batch GD when measured by the number of examples processed, but it consumes more wall-clock time, because computing the gradient one example at a time is computationally less efficient. Minibatch SGD balances convergence speed and computational efficiency: with a suitably chosen batch size, it can even outperform full-batch GD in runtime.
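The three variants differ only in batch size, which a single training loop makes explicit. The following is a minimal sketch, not a reference implementation: it assumes least-squares linear regression on noise-free synthetic data, and the function name `minibatch_gd`, the learning rate, and the epoch count are illustrative choices.

```python
import numpy as np


def minibatch_gd(X, y, batch_size, lr=0.1, epochs=100, seed=0):
    """Fit linear regression weights with minibatch gradient descent."""
    rng = np.random.default_rng(seed)
    m, n = X.shape
    w = np.zeros(n)
    for _ in range(epochs):
        order = rng.permutation(m)  # reshuffle the examples every epoch
        for start in range(0, m, batch_size):
            idx = order[start:start + batch_size]
            residual = X[idx] @ w - y[idx]
            grad = X[idx].T @ residual / len(idx)  # mean gradient over the batch
            w -= lr * grad
    return w


# Noise-free synthetic data (an assumption for illustration).
data_rng = np.random.default_rng(42)
X = data_rng.normal(size=(200, 3))
true_w = np.array([2.0, -1.0, 0.5])
y = X @ true_w

w_batch = minibatch_gd(X, y, batch_size=len(X))  # batch GD: 1 update per epoch
w_sgd = minibatch_gd(X, y, batch_size=1)         # SGD: m updates per epoch
w_mini = minibatch_gd(X, y, batch_size=32)       # minibatch: ceil(m / 32) updates per epoch
print(w_batch, w_sgd, w_mini)
```

With batch size m the inner loop runs once per epoch, while with batch size 1 it runs m times, each time on a single row. That per-example overhead is the reason the text gives for SGD's higher wall-clock cost.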


Tags

Data Science

D2L

Dive into Deep Learning @ D2L
