Learn Before

Comparison of Context Usage in Causal vs. Masked Language Modeling

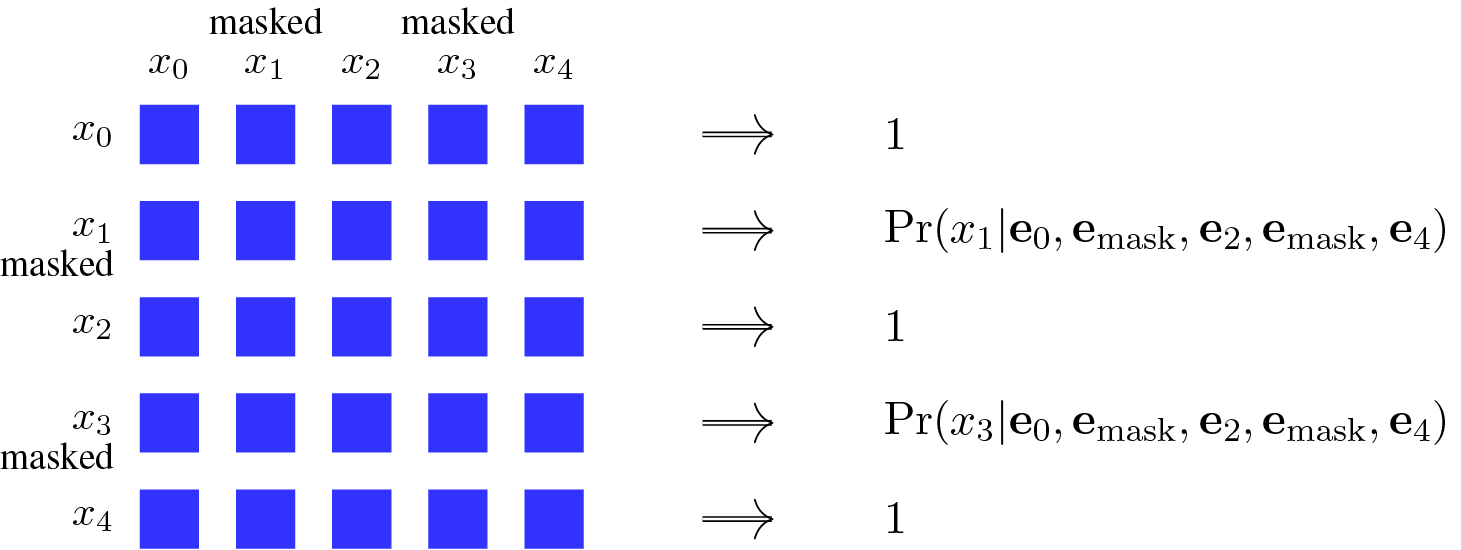

Causal Language Modeling (CLM) and Masked Language Modeling (MLM) differ in how they use context for token prediction. CLM is unidirectional: it uses only the left context (the preceding tokens) to predict the token at a given position. MLM is bidirectional: it uses all unmasked tokens, both to the left and to the right of a masked token, to make its prediction. Because each prediction can draw on both sides of the masked position, an MLM-based model conditions on more of the sentence than a CLM does at the same position.
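The difference can be made concrete with a minimal sketch that lists which tokens each objective may look at when predicting a single position. The function names `clm_context` and `mlm_context`, the example sentence, and the `[MASK]` placeholder string are assumptions chosen for illustration; they are not taken from the course material or from any particular library.

```python
def clm_context(tokens, position):
    """Tokens visible to a causal LM when predicting tokens[position]:
    only the tokens strictly to the left of that position."""
    return tokens[:position]


def mlm_context(tokens, masked_positions):
    """Tokens visible to a masked LM: every position is kept except the
    masked ones, which are replaced by a placeholder (assumed "[MASK]")."""
    masked = set(masked_positions)
    return [
        "[MASK]" if i in masked else tok
        for i, tok in enumerate(tokens)
    ]


# Hypothetical example sentence, split into word-level tokens.
tokens = ["the", "cat", "sat", "on", "the", "mat"]
target = 2  # index of "sat"

print(clm_context(tokens, target))    # ['the', 'cat']
print(mlm_context(tokens, [target]))  # ['the', 'cat', '[MASK]', 'on', 'the', 'mat']
```

In this sketch the causal model sees two tokens of context, while the masked model sees all five remaining tokens, including the three that follow the target.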

Tags

Ch.1 Pre-training - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Related

Output Variation in Sequence Models

Role of the [CLS] Token in Sequence Classification

Masked Language Modeling

Input Formatting with Separator Tokens

Standard Auto-Regressive Probability Factorization using Embeddings

CLS Token as a Start Symbol in Encoder Pre-training

Applying the General Sequence Model Formulation

In the general formulation of a sequence model, o = g(x_0, x_1, ..., x_m; θ), which statement best analyzes the distinct roles of the components?

Match each symbol from the general sequence model formulation, o = g(x_0, x_1, ..., x_m; θ), with its correct description.

Fundamental Issues in Sequence Model Formulation

Neural Network as a Parameterized Function

Learn After

Causal Language Modeling as a Special Case of Masked Language Modeling

Example of Masked Language Modeling Prediction

Consider two different approaches for training a language model to predict a specific word within a sentence.

Approach 1: The model is trained to predict the next word in a sequence, using only the words that have appeared before it.

Approach 2: The model is trained to predict a word that has been intentionally hidden, using all the other visible words in the sentence, both those that come before and after the hidden word.

If both models are tasked with predicting the word 'jumps' in the sentence 'The quick brown fox jumps over the lazy dog', which statement correctly analyzes the contextual information available to each model for this specific task?

Choosing the Right Contextual Approach for Language Tasks

Match each description of a language model's prediction task or characteristic to the type of contextual information it utilizes.