Learn Before

GELU (Gaussian Error Linear Unit) Formula

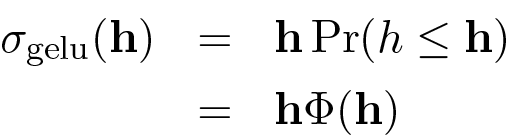

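The Gaussian Error Linear Unit (GELU) function is defined by the formula:

$$\mathrm{GELU}(\mathbf{x}) = \mathbf{x} \odot P(\mathbf{z} \le \mathbf{x}) = \mathbf{x} \odot \Phi(\mathbf{x})$$

Here, $\mathbf{x}$ is the input vector, and $\mathbf{z}$ represents a $d$-dimensional vector with entries drawn from the standard normal distribution $\mathcal{N}(0, 1)$. The informal notation $P(\mathbf{z} \le \mathbf{x})$ refers to a vector where each entry represents the percentile for the corresponding entry of $\mathbf{x}$, which is mathematically equivalent to applying the cumulative distribution function $\Phi$ elementwise.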
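The definition above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function names `standard_normal_cdf` and `gelu` are chosen here for clarity, and the standard normal cumulative distribution function is computed through the error-function identity $\Phi(x) = \tfrac{1}{2}\left(1 + \mathrm{erf}(x / \sqrt{2})\right)$.

```python
import math


def standard_normal_cdf(x: float) -> float:
    """CDF of the standard normal distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def gelu(xs: list[float]) -> list[float]:
    """Apply GELU elementwise: each entry is scaled by its own percentile."""
    return [x * standard_normal_cdf(x) for x in xs]


if __name__ == "__main__":
    # Illustrative inputs (assumed for this sketch, not taken from the source).
    inputs = [-3.0, -0.1, 0.0, 3.0]
    for x, y in zip(inputs, gelu(inputs)):
        print(f"GELU({x:+.1f}) = {y:+.5f}")
```

Under these assumptions, an input of 3 passes through almost unchanged (about 2.996), an input of -3 is squashed to a small negative value (about -0.004), and an input of 0 maps to exactly 0. Unlike a function that zeroes every negative input, GELU keeps a small negative output for slightly negative inputs such as -0.1.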


Tags

Ch.2 Generative Models - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Related

GELU (Gaussian Error Linear Unit) Formula

Applications of GELU in Large Language Models

An activation function is defined by its behavior of weighting an input value by that value's corresponding cumulative probability from a standard normal distribution (mean=0, variance=1). Given two inputs, `x = -3` and `y = 3`, which statement best describes their respective outputs, `f(x)` and `f(y)`?

Hendrycks and Gimpel [2016] on GELU

An activation function is designed to scale its input value by the probability that a randomly drawn value from a standard normal distribution (mean=0, variance=1) is less than or equal to that input. How does this function's output for a small negative input (e.g., -0.1) compare to the output of a function that simply sets all negative inputs to zero?

Activation Function Selection for a Language Model

Diagnosing Training Instability When Changing Normalization and FFN Activations

Choosing an FFN Activation and Normalization Pair Under Deployment Constraints

Explaining a Distribution Shift Caused by Swapping LayerNorm for RMSNorm and GELU for SwiGLU

Root-Cause Analysis of FFN Output Drift After Swapping Normalization and Activation

Selecting a Normalization + FFN Activation Change After Quantization Regressions

Interpreting Activation/Normalization Interactions from FFN Telemetry

You are reviewing a teammate’s proposed Transforme...

In a transformer feed-forward block, your team is ...

You’re debugging a transformer FFN refactor where ...

You’re reviewing a PR that changes a transformer b...

Learn After

A language model is tasked with improving a Chinese-to-English translation. The desired process is for the model to first explicitly identify any errors in an initial translation and then generate a corrected version based on that analysis. Which of the following prompt structures correctly instructs the model to perform this specific two-step task?

The Gaussian Error Linear Unit (GELU) activation function is defined as $\mathrm{GELU}(x) = x\,\Phi(x)$, where $\Phi(x)$ represents the cumulative distribution function (CDF) of the standard normal distribution (a bell curve centered at zero). Based on this formula, what is the output of the function for an input value of ?

The GELU activation function is defined as $\mathrm{GELU}(x) = x\,\Phi(x)$, where $\Phi(x)$ is the cumulative distribution function (CDF) of the standard normal distribution. Based on the properties of the CDF, how does the output of the GELU function behave for a very large negative input (i.e., $x \to -\infty$) versus a very large positive input (i.e., $x \to +\infty$)?