Learn Before

BERT Input Representation: Single and Paired Sentences

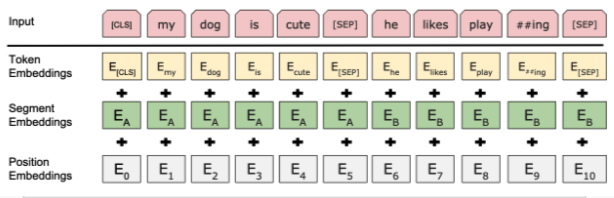

BERT models are designed to represent either a single sentence or a pair of sentences, which lets them handle a wide range of downstream language understanding tasks. When a task involves two sentences at once, both are packed into one combined input sequence, and the two parts are conventionally referred to as sentence A and sentence B.
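The packing described above can be sketched in a few lines of Python. This is a minimal illustration, not BERT's actual preprocessing code: it follows the BERT paper's convention of a leading [CLS] token, a [SEP] token closing each sentence, and segment ids of 0 for sentence A and 1 for sentence B. The function name `build_bert_input` is hypothetical, and plain whitespace splitting is an assumption that stands in for BERT's real WordPiece tokenizer.

```python
from typing import List, Optional, Tuple

CLS, SEP = "[CLS]", "[SEP]"


def build_bert_input(sent_a: str, sent_b: Optional[str] = None) -> Tuple[List[str], List[int]]:
    """Pack one sentence or a sentence pair into a single BERT-style sequence.

    Hypothetical helper for illustration. Assumption: whitespace splitting
    stands in for BERT's WordPiece tokenizer.
    Returns (tokens, segment_ids).
    """
    tokens_a = sent_a.split()
    # Single-sentence layout: [CLS] A [SEP]
    tokens = [CLS] + tokens_a + [SEP]
    # [CLS], sentence A and its closing [SEP] all belong to segment 0
    segment_ids = [0] * len(tokens)

    if sent_b is not None:
        tokens_b = sent_b.split()
        # Pair layout: [CLS] A [SEP] B [SEP]
        tokens += tokens_b + [SEP]
        # Sentence B and the final [SEP] belong to segment 1
        segment_ids += [1] * (len(tokens_b) + 1)

    return tokens, segment_ids


if __name__ == "__main__":
    pair_tokens, pair_segments = build_bert_input("the cat sat", "it was tired")
    print(pair_tokens)    # ['[CLS]', 'the', 'cat', 'sat', '[SEP]', 'it', 'was', 'tired', '[SEP]']
    print(pair_segments)  # [0, 0, 0, 0, 0, 1, 1, 1, 1]

    single_tokens, single_segments = build_bert_input("hello world")
    print(single_tokens)    # ['[CLS]', 'hello', 'world', '[SEP]']
    print(single_segments)  # [0, 0, 0, 0]
```

The same function covers both input modes: omitting the second sentence yields the single-sentence layout, so one code path serves single-text and paired-text tasks alike.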


References

BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding

Reference of Foundations of Large Language Models Course


Tags

What is BERT?

Data Science

Ch.1 Pre-training - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Learn After

Next Sentence Prediction (NSP)

A language representation model is designed with the flexibility to process either a single piece of text or a pair of texts as its input, allowing it to be adapted for a wide variety of tasks. Which of the following tasks would most directly benefit from the model's ability to process a pair of texts?

BERT Input Format for Sentence Pairs

Choosing Input Formats for Language Tasks

A language model is being used for four different tasks. Three of these tasks are best addressed by providing the model with a pair of texts to analyze their relationship. One task, however, only requires a single text input. Which task is the outlier that would be handled using a single text input?

Evaluating Language Model Design