Learn Before

Model Parameter Optimization via Loss Minimization

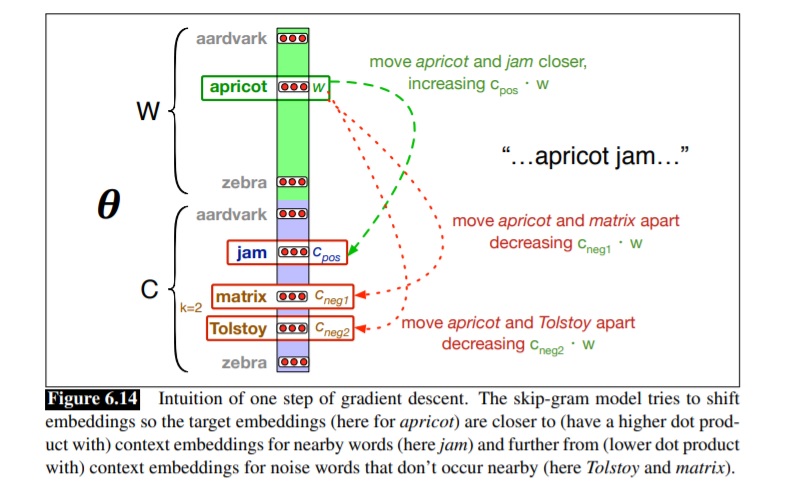

A standard way to train a deep neural network is to optimize its parameters by minimizing a loss function, which quantifies the model's error on the training data. An optimization algorithm such as stochastic gradient descent does this iteratively: at each step it adjusts the parameters in the direction that reduces the loss, as indicated by the gradient of the loss with respect to those parameters.
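To make the procedure concrete, here is a minimal sketch in plain Python. It assumes a toy model with two parameters (`w`, `b`), a squared-error loss, and a small synthetic dataset generated from y = 2x + 1. The names `mean_loss` and `sgd_fit`, the learning rate, and the epoch count are all illustrative choices rather than anything prescribed by the text. A real deep network would have many more parameters and would compute gradients by automatic differentiation instead of by hand.

```python
import random


def mean_loss(data, w, b):
    """Average squared-error loss of the model y = w*x + b over the dataset."""
    return sum(0.5 * (w * x + b - y) ** 2 for x, y in data) / len(data)


def sgd_fit(data, lr=0.05, epochs=200, seed=0):
    """Minimize the loss with stochastic gradient descent (one example per step)."""
    rng = random.Random(seed)   # fixed seed so the run is repeatable
    data = list(data)           # copy, so shuffling does not touch the caller's list
    w, b = 0.0, 0.0             # initial parameter values (arbitrary choice)
    history = []                # mean loss recorded after each epoch
    for _ in range(epochs):
        rng.shuffle(data)       # visit the examples in a random order
        for x, y in data:
            err = (w * x + b) - y   # prediction error on this single example
            # Gradient of 0.5 * err**2 is err*x w.r.t. w and err w.r.t. b;
            # step each parameter against its gradient.
            w -= lr * err * x
            b -= lr * err
        history.append(mean_loss(data, w, b))
    return w, b, history


# Toy, noise-free training data drawn from y = 2x + 1 (an assumption for illustration).
data = [(x, 2.0 * x + 1.0) for x in [-1.0, -0.5, 0.0, 0.5, 1.0]]
w, b, history = sgd_fit(data)
print(f"w={w:.3f}, b={b:.3f}, first-epoch loss={history[0]:.4f}, final loss={history[-1]:.2e}")
```

In this sketch the recorded loss shrinks over the epochs as `w` and `b` approach the values used to generate the data, which is the behavior loss minimization is meant to produce.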

References

Speech and Language Processing (3rd ed. draft)

Reference of Foundations of Large Language Models Course

Tags

Data Science

Ch.1 Pre-training - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Learn After

General Objective for Parameter Optimization via Loss Minimization

BERT Training Process

Diagnosing a Model Training Issue

A neural network is trained by repeatedly showing it examples from a dataset. Arrange the following core steps of a single training iteration into the correct logical sequence.

During the training of a neural network, an optimization algorithm iteratively adjusts the model's parameters. If the value of the loss function is consistently decreasing over many iterations, what is the most direct interpretation of this trend?

Standard Optimization Objective for Transformer Language Models