Learn Before

Masked Language Modeling

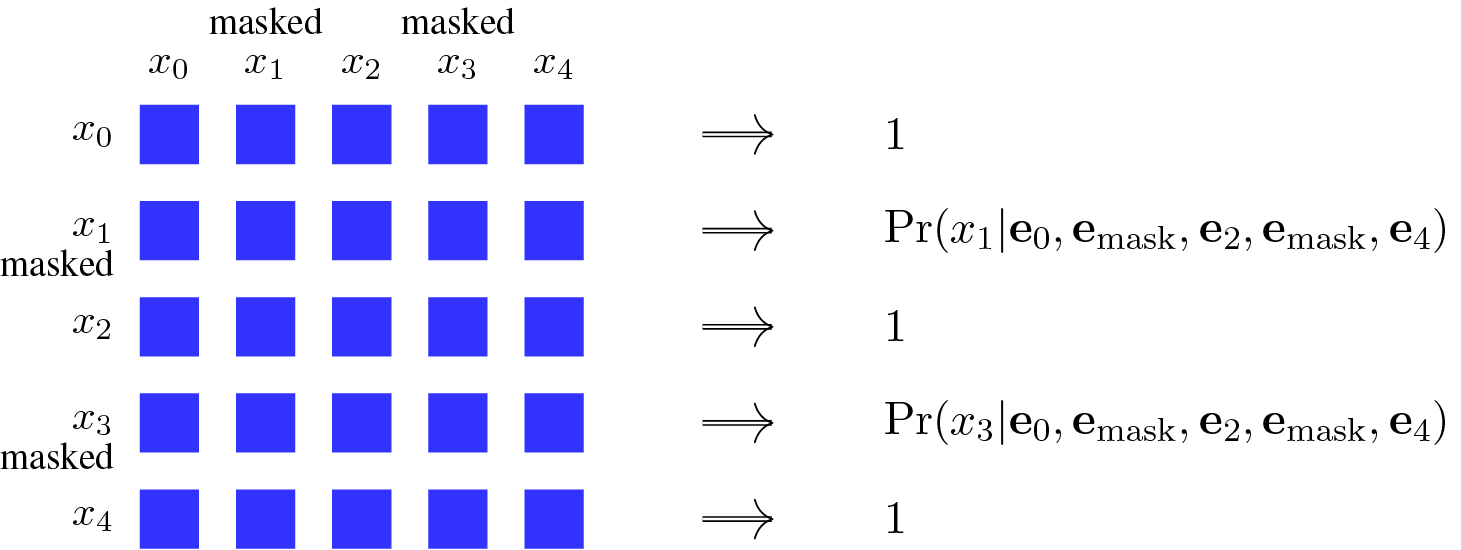

Masked Language Modeling (MLM) is a widely used pre-training objective for encoder models and the foundation of models like BERT. It creates a prediction task by masking some of the tokens in an input sequence. The model is then trained to predict the original tokens at the masked positions, using the surrounding unmasked tokens as context. Because that context lies on both the left and the right of each masked position, the model is pushed to learn bidirectional representations of language.
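The masking step described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not BERT's actual procedure: the function name `mask_tokens`, the `[MASK]` string, the whitespace tokenization, and the masking probability are all hypothetical choices made for the example, and each selected token is simply replaced by the mask symbol.

```python
import random

MASK = "[MASK]"  # assumed mask symbol, chosen for illustration


def mask_tokens(tokens, mask_prob=0.15, seed=None):
    """Hide a random subset of tokens.

    Returns (masked_tokens, targets), where targets maps each masked
    position to the original token the model would be trained to predict.
    """
    rng = random.Random(seed)
    masked = list(tokens)
    targets = {}
    for i, tok in enumerate(tokens):
        # Each position is selected independently with probability mask_prob.
        if rng.random() < mask_prob:
            masked[i] = MASK
            targets[i] = tok
    return masked, targets


# Usage: the model would see `masked` as input and be scored only on
# the positions listed in `targets`.
tokens = "the scientist examined the sample under the microscope".split()
masked, targets = mask_tokens(tokens, mask_prob=0.3, seed=0)
print(masked)
print(targets)
```

Every unmasked token, whether it sits before or after a masked position, stays visible in the input, which is what lets the model condition on both sides when predicting the hidden token.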

References

Reference of Foundations of Large Language Models Course

Tags

Ch.1 Pre-training - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Related

Output Variation in Sequence Models

Role of the [CLS] Token in Sequence Classification

Masked Language Modeling

Input Formatting with Separator Tokens

Standard Auto-Regressive Probability Factorization using Embeddings

CLS Token as a Start Symbol in Encoder Pre-training

Comparison of Context Usage in Causal vs. Masked Language Modeling

Applying the General Sequence Model Formulation

In the general formulation of a sequence model, o = g(x_0, x_1, ..., x_m; θ), which statement best analyzes the distinct roles of the components?

Match each symbol from the general sequence model formulation, o = g(x_0, x_1, ..., x_m; θ), with its correct description.

Fundamental Issues in Sequence Model Formulation

Neural Network as a Parameterized Function

Learn After

Example of a Two-Sentence Input for BERT

BERT's Masked Language Model Pre-training Process

A language model is trained on a large corpus of text. During this training, it is frequently presented with sentences where a single word has been hidden, such as: 'The scientist carefully examined the sample under the [HIDDEN]'. The model's sole objective is to predict the original, hidden word. What is the most significant advantage of this training objective for the model's understanding of language?

Bidirectional Context in Language Modeling

Analysis of a Language Model Training Objective

Selecting a Pre-training Objective Mix for a Corporate LLM

Diagnosing Pre-training Objective Mismatch from Product Failures

Choosing a Pre-training Objective Under Data Constraints and Deployment Needs

Selecting a Pre-training Objective for a Regulated Enterprise Assistant

Root-Cause Analysis of Pre-training Objective Leakage and Coherence Failures

Pre-training Objective Choice for a Multi-Modal Enterprise Writing Assistant

Your team is pre-training an internal LLM for a co...

Your team is building an internal model that must ...

Your team is pre-training a text model for an inte...

Your team is pre-training an internal LLM to suppo...

Transitioning from Masked Language Modeling to Downstream Tasks

Embedding of the MASK Symbol

Generalization of Masked Language Modeling to Autoregressive Modeling

Example of Simulating Standard Language Modeling via Masking