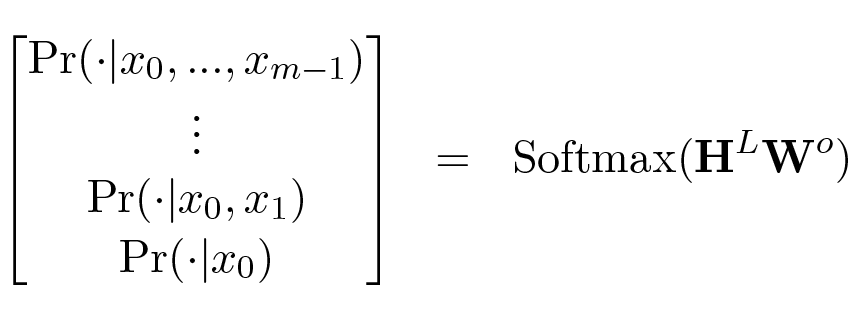

Output Probability Calculation in Transformer Language Models

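In a Transformer language model built from stacked blocks, the probability distribution over the next token is produced by a Softmax layer applied to the output of the final block. The final block's output, $\mathbf{H}$, is first multiplied by a parameter weight matrix, $\mathbf{W}$, which maps each hidden state to one raw score per vocabulary entry. The Softmax function then normalizes each row of scores, giving $\mathrm{Softmax}(\mathbf{H}\mathbf{W})$. The result is a sequence of probability distributions over the vocabulary, one per position, where the distribution at position $i$ represents the conditional probability of the next token given the preceding sequence: $\Pr(x_{i+1} \mid x_0, \ldots, x_i)$.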
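The calculation above can be illustrated with a minimal sketch in plain Python. It is not taken from the course material: the helper names `softmax` and `output_distributions`, the toy sizes `m`, `d`, and `V` (sequence length, hidden dimension, vocabulary size), and the random values standing in for the final block's output and the learned weight matrix are all assumptions made for illustration.

```python
import math
import random

def softmax(scores):
    """Turn a list of raw scores (logits) into probabilities that sum to 1."""
    peak = max(scores)  # subtracting the max avoids overflow; the result is unchanged
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def output_distributions(H, W):
    """Compute Softmax(H W) one row (position) at a time.

    H: m rows, one per sequence position, each holding d hidden values.
    W: d rows, each holding V values (one column per vocabulary entry).
    Returns m probability distributions, each of length V.
    """
    d, V = len(W), len(W[0])
    dists = []
    for h in H:
        # Matrix-vector product: one logit per vocabulary entry.
        logits = [sum(h[k] * W[k][j] for k in range(d)) for j in range(V)]
        dists.append(softmax(logits))
    return dists

# Toy sizes and random values, chosen only for illustration.
random.seed(0)
m, d, V = 3, 4, 5
H = [[random.gauss(0, 1) for _ in range(d)] for _ in range(m)]  # stand-in for the final block's output
W = [[random.gauss(0, 1) for _ in range(V)] for _ in range(d)]  # stand-in for the learned weight matrix

probs = output_distributions(H, W)
for i, p in enumerate(probs):
    print(f"position {i}: {[round(x, 3) for x in p]} (sum = {sum(p):.3f})")
```

Each row of `probs` is a distribution over the `V` vocabulary entries, so a weight matrix of shape `d × V` is what lets a hidden state of size `d` be scored against every token. A real model would use a tensor library and trained weights rather than nested lists and random numbers.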

References

Reference of Foundations of Large Language Models Course

Tags

Ch.2 Generative Models - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Related

Training Decoder-Only Language Models with Cross-Entropy Loss

Global Nature of Standard Transformer LLMs

Processing Flow of Autoregressive Generation in a Decoder-Only Transformer

Initial Input Representation for Transformer Layers

Greedy Decoding in Language Models

Structure of a Transformer Block

A generative language model is designed to produce text by predicting the next token based solely on the sequence of tokens that came before it. If you were to adapt a standard Transformer decoder block for this specific auto-regressive task, which of its sub-layers would you remove, and why is this modification functionally necessary?

A language model is constructed using a stack of modified Transformer decoder blocks. Each block contains a self-attention sub-layer and a feed-forward network sub-layer, but lacks the sub-layer that would process information from a separate, secondary input sequence. This model is capable of performing a machine translation task, such as translating a German sentence into English, without any further architectural changes.

Function of Self-Attention in Auto-regressive Generation

Neural Network-Based Next-Token Probability Distribution

Probability Distribution Formula for an Encoder-Softmax Language Model

Next-Token Probability Calculation in Autoregressive Decoders

A neural network produces a final matrix of hidden state vectors, H, with dimensions [sequence_length × hidden_dimension]. To generate a probability distribution over a vocabulary of size V for each position in the sequence, a parameterized Softmax layer is used, which computes Softmax(H ⋅ W). What is the primary role and required shape of the weight matrix W in this operation?

Debugging a Parameterized Softmax Layer

A parameterized Softmax layer is used to convert a sequence of hidden state vectors into a sequence of probability distributions over a vocabulary. Arrange the following steps of this process into the correct chronological order.

A language model is tasked with predicting the next word for the sequence 'The cat sat on the'. After processing this input, the model's final linear layer produces a vector with 50,257 raw numerical scores, one for each word in its vocabulary. Which statement best characterizes this vector of raw scores, just before any final normalization function (like Softmax) is applied?

A language model has produced a vector of raw, unnormalized scores for all possible next words in its vocabulary. If a data scientist adds a constant value of 10 to every single score in this vector, the final probability assigned to each word will change.

Interpreting Model Output Scores

A language model based on a standard multi-layer architecture is given an input sequence of 15 words. The model's vocabulary consists of 30,000 unique words. After processing the input through all its layers, what is the nature of the final output generated by the model's terminal probability-calculating layer for this sequence?

Analyzing Transformer Model Output

Analyzing a Language Model's Output Layer

BERT's Core Architecture

Trade-offs of Model Depth

An AI team is developing solutions for two distinct tasks: Task A, which involves classifying short customer reviews as positive or negative, and Task B, which requires generating concise summaries of long, complex legal documents. They have two available models: Model X with 6 stacked processing layers and Model Y with 24 stacked processing layers. Based on the relationship between model depth and capability, which of the following strategies is most appropriate?

Analyzing the Impact of Increasing Model Layers

Learn After

Diagnosing a Language Model's Output Layer

A decoder-only language model has an internal hidden dimension of 768 and a vocabulary of 30,000 unique tokens. After processing an input sequence, the model's final layer of hidden states is multiplied by a weight matrix to produce logits, which are then passed to a final activation function. What must be the dimensions of this weight matrix and what is its primary role in this process?

From Hidden State to Probability Distribution