Pre-Norm Architecture in Transformers

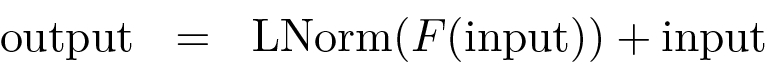

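In Transformer-based systems, the pre-norm architecture is a sub-layer configuration in which layer normalization is applied to the input of each sub-layer, inside the residual branch, before the sub-layer's core function (self-attention or the feed-forward network). The residual connection then adds the function's output to the original, unnormalized input: output = input + Function(Normalize(input)). Because this arrangement leaves a clean identity path through the residual connections, it stabilizes the training of deep networks far more reliably than the post-norm arrangement, and it serves as the structural basis for most modern large language models.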
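The difference between the two placements can be sketched in a few lines of NumPy. This is a minimal illustration, assuming a ReLU feed-forward network stands in for the sub-layer's core function; the helper names (`layer_norm`, `feed_forward`, `pre_norm_sublayer`, `post_norm_sublayer`) and the tensor shapes are hypothetical and not taken from the course material. The last two lines show the identity-path property: when the sub-layer contributes nothing, a pre-norm sub-layer passes its input through unchanged, while a post-norm sub-layer still renormalizes it.

```python
import numpy as np

def layer_norm(x, gamma, beta, eps=1e-5):
    """Normalize each row (token) to zero mean and unit variance, then scale and shift."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return gamma * (x - mean) / np.sqrt(var + eps) + beta

def feed_forward(x, w1, b1, w2, b2):
    """Hypothetical position-wise FFN with ReLU, standing in for the sub-layer function."""
    return np.maximum(0.0, x @ w1 + b1) @ w2 + b2

def pre_norm_sublayer(x, f, gamma, beta):
    """Pre-norm: output = x + F(LN(x)); the residual path carries x unnormalized."""
    return x + f(layer_norm(x, gamma, beta))

def post_norm_sublayer(x, f, gamma, beta):
    """Post-norm, for contrast: output = LN(x + F(x)); the sum is renormalized."""
    return layer_norm(x + f(x), gamma, beta)

rng = np.random.default_rng(0)
seq_len, d_model, d_ff = 4, 8, 32          # illustrative sizes only
x = rng.normal(size=(seq_len, d_model))    # one row per token
w1 = rng.normal(scale=0.1, size=(d_model, d_ff))
b1 = np.zeros(d_ff)
w2 = rng.normal(scale=0.1, size=(d_ff, d_model))
b2 = np.zeros(d_model)
gamma, beta = np.ones(d_model), np.zeros(d_model)

def ffn(h):
    return feed_forward(h, w1, b1, w2, b2)

def zero_f(h):
    # A sub-layer that contributes nothing, used to expose the residual path.
    return np.zeros_like(h)

y_pre = pre_norm_sublayer(x, ffn, gamma, beta)
y_post = post_norm_sublayer(x, ffn, gamma, beta)
print(y_pre.shape, y_post.shape)  # (4, 8) (4, 8)

print(np.allclose(pre_norm_sublayer(x, zero_f, gamma, beta), x))   # True: identity path preserved
print(np.allclose(post_norm_sublayer(x, zero_f, gamma, beta), x))  # False: output is LN(x), not x
```

Both variants keep the (sequence length, model dimension) shape, so they differ only in where normalization sits. Because pre-norm never normalizes the residual stream itself, many pre-norm models (GPT-2, for example) apply one final layer normalization after the last block.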

Tags

Ch.2 Generative Models - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

D2L

Dive into Deep Learning @ D2L

Related

Self-attention layers' first approach

Transformers in contextual generation and summarization

Huggingface Model Summary

A Survey of Transformers (Lin et al., 2021)

Model Usage of Transformers

Attention in vanilla Transformers

Transformer Variants (X-formers)

The Pre-training and Fine-tuning Paradigm

Architectural Categories of Pre-trained Transformers

Computational Cost of Self-Attention in Transformers

Quadratic Complexity's Impact on Transformer Inference Speed

Pre-Norm Architecture in Transformers

Critique of the Transformer Architecture's Core Limitation

A research team is building a model to summarize extremely long scientific papers. They are comparing two distinct architectural approaches:

- Approach 1: Processes the input text sequentially, token by token, updating an internal state that is passed from one step to the next.

- Approach 2: Processes all input tokens simultaneously, using a mechanism that directly relates every token to every other token in the input to determine context.

Which of the following statements best analyzes the primary trade-off between these two approaches for this specific task?

Architectural Design Choice for Machine Translation

Enablers of Universal Language Capabilities

Model Depth in Transformers

Generalization of the Language Modeling Concept

Transformer Block Sub-Layers

Standard Optimization Objective for Transformer Language Models

Scalability in Vision Transformers

Transformer Architecture Overview

Patch Embedding in Vision Transformers

Decoder-Only Transformer Architecture

Parti

Text-to-Image Model

Post-Norm Architecture in Transformers

Learn After

Generalized Formula for Pre-Norm Architecture

A single sub-layer within a deep neural network processes an input matrix. To improve training stability, a specific architectural pattern is used in which a normalization operation is applied to the sub-layer's input before its main function, and the function's output is then added to the original, unnormalized input via a residual connection. Arrange the following operations in the correct sequence to reflect this design.

An engineer is training a very deep sequence-processing model and observes that the gradients are becoming unstable, causing the training to fail. The current architecture of each sub-layer in the model computes its output using the formula: output = Normalize(input + Function(input)). Which of the following modifications to the sub-layer's computational flow is most likely to resolve the instability issue by ensuring a cleaner information flow through the residual connections?

Architectural Analysis for Training Stability

You’re debugging a Transformer block in an interna...

You are reviewing a teammate’s implementation of a...

You’re implementing a single Transformer block in ...

Design a Transformer Block Spec for a New Internal LLM Library (Shapes + Norm Placement)

Diagnosing a Transformer Block Refactor: Attention/FFN Shapes and Norm Placement

Choosing Pre-Norm vs Post-Norm for a Deep Transformer: Stability, Shapes, and Sub-layer Semantics

Root-Cause Analysis of Training Instability After a “Minor” Transformer Block Change

Production Bug Triage: Transformer Block Norm Placement vs Attention/FFN Interface Contracts

Post-Norm vs Pre-Norm Migration: Verifying Tensor Shapes and Correct Sub-layer Wiring

Incident Review: Silent Performance Regression After “Optimization” of a Transformer Block

Core Function in Transformer Sub-layers

Prevalence of Pre-Norm Architecture in LLMs

Vision Transformer Encoder Block