Learn Before

Rotary Positional Embeddings

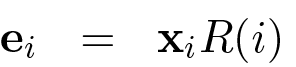

Like sinusoidal embeddings, rotary positional embeddings (RoPE) use fixed, hard-coded values to represent positions. Instead of adding a positional vector to each token embedding, however, RoPE encodes position by rotating the token embedding in a complex vector space, with the rotation angle determined by the token's position. Positional information is therefore integrated multiplicatively, in contrast to the additive approach used by sinusoidal embeddings.
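The rotation described above can be illustrated with a minimal sketch. It is not taken from the course material: the function names `rope_rotate` and `dot`, the pairing of adjacent dimensions into complex numbers, and the frequency base of 10000 are assumptions that follow a common convention. Each pair of dimensions is treated as one complex number and multiplied by a unit complex number whose angle grows with the position.

```python
import cmath

def rope_rotate(x, position, base=10000.0):
    """Rotate an even-length embedding x by position-dependent angles.

    Assumption: dimensions (0, 1), (2, 3), ... are paired, and pair i
    uses the angle position * base ** (-i / d), a common convention.
    """
    d = len(x)
    if d % 2 != 0:
        raise ValueError("embedding dimension must be even")
    out = []
    for i in range(0, d, 2):
        theta = position * base ** (-i / d)
        # Multiplying by exp(i * theta) rotates the pair by theta radians.
        z = complex(x[i], x[i + 1]) * cmath.exp(1j * theta)
        out.extend([z.real, z.imag])
    return out

def dot(a, b):
    """Plain dot product of two equal-length sequences."""
    return sum(u * v for u, v in zip(a, b))

# Hypothetical query and key vectors, chosen only for illustration.
q = [1.0, 2.0, 3.0, 4.0]
k = [0.5, -1.0, 2.0, 0.0]

# Positions (3, 1) and (7, 5) share the same offset of 2.
score_a = dot(rope_rotate(q, 3), rope_rotate(k, 1))
score_b = dot(rope_rotate(q, 7), rope_rotate(k, 5))
```

Under these assumptions, `score_a` and `score_b` agree up to floating-point error, because the dot product of two rotated vectors depends only on the difference between their positions. The rotation also leaves each vector's length unchanged, which is one way the multiplicative scheme differs from adding a positional vector.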

Tags

Ch.2 Generative Models - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Learn After

Comparison of Rotary and Sinusoidal Embeddings

Conceptual Illustration of RoPE's Rotational Mechanism

Example of RoPE Capturing Relative Positional Information

Application of RoPE to d-dimensional Embeddings

Application of RoPE to Token Embeddings

RoPE as a Linear Combination of Periodic Functions

Consider two distinct methods for encoding a token's position within a sequence. Method A calculates a unique positional vector and adds it to the token's embedding. Method B applies a rotational transformation to the token's embedding, with the angle of rotation determined by the token's position. Based on these descriptions, which statement best analyzes a fundamental difference in how these two methods integrate positional context?

Positional Information in Vector Transformations

Analyzing Relative Positional Information

Selecting a Positional Strategy for a Long-Context Retrofit

Diagnosing Long-Context Failures Across Positional Schemes

Choosing and Justifying a Positional Retrofit Under Long-Context and Latency Constraints

Long-Context Retrofit Decision: RoPE Base Scaling vs ALiBi vs T5 Relative Bias

Post-Retrofit Regression: Separating Positional-Method Effects from Scaling Choices

Root-Cause Analysis of Long-Context Degradation After a Positional-Encoding Retrofit

You are reviewing a proposal to extend a productio...

You’re reviewing three proposed positional mechani...

Your team is extending a pretrained Transformer fr...

You’re debugging a long-context retrofit of a pret...

Advantage of Rotary over Sinusoidal Embeddings for Long Sequences

Formula for Multiplicative Positional Embeddings

Angle Preservation in Rotary Embeddings