Learn Before

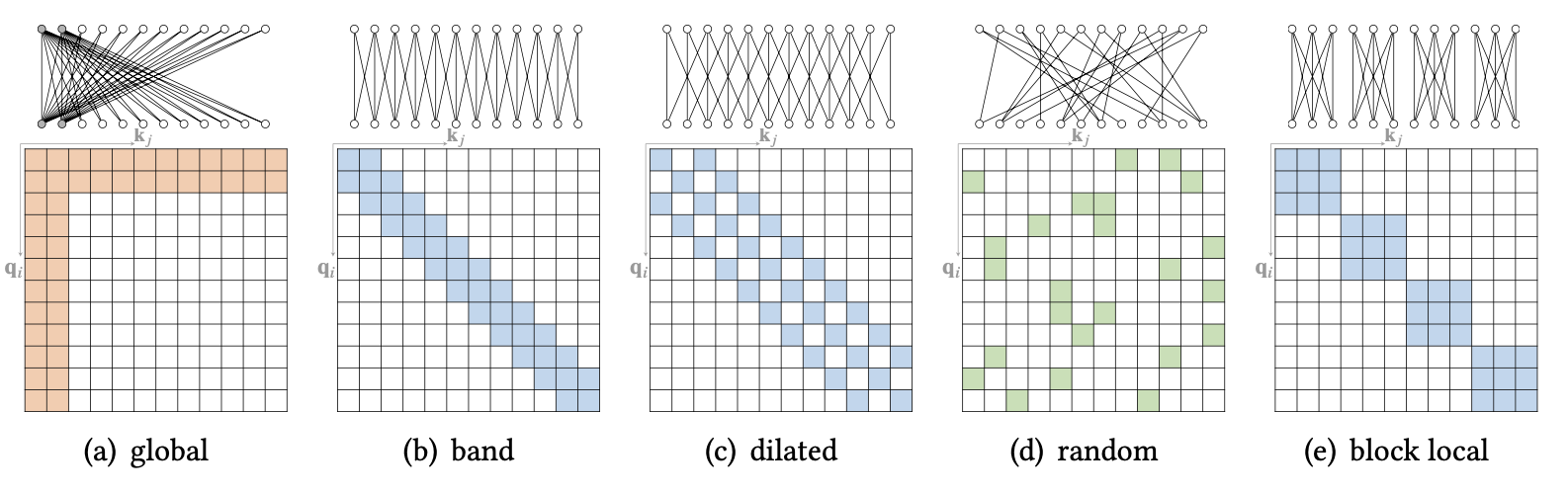

Atomic Sparse Attention Example Diagram

Tags

Data Science

Related

Atomic Sparse Attention Example Diagram

Compound Sparse Attention

Extended Sparse Attention

An engineer designs a sparse attention mechanism where, for any given token at position i, the model is only allowed to attend to the tokens within a fixed-size window around it (e.g., from position i-k to i+k). This rule is applied uniformly across the entire sequence, irrespective of the specific words involved. Which statement best analyzes the core principle of this design?

Analysis of a Sparse Attention Strategy

In a position-based sparse attention mechanism, the set of tokens that a given token attends to is dynamically adjusted during processing based on the semantic similarity of the surrounding tokens.
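
The fixed-window rule from the engineer scenario above (a token at position i may attend only to positions i-k through i+k) can be sketched as a boolean mask. This is a minimal illustration, not a reference implementation: the function name `window_mask` and the parameters `seq_len` and `k` are hypothetical, chosen only for this example. Note that the mask is computed from positions alone, so it is the same for every input sequence of a given length.

```python
from typing import List


def window_mask(seq_len: int, k: int) -> List[List[bool]]:
    """Return a mask where mask[i][j] is True if token i may attend to token j.

    Hypothetical helper for illustration: the rule depends only on the
    distance between positions i and j, never on the tokens themselves.
    """
    return [[abs(i - j) <= k for j in range(seq_len)] for i in range(seq_len)]


# Print the pattern for a 6-token sequence with a window of 1 on each side.
mask = window_mask(seq_len=6, k=1)
for row in mask:
    print("".join("x" if allowed else "." for allowed in row))
```

Each printed row corresponds to one query position, and an `x` marks a position it is allowed to attend to. Rows near the start and end of the sequence have fewer allowed positions because the window is clipped at the sequence boundaries.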