Attention with Prior

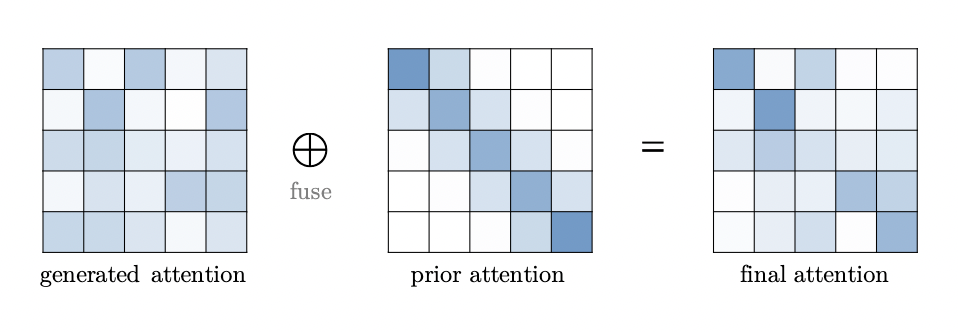

Prior attention is an attention distribution that comes from a source other than the inputs. It contrasts with the usual distribution generated from the inputs themselves (e.g., softmax(QK^T) in the vanilla Transformer). Attention with prior fuses these two attention distributions. The fusion can be done by computing a weighted sum of the scores for the prior and the generated attention before applying softmax.
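Below is a minimal sketch of the fusion step. It assumes scaled dot-product scores (QK^T/√d) for the generated part and an arbitrary score matrix `prior_scores` for the prior. The fused weights are then softmax(w_gen · QK^T/√d + w_prior · prior_scores). The function name and the fusion weights `w_gen` and `w_prior` are hypothetical. The description above does not say how the weights are chosen, so here they are plain constants.

```python
import math


def softmax(row):
    # Subtract the row maximum for numerical stability before exponentiating.
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention_with_prior(Q, K, V, prior_scores, w_gen=1.0, w_prior=1.0):
    """Fuse input-generated scores with prior scores before softmax (sketch).

    Q: n_q query vectors, K: n_k key vectors, V: n_k value vectors (lists of floats).
    prior_scores: n_q x n_k score matrix from a source other than Q and K
        (hypothetical input, e.g. a hand-designed locality bias).
    w_gen, w_prior: hypothetical fusion weights for the two score matrices.
    Returns (outputs, weights), where each weights row sums to 1.
    """
    d = len(Q[0])
    outputs, weights = [], []
    for q, prior_row in zip(Q, prior_scores):
        # Scores generated from the inputs: scaled dot product q . k / sqrt(d).
        generated = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in K]
        # Weighted sum of generated and prior scores, computed BEFORE softmax.
        fused = [w_gen * g + w_prior * p for g, p in zip(generated, prior_row)]
        row = softmax(fused)
        weights.append(row)
        # Output is the attention-weighted sum of the value vectors.
        outputs.append([sum(w * v[j] for w, v in zip(row, V)) for j in range(len(V[0]))])
    return outputs, weights


# Example: a locality prior that penalizes attending to distant positions.
Q = K = V = [[1.0, 0.0], [0.0, 1.0]]
prior = [[-abs(i - j) for j in range(2)] for i in range(2)]
out, w = attention_with_prior(Q, K, V, prior)
```

Setting `w_prior=0` recovers plain scaled dot-product attention. Setting `w_gen=0` uses the prior alone. The weights therefore control whether the prior supplements or replaces the input-generated scores.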

Tags

Data Science

Related

Sparse Attention

Query Prototyping and Memory Compression

Low Rank Self-Attention

Attention with Prior

Improved Multi-Head Attention Mechanism

Linear Attention

A research team is working to reduce the computational cost of the attention mechanism for processing extremely long documents. Their proposed solution involves modifying the attention calculation so that each query token only computes attention scores with a small, fixed subset of key tokens (e.g., neighboring tokens and a few globally important tokens) instead of all tokens in the sequence. Which category of attention improvement best describes this approach?
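The pattern described in this question is a local window plus a few globally important tokens. It can be sketched as a per-query list of the key positions that query is allowed to score. This is a minimal illustration only. The function name `sparse_key_indices` and the `window` and `global_tokens` parameters are hypothetical. Letting global tokens attend to every position is an assumption borrowed from common local-plus-global designs.

```python
def sparse_key_indices(n, window, global_tokens):
    """For each query position, list the key positions it is allowed to score (sketch).

    n: sequence length.
    window: hypothetical half-width of the local neighborhood around each query.
    global_tokens: hypothetical indices of "globally important" tokens; every query
        attends to them, and (as an assumption) they attend to every position.
    """
    g = set(global_tokens)
    keys_per_query = []
    for i in range(n):
        if i in g:
            # A global query scores all keys.
            keys = list(range(n))
        else:
            # Local neighbors within the window, clipped to the sequence bounds,
            # plus every global token.
            local = set(range(max(0, i - window), min(n, i + window + 1)))
            keys = sorted(local | g)
        keys_per_query.append(keys)
    return keys_per_query


pattern = sparse_key_indices(n=8, window=1, global_tokens=[0])
scored = sum(len(keys) for keys in pattern)  # 34 pairs instead of 8 * 8 = 64
```

With a fixed window and a fixed number of global tokens, the number of scored pairs grows linearly in n, whereas full attention scores n² pairs. An efficient implementation would compute scores only for these index lists rather than building a dense n × n matrix.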

Match each attention improvement strategy with its core operational principle.

Optimizing Transformer Attention for Long Sequences

Evaluating Attention Optimization Strategies for Specific Applications