Back Propagation Example

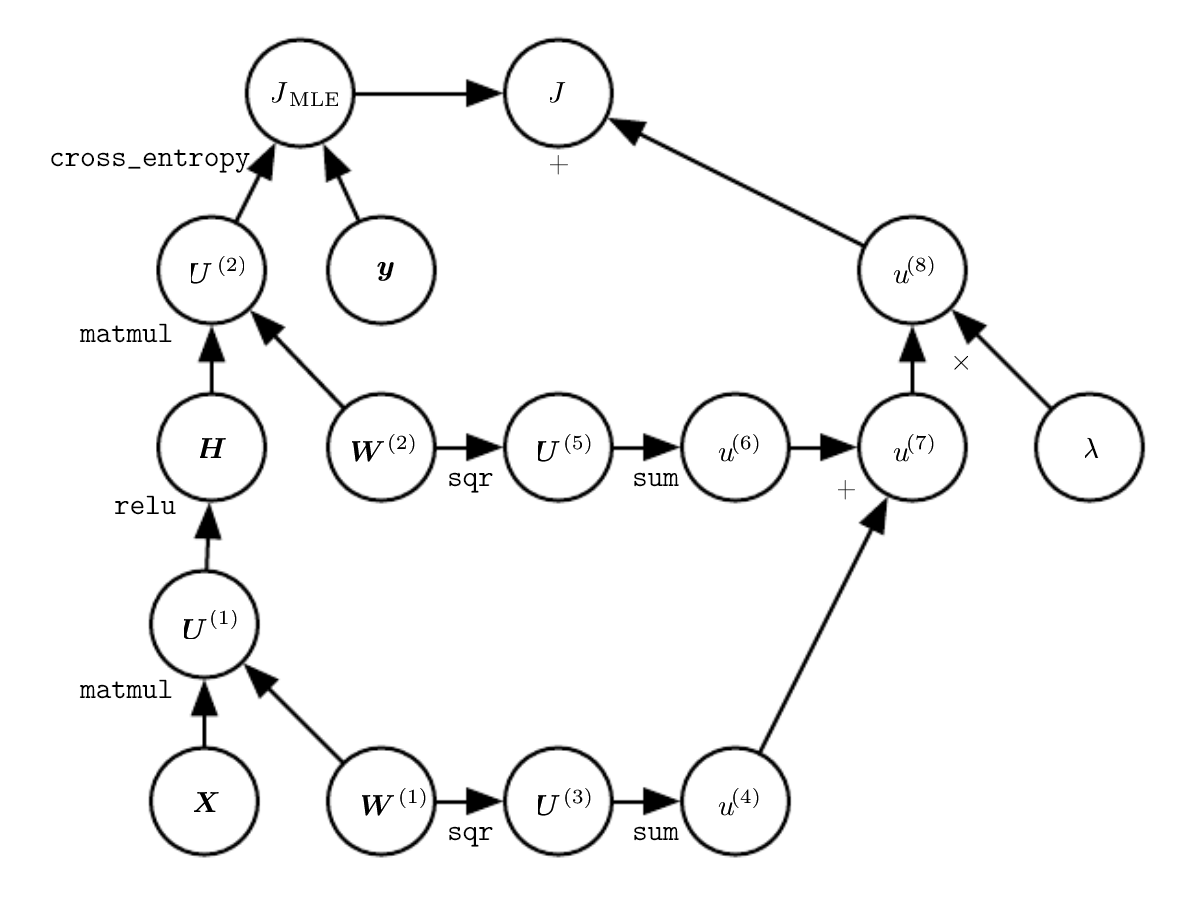

The input to a 2-layer MLP (multilayer perceptron) is given as a minibatch design matrix $\boldsymbol{X}$, and the output (the target labels) is given as $\boldsymbol{y}$.

Thus, there are 2 parameter matrices, $\boldsymbol{W}^{(1)}$ and $\boldsymbol{W}^{(2)}$, for layers 1 and 2 respectively (biases are omitted for simplicity).

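Layer 1 also applies a relu activation, so the hidden output of layer 1 is

$$\boldsymbol{H} = \max\{0, \boldsymbol{U}^{(1)}\}, \qquad \boldsymbol{U}^{(1)} = \boldsymbol{X}\boldsymbol{W}^{(1)},$$

where the max is taken elementwise. Layer 2 then produces the unnormalized log probabilities over the classes, $\boldsymbol{U}^{(2)} = \boldsymbol{H}\boldsymbol{W}^{(2)}$.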
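The forward pass can be sketched in a few lines of NumPy. This is a minimal illustration, not the author's code: NumPy, the helper name `forward`, and the array sizes are assumptions chosen only to make the shapes concrete.

```python
import numpy as np

def forward(X, W1, W2):
    """Forward pass of the 2-layer MLP (no biases). Hypothetical helper."""
    U1 = X @ W1              # pre-activation of layer 1: U1 = X W1
    H = np.maximum(0.0, U1)  # relu, elementwise: H = max{0, U1}
    U2 = H @ W2              # unnormalized log probabilities: U2 = H W2
    return U1, H, U2

# Illustrative sizes (assumed): 4 examples, 3 features, 5 hidden units, 2 classes
rng = np.random.default_rng(0)
X = rng.normal(size=(4, 3))
W1 = rng.normal(size=(3, 5))
W2 = rng.normal(size=(5, 2))
U1, H, U2 = forward(X, W1, W2)
print(H.shape, U2.shape)  # (4, 5) (4, 2)
```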

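The total cost function is given by the cross-entropy cost $J_{\text{MLE}}$ (between the labels $\boldsymbol{y}$ and the distribution defined by the unnormalized log probabilities) plus a weight decay regularization term with coefficient $\lambda$:

$$J = J_{\text{MLE}} + \lambda\left(\sum_{i,j}\left(W^{(1)}_{i,j}\right)^2 + \sum_{i,j}\left(W^{(2)}_{i,j}\right)^2\right)$$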
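A sketch of this cost in NumPy, under two assumptions not stated in the note: the labels are integer class indices, and $J_{\text{MLE}}$ is the softmax cross-entropy averaged over the minibatch (a sum would work equally well). The function names are hypothetical.

```python
import numpy as np

def softmax_cross_entropy(U2, y):
    """Mean cross-entropy between integer labels y and softmax(U2).

    Assumption: J_MLE is averaged over the minibatch.
    """
    shifted = U2 - U2.max(axis=1, keepdims=True)  # subtract row max for stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    return -log_probs[np.arange(len(y)), y].mean()

def total_cost(X, y, W1, W2, lam):
    """J = J_MLE + lam * (sum of W1^2 + sum of W2^2). Hypothetical helper."""
    H = np.maximum(0.0, X @ W1)
    U2 = H @ W2
    weight_decay = lam * (np.sum(W1 ** 2) + np.sum(W2 ** 2))
    return softmax_cross_entropy(U2, y) + weight_decay

rng = np.random.default_rng(0)
X = rng.normal(size=(4, 3))
y = np.array([0, 1, 1, 0])
# With all-zero weights both classes get probability 1/2, so J = log 2.
print(total_cost(X, y, np.zeros((3, 5)), np.zeros((5, 2)), lam=0.1))  # ≈ 0.693
```

Subtracting the row maximum before exponentiating leaves the softmax unchanged but avoids overflow for large logits.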

This model and cost produce the computational graph shown in the image at the top of this note.

The goal is to compute $\nabla_{\boldsymbol{W}^{(1)}} J$ and $\nabla_{\boldsymbol{W}^{(2)}} J$.

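There are two paths leading backward from $J$ to the weights. Back-propagation through the weight decay term is simple: it always contributes $2\lambda\boldsymbol{W}^{(i)}$ to the gradient on $\boldsymbol{W}^{(i)}$. The path through the cross-entropy cost is more involved.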
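The weight decay contribution $2\lambda\boldsymbol{W}$ can be checked numerically with central differences. This is an illustrative sketch; the helper names and the value of `lam` are assumptions.

```python
import numpy as np

def weight_decay(W, lam):
    """lam * sum of squared entries of W (one term of the total cost)."""
    return lam * np.sum(W ** 2)

def numerical_grad(f, W, eps=1e-6):
    """Central-difference estimate of df/dW, perturbing one entry at a time."""
    grad = np.zeros_like(W)
    for idx in np.ndindex(W.shape):
        old = W[idx]
        W[idx] = old + eps
        f_plus = f(W)
        W[idx] = old - eps
        f_minus = f(W)
        W[idx] = old  # restore the original entry
        grad[idx] = (f_plus - f_minus) / (2 * eps)
    return grad

rng = np.random.default_rng(1)
W = rng.normal(size=(3, 4))
lam = 0.01  # assumed regularization coefficient
analytic = 2 * lam * W
numeric = numerical_grad(lambda M: weight_decay(M, lam), W)
print(np.allclose(analytic, numeric))  # True
```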

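Let $\boldsymbol{G}$ be the gradient on the unnormalized log probabilities $\boldsymbol{U}^{(2)} = \boldsymbol{H}\boldsymbol{W}^{(2)}$ provided by the cross-entropy operation, where $\boldsymbol{H}$ is the relu output of layer 1. Back-propagation then follows two branches from $\boldsymbol{U}^{(2)}$: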

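Gradient 1: $\boldsymbol{H}^\top\boldsymbol{G}$, the contribution to the gradient on $\boldsymbol{W}^{(2)}$ (the back-propagation rule for the second argument of the matrix multiplication $\boldsymbol{H}\boldsymbol{W}^{(2)}$).

Gradient 2: $\nabla_{\boldsymbol{H}} J = \boldsymbol{G}\boldsymbol{W}^{(2)\top}$ (the rule for the first argument of the same matrix multiplication).

Gradient 3: $\boldsymbol{G}' = \nabla_{\boldsymbol{H}} J \odot \mathbb{1}\left[\boldsymbol{U}^{(1)} > 0\right]$, i.e. the relu rule zeroes out the entries of $\nabla_{\boldsymbol{H}} J$ where $\boldsymbol{U}^{(1)} = \boldsymbol{X}\boldsymbol{W}^{(1)}$ is not positive.

Gradient 4: $\boldsymbol{X}^\top\boldsymbol{G}'$, the contribution to the gradient on $\boldsymbol{W}^{(1)}$ (the rule for the second argument of $\boldsymbol{X}\boldsymbol{W}^{(1)}$).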
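The four gradients on the cross-entropy path can be sketched as follows. This assumes, beyond the note, that $J_{\text{MLE}}$ is the mean softmax cross-entropy over $m$ examples with integer labels, in which case $\boldsymbol{G} = (\mathrm{softmax}(\boldsymbol{U}^{(2)}) - \mathrm{onehot}(\boldsymbol{y}))/m$. The function name is hypothetical.

```python
import numpy as np

def softmax(U):
    e = np.exp(U - U.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

def backward_cross_entropy_path(X, y, W1, W2):
    """Cross-entropy branch of back-propagation (weight decay handled separately).

    Assumes J_MLE is the mean softmax cross-entropy, so the gradient on
    the logits U2 is G = (softmax(U2) - onehot(y)) / m.
    """
    m = X.shape[0]
    U1 = X @ W1
    H = np.maximum(0.0, U1)
    U2 = H @ W2

    Y = np.zeros_like(U2)
    Y[np.arange(m), y] = 1.0
    G = (softmax(U2) - Y) / m   # gradient on U2 from the cross-entropy op

    grad1 = H.T @ G             # Gradient 1: contribution to the gradient on W2
    grad2 = G @ W2.T            # Gradient 2: gradient on H
    G_prime = grad2 * (U1 > 0)  # Gradient 3: relu mask zeroes entries where U1 <= 0
    grad4 = X.T @ G_prime       # Gradient 4: contribution to the gradient on W1
    return grad1, grad2, G_prime, grad4

rng = np.random.default_rng(0)
X = rng.normal(size=(4, 3))
y = np.array([0, 1, 1, 0])
W1 = rng.normal(size=(3, 5))
W2 = rng.normal(size=(5, 2))
grad1, grad2, G_prime, grad4 = backward_cross_entropy_path(X, y, W1, W2)
print(grad1.shape, grad4.shape)  # (5, 2) (3, 5)
```

Each gradient has the shape of the quantity it is taken with respect to, which is a quick sanity check on the transposes.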

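Add Gradient 1 ($\boldsymbol{H}^\top\boldsymbol{G}$) and Gradient 4 ($\boldsymbol{X}^\top\boldsymbol{G}'$) to the weight decay gradients of $\boldsymbol{W}^{(2)}$ and $\boldsymbol{W}^{(1)}$ respectively. Summing the weight decay and back-propagated contributions gives the final answers:

$$\nabla_{\boldsymbol{W}^{(2)}} J = \boldsymbol{H}^\top\boldsymbol{G} + 2\lambda\boldsymbol{W}^{(2)}, \qquad \nabla_{\boldsymbol{W}^{(1)}} J = \boldsymbol{X}^\top\boldsymbol{G}' + 2\lambda\boldsymbol{W}^{(1)}$$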
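As a sanity check, the full analytic gradients can be compared against finite differences of the total cost. This is a self-contained sketch under the same assumptions as before (NumPy, integer labels, mean softmax cross-entropy for $J_{\text{MLE}}$); all helper names, sizes, and `lam` are illustrative.

```python
import numpy as np

def forward(X, W1, W2):
    U1 = X @ W1
    H = np.maximum(0.0, U1)
    return U1, H, H @ W2

def cost(X, y, W1, W2, lam):
    """J = mean softmax cross-entropy + lam * (sum W1^2 + sum W2^2)."""
    _, _, U2 = forward(X, W1, W2)
    shifted = U2 - U2.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    j_mle = -log_probs[np.arange(len(y)), y].mean()
    return j_mle + lam * (np.sum(W1 ** 2) + np.sum(W2 ** 2))

def gradients(X, y, W1, W2, lam):
    """Back-propagated cross-entropy terms plus the 2*lam*W weight decay terms."""
    m = X.shape[0]
    U1, H, U2 = forward(X, W1, W2)
    P = np.exp(U2 - U2.max(axis=1, keepdims=True))
    P /= P.sum(axis=1, keepdims=True)
    P[np.arange(m), y] -= 1.0
    G = P / m                        # gradient on U2
    G_prime = (G @ W2.T) * (U1 > 0)  # back through H W2, then through relu
    dW2 = H.T @ G + 2 * lam * W2
    dW1 = X.T @ G_prime + 2 * lam * W1
    return dW1, dW2

def numerical_grad(f, W, eps=1e-6):
    """Central differences; f() reads W, which is perturbed in place."""
    grad = np.zeros_like(W)
    for idx in np.ndindex(W.shape):
        old = W[idx]
        W[idx] = old + eps
        f_plus = f()
        W[idx] = old - eps
        f_minus = f()
        W[idx] = old
        grad[idx] = (f_plus - f_minus) / (2 * eps)
    return grad

rng = np.random.default_rng(0)
X = rng.normal(size=(6, 3))
y = rng.integers(0, 4, size=6)
W1 = rng.normal(size=(3, 5))
W2 = rng.normal(size=(5, 4))
lam = 0.01

dW1, dW2 = gradients(X, y, W1, W2, lam)
num_dW1 = numerical_grad(lambda: cost(X, y, W1, W2, lam), W1)
num_dW2 = numerical_grad(lambda: cost(X, y, W1, W2, lam), W2)
print(np.allclose(dW1, num_dW1, atol=1e-6), np.allclose(dW2, num_dW2, atol=1e-6))  # True True
```

The check is only meaningful away from the relu kink; with random real-valued inputs it is very unlikely that an entry of $\boldsymbol{U}^{(1)}$ lands within `eps` of zero.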


Tags

Data Science