Back-Propagation through Random Operations

- One straightforward way to extend neural networks to implement stochastic transformations of x is to augment the neural network with extra inputs z that are sampled from some simple probability distribution, such as a uniform or Gaussian distribution.

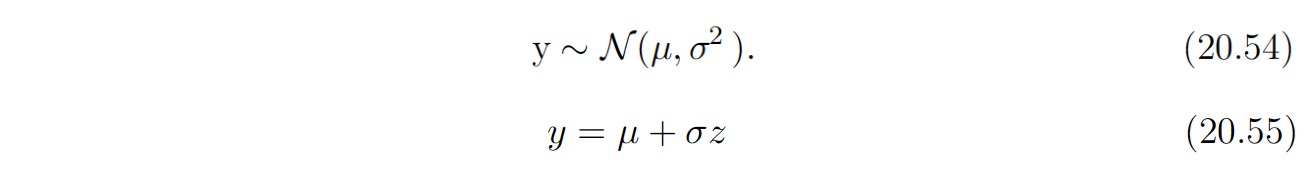

- Consider the operation of drawing samples y from a Gaussian distribution with mean μ and variance σ^2: y ∼ N(μ, σ^2) (equation 20.54).

- We can rewrite the sampling process as transforming an underlying random value z ∼ N(z; 0, 1) to obtain a sample from the desired distribution: y = μ + σz (equation 20.55).

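- We can now back-propagate through the sampling operation by regarding it as a deterministic operation with an extra input z. Because the distribution of z does not depend on μ or σ, the derivatives of y with respect to μ and σ are well defined for each sampled z.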
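
The idea in the bullets above can be sketched in a few lines of plain Python. This is an illustrative sketch, not code from the source: the function names, the toy loss f(y) = y^2, and the values of μ and σ are all assumptions chosen for the example. Treating the sample as y = μ + σz gives dy/dμ = 1 and dy/dσ = z, so the chain rule yields a Monte Carlo estimate of the gradient of E[y^2]. For this toy loss E[y^2] = μ^2 + σ^2, so the estimates can be checked against the analytic values 2μ and 2σ.

```python
import random


def sample_reparameterized(mu, sigma, z):
    """Deterministic map from noise z ~ N(0, 1) to a sample y ~ N(mu, sigma^2)."""
    return mu + sigma * z


def grad_expected_square(mu, sigma, n_samples=100_000, seed=0):
    """Monte Carlo estimate of the gradient of E[y^2] w.r.t. mu and sigma.

    The toy loss f(y) = y^2 is an assumption made for this example only.
    """
    rng = random.Random(seed)
    g_mu = 0.0
    g_sigma = 0.0
    for _ in range(n_samples):
        z = rng.gauss(0.0, 1.0)          # extra random input, independent of mu and sigma
        y = sample_reparameterized(mu, sigma, z)
        dloss_dy = 2.0 * y               # derivative of f(y) = y^2
        g_mu += dloss_dy * 1.0           # chain rule with dy/dmu = 1
        g_sigma += dloss_dy * z          # chain rule with dy/dsigma = z
    return g_mu / n_samples, g_sigma / n_samples


mu, sigma = 1.5, 0.5
g_mu, g_sigma = grad_expected_square(mu, sigma)
print("estimated dE[y^2]/dmu:   ", g_mu, "analytic:", 2 * mu)
print("estimated dE[y^2]/dsigma:", g_sigma, "analytic:", 2 * sigma)
```

In an automatic differentiation framework the same effect would typically be obtained by writing the sample as `mu + sigma * z` inside the computation graph, so that gradients flow to `mu` and `sigma` while `z` is treated as a constant input.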

Tags

Data Science

Related

Backpropagation Through Time (BPTT)

Back-Propagating through Discrete Stochastic Operations

Neural Network Learning Rate

Backward Propagation Formulation

True/False: During forward propagation, the forward function for layer l needs to know which activation function the layer uses (sigmoid, tanh, ReLU, etc.). During back-propagation, the corresponding backward function also needs to know the activation function for layer l, since the gradient depends on it.

Back Propagation Illustrated Example

A neural network is trained to distinguish between images of 'apples' and 'oranges'. During a training iteration, it is shown an image of an apple but predicts 'orange' with a high degree of certainty. This results in a significant error value. What is the primary computational goal of the backpropagation step that immediately follows this prediction?

Token-Level Loss Calculation in a Backward Pass

Consider a simple neural network with one input neuron, one hidden neuron, and one output neuron. The network has a weight w1 connecting the input to the hidden neuron, and a weight w2 connecting the hidden neuron to the output neuron. After a forward pass, an error is calculated based on the network's final output. To update w1 using the backpropagation algorithm, you must calculate the partial derivative of the error with respect to w1. Which of the following components is essential for determining how much of the final error is attributable to the hidden neuron's activity?

Allocating Gradient Memory

Chain Rule for Tensors

Storage of Intermediate Variables in Backpropagation