Bidirectional RNNs

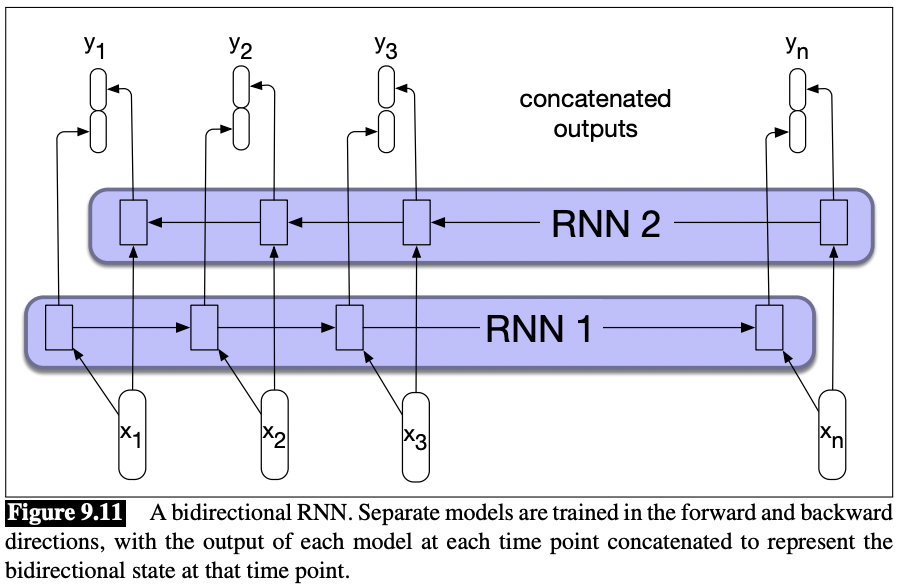

A bidirectional RNN combines two independent RNNs: one processes the input from start to end, the other from end to start. The two representations computed by the networks are then concatenated into a single vector that captures both the left and right contexts of the input at each point in time.
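The idea can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: it assumes a simple tanh (Elman-style) recurrence, and the helper names `rnn_pass`, `init_params`, and `bidirectional_rnn`, along with the toy dimensions, are hypothetical choices made for this example.

```python
import numpy as np

def rnn_pass(x, W, U, b):
    """Run a simple tanh RNN over x of shape (T, d_in); return states of shape (T, d_h)."""
    h = np.zeros(U.shape[0])
    states = []
    for x_t in x:
        h = np.tanh(W @ x_t + U @ h + b)
        states.append(h)
    return np.stack(states)

def init_params(rng, d_in, d_h):
    """Small random weights for one direction (illustrative initialization only)."""
    W = rng.normal(scale=0.1, size=(d_h, d_in))  # input-to-hidden
    U = rng.normal(scale=0.1, size=(d_h, d_h))   # hidden-to-hidden
    b = np.zeros(d_h)
    return W, U, b

def bidirectional_rnn(x, fwd_params, bwd_params):
    """Concatenate forward and backward hidden states at each time step."""
    h_fwd = rnn_pass(x, *fwd_params)
    # Process the reversed sequence, then flip the states back so that
    # row t of h_bwd summarizes inputs t..T-1 (the right context).
    h_bwd = rnn_pass(x[::-1], *bwd_params)[::-1]
    return np.concatenate([h_fwd, h_bwd], axis=-1)

rng = np.random.default_rng(0)
T, d_in, d_h = 5, 3, 4                      # toy sizes, chosen arbitrarily
x = rng.normal(size=(T, d_in))
fwd_params = init_params(rng, d_in, d_h)    # the two RNNs have separate weights
bwd_params = init_params(rng, d_in, d_h)

out = bidirectional_rnn(x, fwd_params, bwd_params)
print(out.shape)  # (5, 8): T time steps, 2 * d_h features per step
```

The first `d_h` features at step `t` depend only on inputs up to `t`, and the last `d_h` features depend only on inputs from `t` onward, which is why the backward states have to be flipped back before concatenation.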

Fig. 9.11 illustrates such a bidirectional network that concatenates the outputs of the forward and backward pass. Other simple ways to combine the forward and backward contexts include element-wise addition or multiplication. The output at each step in time thus captures information to the left and to the right of the current input. In sequence labeling applications, these concatenated outputs can serve as the basis for a local labeling decision.
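The combination options and the local labeling decision mentioned above might look like the following sketch. It is an illustration under stated assumptions: the forward and backward states are random stand-ins rather than the output of trained RNNs, the labeling layer is a plain linear map followed by a softmax, and `combine`, `label_each_step`, and all sizes are hypothetical names and values chosen for this example.

```python
import numpy as np

def combine(h_fwd, h_bwd, mode="concat"):
    """Merge forward and backward states of shape (T, d_h)."""
    if mode == "concat":
        return np.concatenate([h_fwd, h_bwd], axis=-1)  # (T, 2 * d_h)
    if mode == "sum":
        return h_fwd + h_bwd                            # (T, d_h)
    if mode == "mul":
        return h_fwd * h_bwd                            # (T, d_h)
    raise ValueError(f"unknown mode: {mode}")

def label_each_step(h, W_out, b_out):
    """Per-step softmax over tags; returns (predicted tag ids, probabilities)."""
    logits = h @ W_out + b_out
    logits = logits - logits.max(axis=-1, keepdims=True)  # numerical stability
    probs = np.exp(logits)
    probs = probs / probs.sum(axis=-1, keepdims=True)
    return probs.argmax(axis=-1), probs

rng = np.random.default_rng(0)
T, d_h, n_tags = 5, 4, 3              # toy sizes, chosen arbitrarily

# Stand-ins for the states produced by the forward and backward RNNs.
h_fwd = rng.normal(size=(T, d_h))
h_bwd = rng.normal(size=(T, d_h))

h_cat = combine(h_fwd, h_bwd, "concat")
h_sum = combine(h_fwd, h_bwd, "sum")
h_mul = combine(h_fwd, h_bwd, "mul")

# Untrained output layer sized for the concatenated representation.
W_out = rng.normal(scale=0.1, size=(2 * d_h, n_tags))
b_out = np.zeros(n_tags)
tags, probs = label_each_step(h_cat, W_out, b_out)
print(h_cat.shape, h_sum.shape, h_mul.shape, tags.shape)
```

Concatenation doubles the feature size but keeps the two directions separate, while addition and multiplication keep the size at `d_h` and mix the directions, so the output layer has to be sized to match whichever option is used.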

Tags

Data Science
