Concept

Block-sparse Regularization

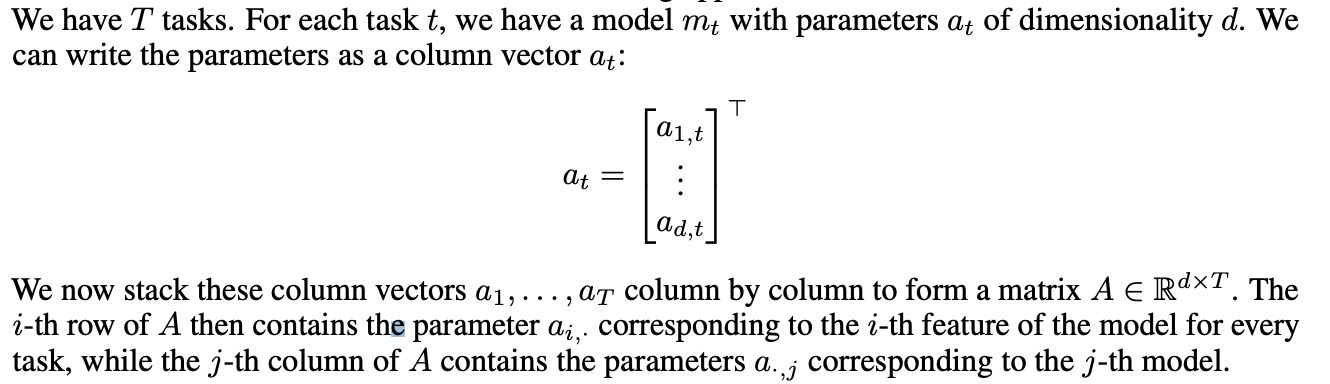

In the single-task setting, the $\ell_1$ norm is computed over the parameter vector $a_t$ of the respective task $t$. For multi-task learning (MTL), we instead compute it over the task parameter matrix $A$, whose columns are the task vectors $a_t$: we first take an $\ell_q$ norm of each row of $A$, then the $\ell_1$ norm (the sum) of the resulting row norms. Depending on what constraint we would like to place on each row, we can use a different $\ell_q$. In general, we refer to these mixed-norm constraints as $\ell_1/\ell_q$ norms. They are also known as block-sparse regularization, as they lead to entire rows of $A$ being set to 0.
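To make the mixed norm concrete, here is a minimal NumPy sketch. It assumes that rows of `A` index features and columns index tasks. The names `mixed_norm` and `prox_l1_l2` are hypothetical, and the toy matrix is made up for illustration. The second function uses row-wise soft-thresholding, the standard proximal step for the case q = 2. It is shown only as one way to see why whole rows become zero, not as the method used by any particular MTL model.

```python
import numpy as np

def mixed_norm(A, q=2):
    """Mixed norm: the lq norm of each row of A, summed over rows (the l1 part)."""
    row_norms = np.linalg.norm(A, ord=q, axis=1)
    return row_norms.sum()

def prox_l1_l2(A, threshold):
    """Row-wise soft-thresholding (assumed illustration for q = 2).

    Each row is shrunk toward zero by `threshold` in l2 norm; rows whose
    norm is at most `threshold` are set to zero as a whole.
    """
    row_norms = np.linalg.norm(A, axis=1, keepdims=True)
    # Guard against division by zero for rows that are already all zeros.
    scale = np.maximum(0.0, 1.0 - threshold / np.maximum(row_norms, 1e-12))
    return scale * A

# Toy parameter matrix: 3 features (rows) x 2 tasks (columns).
A = np.array([[3.0, 4.0],
              [0.1, -0.1],
              [0.0, 2.0]])

penalty_q2 = mixed_norm(A, q=2)         # row norms 5, ~0.141, 2
penalty_qinf = mixed_norm(A, q=np.inf)  # row maxima 4, 0.1, 2
shrunk = prox_l1_l2(A, threshold=0.5)   # the weak second row is zeroed entirely
```

With this toy matrix, the second row has a small norm across both tasks, so the thresholding step removes that feature for every task at once, while the other rows are only shrunk. This is the block-sparse behavior described above.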

Updated 2022-05-22

Tags

Data Science