chrF evaluation method

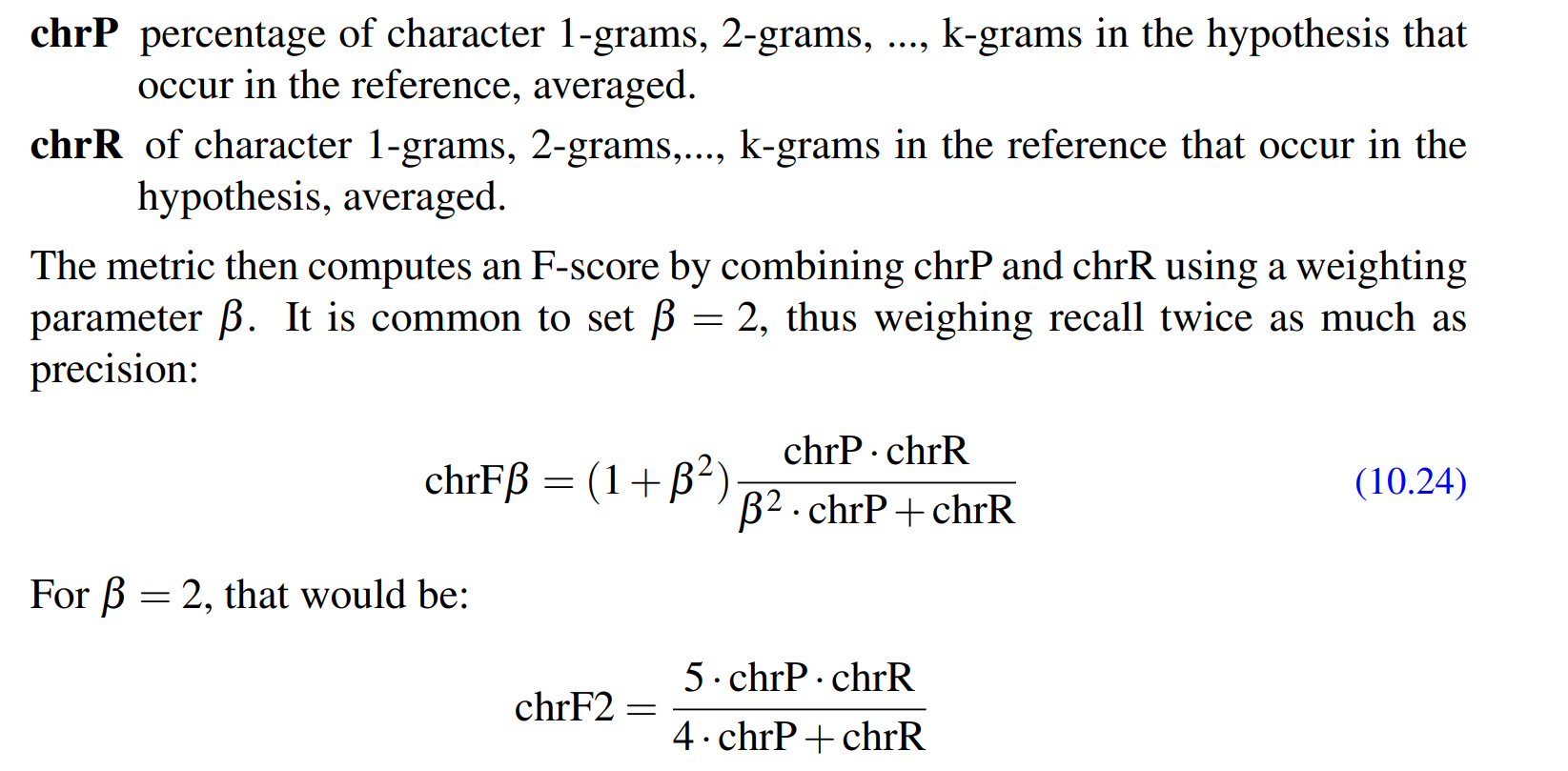

The simplest and most robust metric for MT evaluation is chrF, which stands for character F-score. Consider a test set from a parallel corpus in which each source sentence has both a gold human target translation and a candidate MT translation that we’d like to evaluate. The chrF metric scores each MT target sentence by a function of the number of character n-grams it shares with the human translation (see the image above). Character or word overlap-based metrics like chrF are mainly used to compare two systems, with the goal of answering questions like: did the new algorithm we just invented improve our MT system?
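As a rough illustration of the idea, the sketch below computes a chrF-style score for one candidate sentence against one reference. The function names `char_ngrams` and `chrf` are hypothetical, and several details are assumptions not stated in this note: character n-grams up to length 6, a recall weight of beta = 2, whitespace ignored, and precision and recall averaged over the n-gram orders before being combined into an F-score. Published implementations may differ in these choices.

```python
from collections import Counter


def char_ngrams(text, n):
    """Count the character n-grams of `text`, ignoring whitespace (an assumption)."""
    chars = "".join(text.split())
    return Counter(chars[i:i + n] for i in range(len(chars) - n + 1))


def chrf(hypothesis, reference, max_n=6, beta=2.0):
    """Sketch of a chrF-style score in [0, 1]; max_n and beta are assumed defaults."""
    precisions, recalls = [], []
    for n in range(1, max_n + 1):
        hyp = char_ngrams(hypothesis, n)
        ref = char_ngrams(reference, n)
        if not hyp or not ref:
            continue  # skip n-gram orders longer than either string
        # Clipped overlap: each n-gram counts at most as often as it occurs in both.
        overlap = sum((hyp & ref).values())
        precisions.append(overlap / sum(hyp.values()))
        recalls.append(overlap / sum(ref.values()))
    if not precisions:
        return 0.0
    p = sum(precisions) / len(precisions)
    r = sum(recalls) / len(recalls)
    if p + r == 0:
        return 0.0
    # F-beta: beta > 1 weights recall more heavily than precision.
    return (1 + beta ** 2) * p * r / (beta ** 2 * p + r)
```

To compare two systems in this spirit, one could average `chrf(candidate, gold)` over the test set for each system and check which average is higher.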

Updated 2021-12-05

Tags

Data Science