Comparison Between Two Approaches

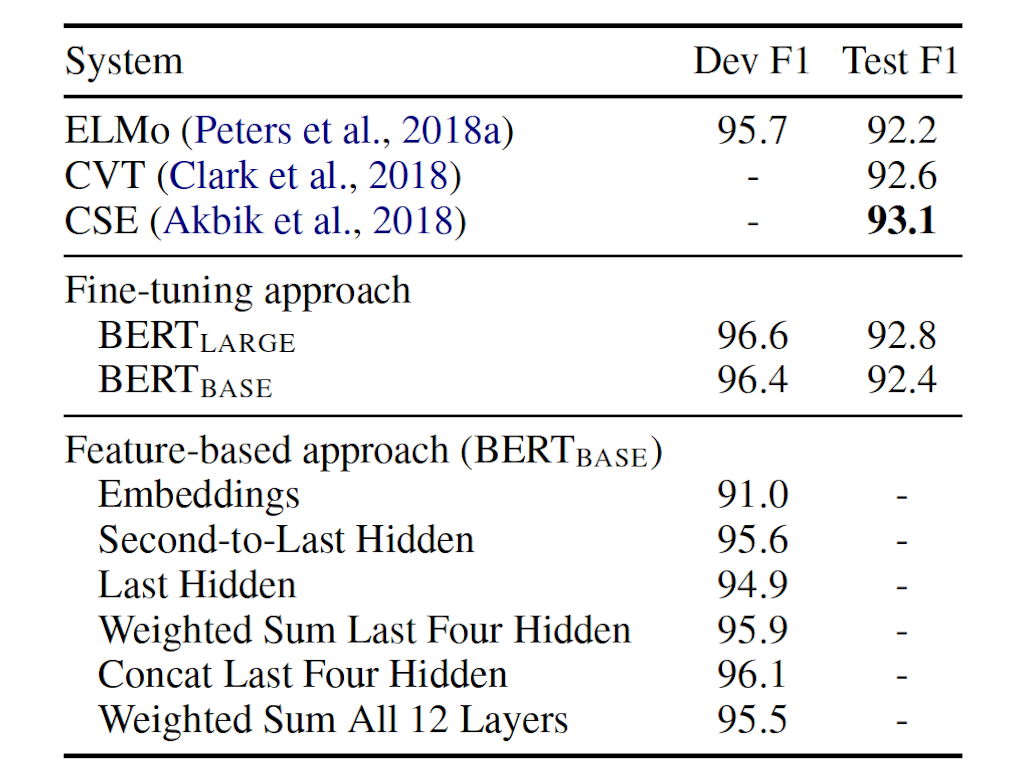

To compare the fine-tuning and feature-based approaches, BERT is applied to the CoNLL-2003 Named Entity Recognition (NER) task. The table shows that the best-performing feature-based method, which concatenates the token representations from the top four hidden layers of the pre-trained Transformer, is only 0.3 F1 behind fine-tuning the entire model. BERT is therefore effective for both fine-tuning and feature-based approaches.
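The feature-extraction step described here, concatenating each token's representations from the top four hidden layers, can be sketched roughly as follows. This is a minimal illustration in plain Python, not the original implementation: the function name `concat_top_layers`, the type aliases, and the toy hidden states are all assumptions made for the example. In practice the per-layer outputs would come from a frozen pre-trained encoder, and the concatenated features would be passed to a separate task-specific tagger.

```python
from typing import List

# Hypothetical type aliases for this sketch:
# one vector per token, one list of token vectors per layer.
TokenVector = List[float]
LayerOutput = List[TokenVector]


def concat_top_layers(
    hidden_states: List[LayerOutput], num_layers: int = 4
) -> List[TokenVector]:
    """Concatenate each token's vectors from the top `num_layers` layers."""
    if not 1 <= num_layers <= len(hidden_states):
        raise ValueError("num_layers must be between 1 and the number of layers")
    top = hidden_states[-num_layers:]  # the last entries are the top layers
    num_tokens = len(top[0])
    features = []
    for t in range(num_tokens):
        vec: TokenVector = []
        for layer in top:
            vec.extend(layer[t])  # append this layer's vector for token t
        features.append(vec)
    return features


# Toy stand-in for frozen encoder outputs: 6 layers, 3 tokens, hidden size 2.
# Each vector is [layer index, token index] so the result is easy to inspect.
toy_hidden_states = [
    [[float(layer), float(token)] for token in range(3)]
    for layer in range(6)
]

features = concat_top_layers(toy_hidden_states)
print(len(features), len(features[0]))  # 3 tokens, 4 * 2 = 8 features each
```

With a hidden size of H, each token ends up with a 4H-dimensional feature vector, while the encoder weights themselves are never updated. That is the key contrast with fine-tuning, where the whole model is trained on the task.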

Updated 2021-08-12

Tags

Data Science