Learn Before

Relation

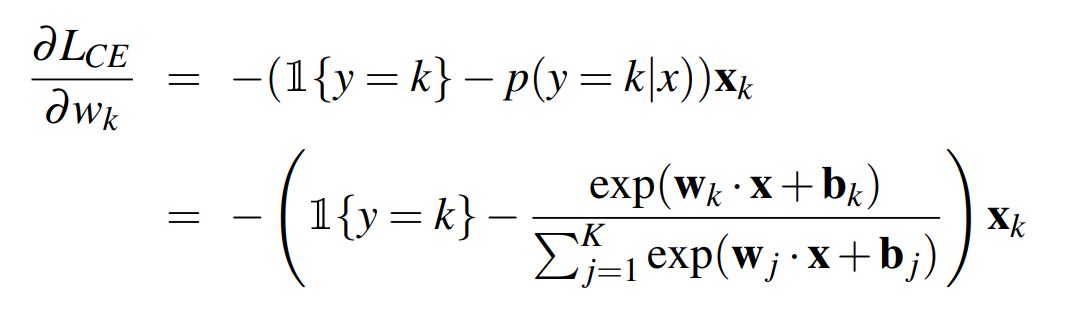

Computing the Gradient

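For a network with one weight layer and sigmoid output (which is exactly logistic regression), we could simply use the derivative of the cross-entropy loss that we used for logistic regression:

$$\frac{\partial L_{CE}(\hat{y}, y)}{\partial w_j} = (\hat{y} - y)\,x_j = \left(\sigma(\mathbf{w}\cdot\mathbf{x} + b) - y\right)x_j$$

Or for a network with one weight layer and softmax output (multinomial logistic regression), we could use the derivative of the softmax loss, shown for a particular weight $w_{k,i}$ and input $x_i$:

$$\frac{\partial L_{CE}(\hat{\mathbf{y}}, \mathbf{y})}{\partial w_{k,i}} = -(y_k - \hat{y}_k)\,x_i = -\left(y_k - \frac{\exp(\mathbf{w}_k\cdot\mathbf{x} + b_k)}{\sum_{j=1}^{K}\exp(\mathbf{w}_j\cdot\mathbf{x} + b_j)}\right)x_i$$

But these derivatives only give correct updates for one weight layer: the last one. In a deeper network the loss is computed only at the output. Yet it depends on the weights in early layers through every layer in between, so their gradients must account for that whole chain of intermediate computations. The solution to computing this gradient is an algorithm called error backpropagation, or backprop.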
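The sketch below is a minimal pure-Python illustration. It assumes a small network with one sigmoid hidden layer and a sigmoid output, trained with binary cross-entropy on a single example. It shows that the output layer's gradient is the same $(\hat{y} - y)$ rule as logistic regression, while the hidden layer needs the error passed back through the chain rule. All function names (`one_layer_grad`, `backprop`, `numeric_grad_W1`, etc.) are hypothetical. The finite-difference check is a common sanity test and is not itself part of backprop.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def bce_loss(y_hat, y):
    # Binary cross-entropy for one example: -(y log y_hat + (1 - y) log(1 - y_hat))
    return -(y * math.log(y_hat) + (1 - y) * math.log(1 - y_hat))

def one_layer_grad(w, b, x, y):
    # Single weight layer + sigmoid output (logistic regression):
    # dL/dw_j = (y_hat - y) * x_j and dL/db = y_hat - y
    y_hat = sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)
    err = y_hat - y
    return [err * xj for xj in x], err

def two_layer_forward(W1, b1, w2, b2, x):
    # Hidden layer h = sigmoid(W1 x + b1); output y_hat = sigmoid(w2 . h + b2)
    h = [sigmoid(sum(wij * xj for wij, xj in zip(row, x)) + bi)
         for row, bi in zip(W1, b1)]
    y_hat = sigmoid(sum(wj * hj for wj, hj in zip(w2, h)) + b2)
    return h, y_hat

def backprop(W1, b1, w2, b2, x, y):
    h, y_hat = two_layer_forward(W1, b1, w2, b2, x)
    # Output layer: same (y_hat - y) rule as logistic regression, with h as the input
    delta_out = y_hat - y
    grad_w2 = [delta_out * hj for hj in h]
    grad_b2 = delta_out
    # Hidden layer: chain rule, passing the error back through w2 and sigmoid'(a) = h(1 - h)
    delta_hidden = [delta_out * w2[i] * h[i] * (1 - h[i]) for i in range(len(h))]
    grad_W1 = [[d * xj for xj in x] for d in delta_hidden]
    grad_b1 = delta_hidden
    return grad_W1, grad_b1, grad_w2, grad_b2

def numeric_grad_W1(W1, b1, w2, b2, x, y, i, j, eps=1e-6):
    # Central finite difference on W1[i][j], used only as a sanity check
    def loss(W):
        _, y_hat = two_layer_forward(W, b1, w2, b2, x)
        return bce_loss(y_hat, y)
    W_plus = [row[:] for row in W1]
    W_minus = [row[:] for row in W1]
    W_plus[i][j] += eps
    W_minus[i][j] -= eps
    return (loss(W_plus) - loss(W_minus)) / (2 * eps)

# Usage: a random 3-input, 4-hidden-unit network on one training example
random.seed(0)
x, y = [0.5, -1.2, 2.0], 1
W1 = [[random.uniform(-1, 1) for _ in x] for _ in range(4)]
b1 = [0.0] * 4
w2 = [random.uniform(-1, 1) for _ in range(4)]
b2 = 0.0
gW1, gb1, gw2, gb2 = backprop(W1, b1, w2, b2, x, y)
print("backprop dL/dW1[0][0]:", gW1[0][0])
print("numeric  dL/dW1[0][0]:", numeric_grad_W1(W1, b1, w2, b2, x, y, 0, 0))
```

The output-layer gradient `grad_w2` is exactly what `one_layer_grad` returns when the hidden activations `h` are treated as the input. This is why the single-layer formula is correct for the last layer only. The first-layer gradients need the extra factors `w2[i] * h[i] * (1 - h[i])` supplied by the chain rule.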

Updated 2021-11-04

Tags

Data Science