Learn Before

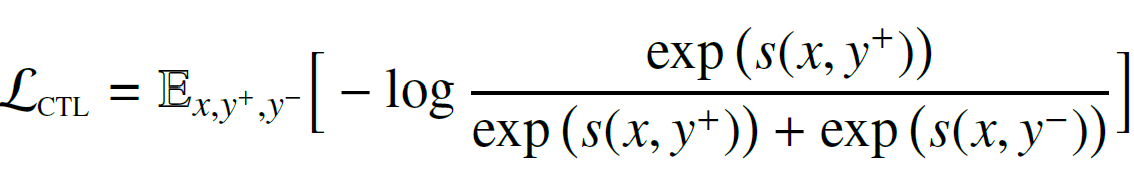

Contrastive Learning (CTL)

Contrastive learning assumes that some observed pairs of text are more semantically similar than randomly sampled text.

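A score function $s(x, y)$ for a text pair $(x, y)$ is learned to minimize the objective function

$$\mathcal{L}_{\text{CTL}} = \mathbb{E}_{x, y^+, y^-}\left[-\log \frac{\exp\big(s(x, y^+)\big)}{\exp\big(s(x, y^+)\big) + \exp\big(s(x, y^-)\big)}\right],$$

where $(x, y^+)$ are a similar pair and $y^-$ is presumably dissimilar to $x$.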
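
The objective described above can be illustrated with a minimal Python sketch. It assumes the common pairwise softmax form of the loss, -log(exp(s_pos) / (exp(s_pos) + exp(s_neg))), and uses a dot product between embedding vectors as the score function. The names `dot_score`, `ctl_loss`, and `mean_ctl_loss`, as well as the toy embeddings, are hypothetical and chosen only for illustration; in practice the score function would be a learned model.

```python
import math


def dot_score(u, v):
    """Hypothetical score function: dot product of two embedding vectors."""
    return sum(a * b for a, b in zip(u, v))


def ctl_loss(s_pos, s_neg):
    """Pairwise contrastive loss for one (similar, dissimilar) score pair.

    Equals -log(exp(s_pos) / (exp(s_pos) + exp(s_neg))), rewritten as
    log(1 + exp(s_neg - s_pos)) so that large scores do not overflow.
    """
    d = s_neg - s_pos
    return max(d, 0.0) + math.log1p(math.exp(-abs(d)))


def mean_ctl_loss(triples):
    """Average the loss over (x, y_pos, y_neg) embedding triples."""
    losses = [
        ctl_loss(dot_score(x, y_pos), dot_score(x, y_neg))
        for x, y_pos, y_neg in triples
    ]
    return sum(losses) / len(losses)


# Toy embeddings, made up for illustration only.
x = [1.0, 0.0]
y_pos = [0.9, 0.1]   # assumed to be semantically similar to x
y_neg = [-0.5, 0.8]  # assumed to be a randomly sampled, dissimilar text

print(mean_ctl_loss([(x, y_pos, y_neg)]))
```

The loss shrinks toward zero as the similar pair scores higher than the dissimilar one, and equals log 2 when the two scores are tied, which is the behavior the objective is meant to encourage.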

Tags

Data Science

Related

Contrastive Learning (CTL)

Extensions of PTMs

Applying and Adapting Pre-trained Models to Downstream Tasks

Unsupervised Pre-training

Supervised Pre-training

Self-Supervised Learning

Comparison of Pre-training Paradigms

Rationale for Categorizing Pre-training Tasks by Objective

Denoising Autoencoding

Comparability of Pre-training Tasks

Generality of Pre-training Tasks and Performance

Applying Pre-trained Models to Downstream Tasks

Identifying a Pre-training Strategy

Breadth of Pre-training Tasks

A research team is developing a new language model and is considering different pre-training approaches. Match each pre-training scenario below with the correct category of learning it represents.

A language model is being trained on a large corpus of text from the internet. The training process involves randomly hiding 15% of the words in each sentence and then tasking the model with predicting the original identity of these hidden words based on the surrounding context. Which category of pre-training task does this scenario best exemplify, and why?

Comparing Pre-training Task Categories

Comparison of Pre-training Tasks