Learn Before

Cost Function

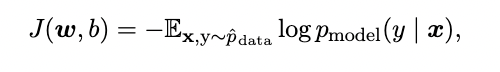

For example, the linear regression algorithm combines a dataset consisting of X and y, the cost function

The cost function typically includes at least one term that causes the learning process to perform statistical estimation. The most common cost function is the negative log-likelihood, so that minimizing the cost function is equivalent to maximum likelihood estimation.
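As a worked illustration of the negative log-likelihood as a cost, consider linear regression under an assumed Gaussian noise model. The symbols here (m examples, weight vector w, fixed variance \sigma^2) are assumptions chosen for this sketch and are not taken from the text above. With p(y \mid x; w) = \mathcal{N}(y;\, w^\top x,\, \sigma^2), the average negative log-likelihood is

$$
J(w) = -\frac{1}{m}\sum_{i=1}^{m} \log p\big(y^{(i)} \mid x^{(i)}; w\big)
     = \frac{1}{2}\log\big(2\pi\sigma^2\big) + \frac{1}{2\sigma^2}\cdot\frac{1}{m}\sum_{i=1}^{m}\big(y^{(i)} - w^\top x^{(i)}\big)^2 .
$$

The first term does not depend on w, so under this assumption minimizing J(w) selects the same w as minimizing the mean squared error.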

In simple terms, the cost function is used to evaluate whether the parameter value W is reasonable.
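A minimal sketch of this idea, assuming a one-parameter linear model `W * x` and mean squared error as the cost. The dataset, the candidate values, and the function name `cost` are hypothetical and chosen only for illustration.

```python
def cost(W, xs, ys):
    """Mean squared error of the prediction W * x over the dataset (assumed cost)."""
    return sum((W * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

# Hypothetical toy data generated by y = 2x
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.0, 4.0, 6.0, 8.0]

# Evaluate several candidate parameter values; a lower cost means a better fit
candidates = [0.0, 1.0, 2.0, 3.0]
costs = {W: cost(W, xs, ys) for W in candidates}
best_W = min(costs, key=costs.get)

for W, c in costs.items():
    print(f"W = {W}: cost = {c}")
print("lowest-cost candidate:", best_W)
```

Among these candidates, the value that reproduces the data exactly has zero cost, and the cost grows as W moves away from it.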

Tags

Data Science

Foundations of Large Language Models Course

Computing Sciences

Learn After

An engineer is training two different models, Model A and Model B, on the exact same dataset to perform a specific task. The training process aims to find model parameters that minimize a cost function, where a lower value indicates a smaller error between the model's outputs and the desired outputs. After one training iteration, the engineer observes the following:

- Cost for Model A: 2.5

- Cost for Model B: 5.0

Based solely on this information, what is the most logical interpretation of the models' current performance?

Calculating Model Error

An engineer is training a predictive model and plots the value of the cost function at the end of each training iteration. The resulting graph shows a curve that starts at a high value and consistently decreases over many iterations, eventually flattening out at a very low, near-zero value. What does this trend most likely indicate about the training process?
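A curve of this shape can be reproduced with a small sketch. It assumes a linear model `w * x + b`, mean squared error as the cost, and plain gradient descent; the data, the learning rate, and the number of iterations are hypothetical values picked for illustration.

```python
def mse(w, b, xs, ys):
    """Mean squared error of the linear model w * x + b (assumed cost)."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

# Hypothetical toy data generated by y = 2x + 1
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]

w, b = 0.0, 0.0        # initial parameters
learning_rate = 0.1    # assumed step size
costs = []             # cost recorded at each iteration

for _ in range(200):
    costs.append(mse(w, b, xs, ys))
    n = len(xs)
    # Gradients of the mean squared error with respect to w and b
    grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

print("first cost:", costs[0])
print("last cost:", costs[-1])
```

On this toy problem the recorded costs start high, fall at every iteration, and flatten near zero as the parameters approach the values that generated the data. Note that this sketch tracks only the training cost; it says nothing about performance on unseen data.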