Data Parallelism

Data parallelism is a widely used and convenient strategy for distributing deep learning training across multiple GPUs. Every GPU maintains a complete replica of the model and performs the same sequence of operations, but each GPU processes a different subset of the training minibatch. After each minibatch, the gradients computed independently on each GPU are aggregated (typically averaged) across all GPUs. Every replica then applies the same update, which keeps the model parameters synchronized. To improve efficiency, computation and communication should overlap: gradients for some parameters can be exchanged while gradients for other parameters are still being computed. Data parallelism enables larger effective minibatch sizes and increases overall training throughput. However, each GPU must hold the full model, so model size is bounded by the memory of a single GPU. Data parallelism alone therefore does not make it possible to train larger models.
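The synchronous training step described above can be simulated in a single process. This is a minimal sketch under illustrative assumptions. The model is a hypothetical 1-D linear model with a mean-squared-error loss. Each `Replica` object stands in for one GPU. `all_reduce_mean` is a hypothetical placeholder for a real all-reduce collective. The step has four stages: shard the minibatch, compute per-shard gradients on full model copies, average them, and apply the identical update everywhere.

```python
# Minimal single-process simulation of synchronous data parallelism.
# Assumptions (illustrative only): a 1-D linear model y_hat = w * x + b with a
# mean-squared-error loss; each Replica object stands in for one GPU, and
# all_reduce_mean is a hypothetical stand-in for a real all-reduce collective.
from dataclasses import dataclass
from typing import List, Tuple

Sample = Tuple[float, float]  # (x, y)
Grad = Tuple[float, float]    # (dL/dw, dL/db)


@dataclass
class Replica:
    """One full copy of the model parameters."""
    w: float = 0.0
    b: float = 0.0

    def grad(self, shard: List[Sample]) -> Grad:
        # Gradient of mean((w*x + b - y)^2) over this worker's shard only.
        n = len(shard)
        gw = sum(2 * (self.w * x + self.b - y) * x for x, y in shard) / n
        gb = sum(2 * (self.w * x + self.b - y) for x, y in shard) / n
        return gw, gb

    def apply(self, g: Grad, lr: float) -> None:
        # Plain gradient-descent step; every replica applies the same g.
        self.w -= lr * g[0]
        self.b -= lr * g[1]


def all_reduce_mean(grads: List[Grad]) -> Grad:
    # Average the per-worker gradients (hypothetical stand-in for all-reduce).
    k = len(grads)
    return sum(g[0] for g in grads) / k, sum(g[1] for g in grads) / k


def split_minibatch(minibatch: List[Sample], num_workers: int) -> List[List[Sample]]:
    # Equal-size contiguous shards; assumes len(minibatch) % num_workers == 0.
    size = len(minibatch) // num_workers
    return [minibatch[i * size:(i + 1) * size] for i in range(num_workers)]


def data_parallel_step(replicas: List[Replica], minibatch: List[Sample], lr: float) -> Grad:
    shards = split_minibatch(minibatch, len(replicas))
    # On real hardware these per-shard gradients are computed concurrently.
    local = [r.grad(s) for r, s in zip(replicas, shards)]
    g = all_reduce_mean(local)
    for r in replicas:
        r.apply(g, lr)  # identical update keeps all replicas in sync
    return g


if __name__ == "__main__":
    data = [(float(x), 3.0 * x + 1.0) for x in range(8)]  # toy minibatch of 8 samples
    replicas = [Replica() for _ in range(4)]              # 4 simulated GPUs, 2 samples each
    for step in range(3):
        g = data_parallel_step(replicas, data, lr=0.01)
        print(step, g, replicas[0])
```

In this sketch every shard has the same size. The mean of the per-shard mean gradients therefore equals the mean gradient over the full minibatch, so each step matches single-GPU training on the whole minibatch. With unequal shards, a size-weighted average would be needed instead.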

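Overlapping communication with computation works because backpropagation produces gradients one layer at a time, starting with the last layer. The reduction for a finished layer can begin while earlier layers are still being differentiated. The sketch below illustrates this under stated assumptions: `time.sleep` stands in for GPU compute and network transfer, and the timings are invented. `fake_all_reduce` is a hypothetical placeholder for a real collective. A single background thread models one communication stream. Real frameworks usually reduce buckets of several parameters rather than one layer at a time; PyTorch's `DistributedDataParallel` is one example.

```python
# Sketch: overlapping gradient all-reduce with the backward pass.
# Assumptions (illustrative only): each layer's gradient is a single float per
# worker, time.sleep stands in for GPU compute and network transfer, and
# fake_all_reduce is a hypothetical placeholder for a real collective. A single
# background thread models one communication stream.
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List, Tuple

COMPUTE_S = 0.02  # assumed time to compute one layer's gradient
COMM_S = 0.02     # assumed time to all-reduce one layer's gradient


def backward_layer(layer: int, num_workers: int) -> List[float]:
    time.sleep(COMPUTE_S)  # stand-in for backward compute (all workers in parallel)
    # Hypothetical per-worker gradients; they differ because each worker saw different data.
    return [float(layer + w) for w in range(num_workers)]


def fake_all_reduce(layer: int, worker_grads: List[float], log: List[int]) -> float:
    time.sleep(COMM_S)  # stand-in for the network transfer
    log.append(layer)   # record the order in which reductions complete
    return sum(worker_grads) / len(worker_grads)


def backward_sequential(num_layers: int, num_workers: int) -> Tuple[Dict[int, float], List[int]]:
    # Compute every gradient first, then communicate: no overlap.
    log: List[int] = []
    grads = {layer: backward_layer(layer, num_workers) for layer in reversed(range(num_layers))}
    reduced = {layer: fake_all_reduce(layer, g, log) for layer, g in grads.items()}
    return reduced, log


def backward_overlapped(num_layers: int, num_workers: int) -> Tuple[Dict[int, float], List[int]]:
    # Launch each layer's reduction as soon as its gradient exists, then keep computing.
    log: List[int] = []
    with ThreadPoolExecutor(max_workers=1) as comm_stream:
        pending = {}
        for layer in reversed(range(num_layers)):  # backprop visits the last layer first
            g = backward_layer(layer, num_workers)
            pending[layer] = comm_stream.submit(fake_all_reduce, layer, g, log)
        reduced = {layer: f.result() for layer, f in pending.items()}
    return reduced, log


if __name__ == "__main__":
    for fn in (backward_sequential, backward_overlapped):
        start = time.perf_counter()
        reduced, order = fn(num_layers=4, num_workers=2)
        print(f"{fn.__name__}: {time.perf_counter() - start:.3f}s, reduction order {order}")
```

Both functions produce the same averaged gradients. With the assumed timings and 4 layers, the sequential version takes about 4 compute intervals plus 4 communication intervals. The overlapped version hides every reduction except the last behind computation, so it should take roughly 5 intervals instead of 8. Actual wall-clock times vary by machine.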
Tags

Ch.2 Generative Models - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

D2L

Dive into Deep Learning @ D2L

A research team is developing a novel language model with several trillion parameters. During the initial training setup, they discover that the model is too large to fit into the memory of a single available accelerator (e.g., a GPU). Which parallelism strategy is specifically designed to address this fundamental constraint?

Match each parallelism strategy with the description that best defines its core mechanism for distributing the training workload.

Diagnosing Training Inefficiency

Network Partitioning

Layerwise Partitioning

Data Parallelism

Learn After

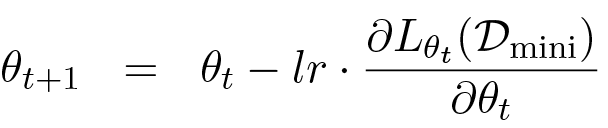

Gradient Descent Update Rule

Set of Distributed Data Batches in Data Parallelism

Ideal Speed-up in Data Parallelism

A team is training a neural network using a technique where a large batch of data is split equally among 8 machines. Each machine has a full, identical copy of the network model. During a training step, each machine processes its portion of the data and calculates a set of proposed parameter updates. Given this setup, what is the most critical subsequent action to ensure the entire system learns effectively from the full batch of data?

Distributed Gradient Calculation

A single training step is performed using a technique where a mini-batch of data is processed in parallel across multiple machines. Each machine holds a complete copy of the model. Arrange the following events in the correct chronological order for one such training step.

A machine learning team is training a large neural network on a massive dataset. To accelerate the process, they employ a strategy where the training data is split across 16 GPUs. Each GPU holds a complete copy of the model and processes its own subset of the data. After each forward and backward pass, the results from all GPUs are combined before updating the model's parameters. The team observes that while using 8 GPUs provided a nearly 8x speed-up compared to a single GPU, scaling to 16 GPUs only resulted in a 10x total speed-up. Based on the principles of the training strategy described, what is the most likely bottleneck causing this diminishing return in performance when scaling from 8 to 16 GPUs?

Evaluating a Training Strategy

Your team must train a 30B-parameter LLM on a sing...

You are on-call for an internal LLM training platf...

Your team is training a 70B-parameter LLM on 8 GPU...

You’re advising an internal platform team that mus...

Designing a Distributed Training Plan Under Memory, Throughput, and Stability Constraints

Postmortem and Redesign of a Distributed LLM Training Run with Divergence and Low GPU Utilization

Diagnosing a Scaling Regression in Hybrid Parallel LLM Training

Stabilizing and Scaling an LLM Training Job Across Two GPU Clusters

Choosing a Distributed Training Configuration After a Hardware Refresh

Selecting a Hybrid Parallelism + Mixed-Precision Strategy for a Memory-Bound LLM Training Run

Minibatch Scaling in Data Parallelism

Batch Normalization in Data Parallelism

Data Parallelism Training Process

Data Synchronization in Multi-GPU Training

Computation-to-Synchronization Ratio and Multi-GPU Scalability