Deep Belief Networks (DBNs)

- DBNs were among the first non-convolutional models to successfully admit training of deep architectures.

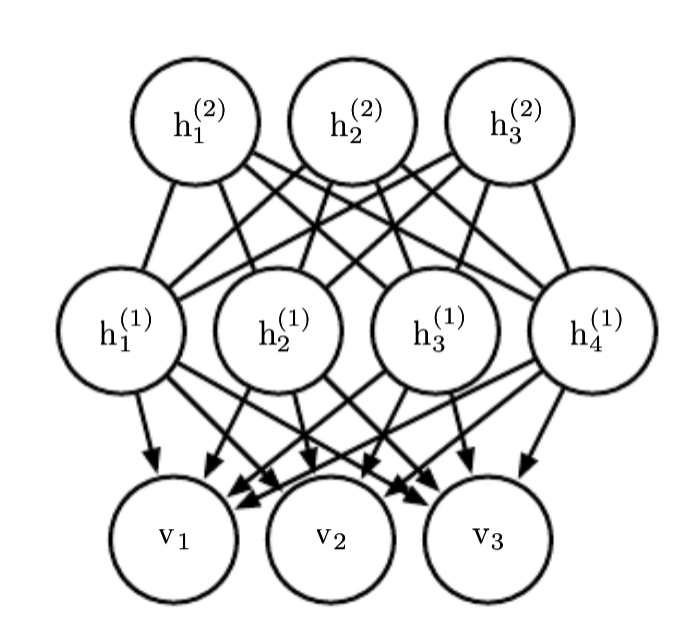

- They are generative models with several layers of latent (hidden) variables. The latent variables are typically binary, while the visible units may be binary or real-valued.

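- Every unit in each layer is connected to every unit in each neighboring layer, but there are no connections within a layer. The connections between the top two layers are undirected; the connections between all other layers are directed, pointing toward the layer closest to the data.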

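- Consider a DBN with visible units $v$ and $l$ hidden layers $h^{(1)}, \dots, h^{(l)}$, weight matrices $W^{(1)}, \dots, W^{(l)}$ (with $W^{(k)}$ having one row per unit of layer $k$ and one column per unit of layer $k-1$), and bias vectors $b^{(0)}, \dots, b^{(l)}$, where $b^{(0)}$ is the visible-layer bias and $\sigma$ is the logistic sigmoid. The probability distribution represented by the DBN is given by

$$P(h^{(l)}, h^{(l-1)}) \propto \exp\left(b^{(l)\top} h^{(l)} + b^{(l-1)\top} h^{(l-1)} + h^{(l)\top} W^{(l)} h^{(l-1)}\right),$$

$$P(h_i^{(k)} = 1 \mid h^{(k+1)}) = \sigma\left(b_i^{(k)} + W_{:,i}^{(k+1)\top} h^{(k+1)}\right) \quad \forall i,\ \forall k \in 1, \dots, l-2,$$

$$P(v_i = 1 \mid h^{(1)}) = \sigma\left(b_i^{(0)} + W_{:,i}^{(1)\top} h^{(1)}\right) \quad \forall i.$$

- In the case of real-valued visible units, we substitute

$$v \sim \mathcal{N}\left(v;\ b^{(0)} + W^{(1)\top} h^{(1)},\ \beta^{-1}\right)$$

  with the precision matrix $\beta$ diagonal for tractability.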
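To make the layered structure concrete, here is a minimal NumPy sketch of one common way to draw a sample from a DBN with binary units: run Gibbs sampling in the top two layers (which together behave like an RBM), then make a single top-down pass through the directed layers. The names `sample_dbn`, `weights`, `biases`, and `gibbs_steps` are hypothetical, and the shape convention (each weight matrix has one row per upper-layer unit and one column per lower-layer unit) is an assumption made for this illustration, not something the notes above prescribe.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def bernoulli(p, rng):
    # Sample independent binary units with activation probabilities p.
    return (rng.random(p.shape) < p).astype(float)


def sample_dbn(weights, biases, gibbs_steps=50, rng=None):
    """Draw one binary visible vector from a DBN (illustrative sketch).

    Assumed layout: weights[k] connects layer k (lower) to layer k + 1 (upper)
    and has shape (n_upper, n_lower); biases[k] is the bias of layer k, with
    layer 0 being the visible layer. So len(biases) == len(weights) + 1.
    """
    rng = np.random.default_rng() if rng is None else rng
    n_hidden_layers = len(weights)
    W_top, b_top, b_below = weights[-1], biases[-1], biases[-2]

    # Top two layers: undirected connections, so alternate Gibbs updates.
    h_below = bernoulli(np.full(b_below.shape, 0.5), rng)
    for _ in range(gibbs_steps):
        h_top = bernoulli(sigmoid(b_top + W_top @ h_below), rng)
        h_below = bernoulli(sigmoid(b_below + W_top.T @ h_top), rng)

    # Remaining layers: directed connections, so one ancestral (top-down) pass.
    h = h_below
    for k in range(n_hidden_layers - 2, -1, -1):
        h = bernoulli(sigmoid(biases[k] + weights[k].T @ h), rng)
    return h  # state of layer 0, i.e. the visible units


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    sizes = [6, 5, 4, 3]  # visible layer followed by three hidden layers
    weights = [rng.normal(size=(sizes[k + 1], sizes[k])) for k in range(3)]
    biases = [rng.normal(size=n) for n in sizes]
    print(sample_dbn(weights, biases, gibbs_steps=20, rng=rng))
```

With a single hidden layer the top-down loop is skipped and the sketch reduces to Gibbs sampling in an RBM. For real-valued visible units, the final Bernoulli draw would presumably be replaced by a Gaussian draw around the same linear mean; that variant is not shown here.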
