Early Stopping in Deep Learning

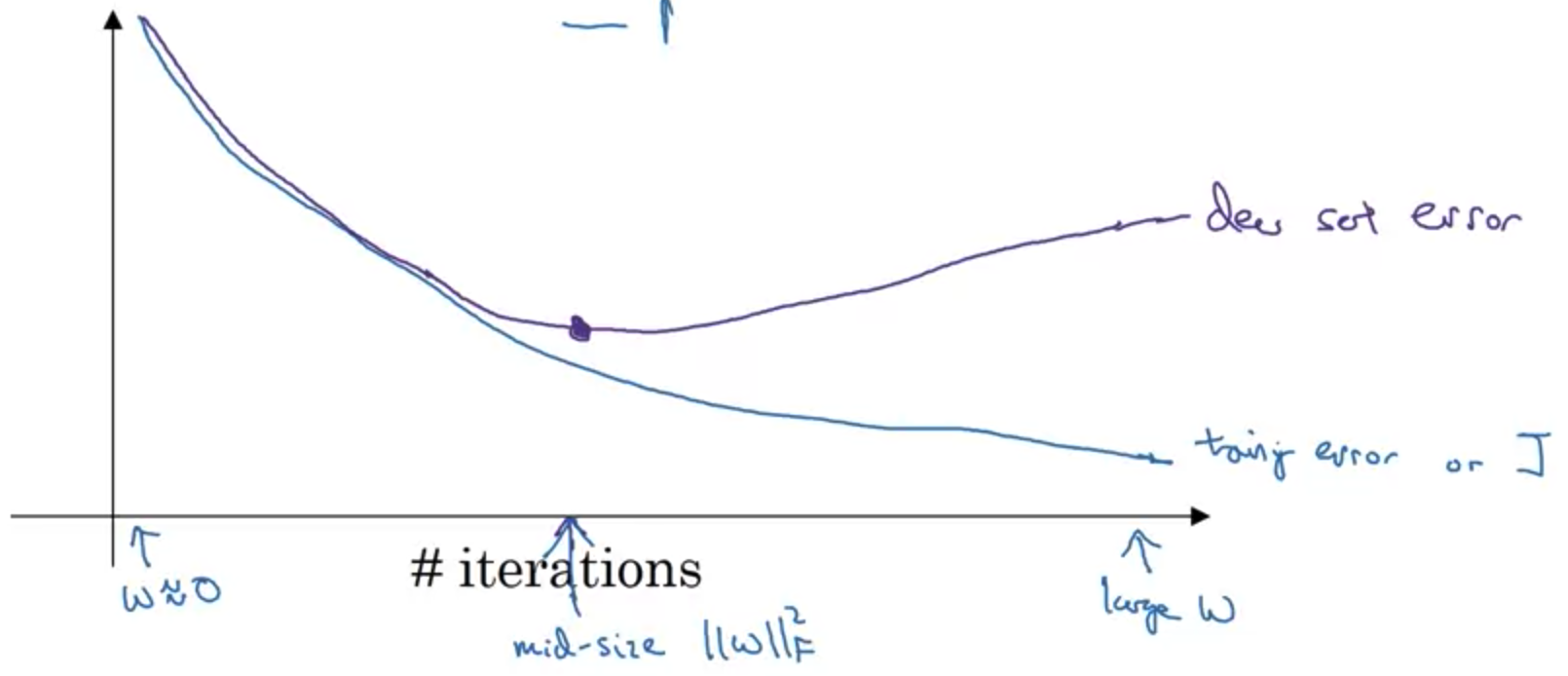

Early stopping is a classic regularization technique for deep neural networks that mitigates overfitting by limiting the number of training epochs rather than directly penalizing weight values. It is motivated by the observation that neural networks tend to fit cleanly labeled data before they memorize noisy labels. Since the best stopping point is not known in advance, the usual practice is to monitor error on a held-out validation set and halt training once it stops improving; the model then avoids interpolating the noise, which tends to improve generalization.
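One common way to decide when to halt is a patience criterion on validation loss. The following is a minimal sketch, not a definitive implementation: the function name `early_stopping_epoch`, the parameters `patience` and `min_delta`, and the sample loss values are all assumptions made for illustration.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a sequence of per-epoch validation losses.

    Training is considered stopped once the loss has failed to improve by more
    than `min_delta` for `patience` consecutive epochs. Epochs are 0-indexed.
    """
    best_loss = float("inf")
    best_epoch = -1
    epochs_without_improvement = 0

    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            # New best validation loss: remember it and reset the counter.
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch, best_epoch

    # The criterion never triggered: training ran through every epoch.
    return len(val_losses) - 1, best_epoch


# Hypothetical validation curve: it improves, then rises as overfitting sets in.
val_losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.51, 0.56, 0.60]
stop_epoch, best_epoch = early_stopping_epoch(val_losses, patience=3)
print(stop_epoch, best_epoch)
```

In a real training loop, the losses would arrive one epoch at a time, and the model weights saved at `best_epoch` would typically be restored after stopping. A larger `patience` tolerates noisier validation curves at the cost of extra epochs.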

Related

Data Augmentation in Deep Learning

Dropout Regularization in Deep Learning

Which of these techniques are useful for reducing variance (reducing overfitting)?

ElasticNet Regression

If your Neural Network model seems to have high variance, what of the following would be promising things to try?

Regularization in ML and DL

Bagging in Deep Learning

Dropout in Deep Learning

Normalization of Data

Tangent Distance Algorithm

Tangent Propagation Algorithm

Manifold Tangent Classifier

Boosting in Deep Learning

Appropriate Regularization/ Representation

Weight Decay

L1 Regularization
