Learn Before

Experiment Outcomes

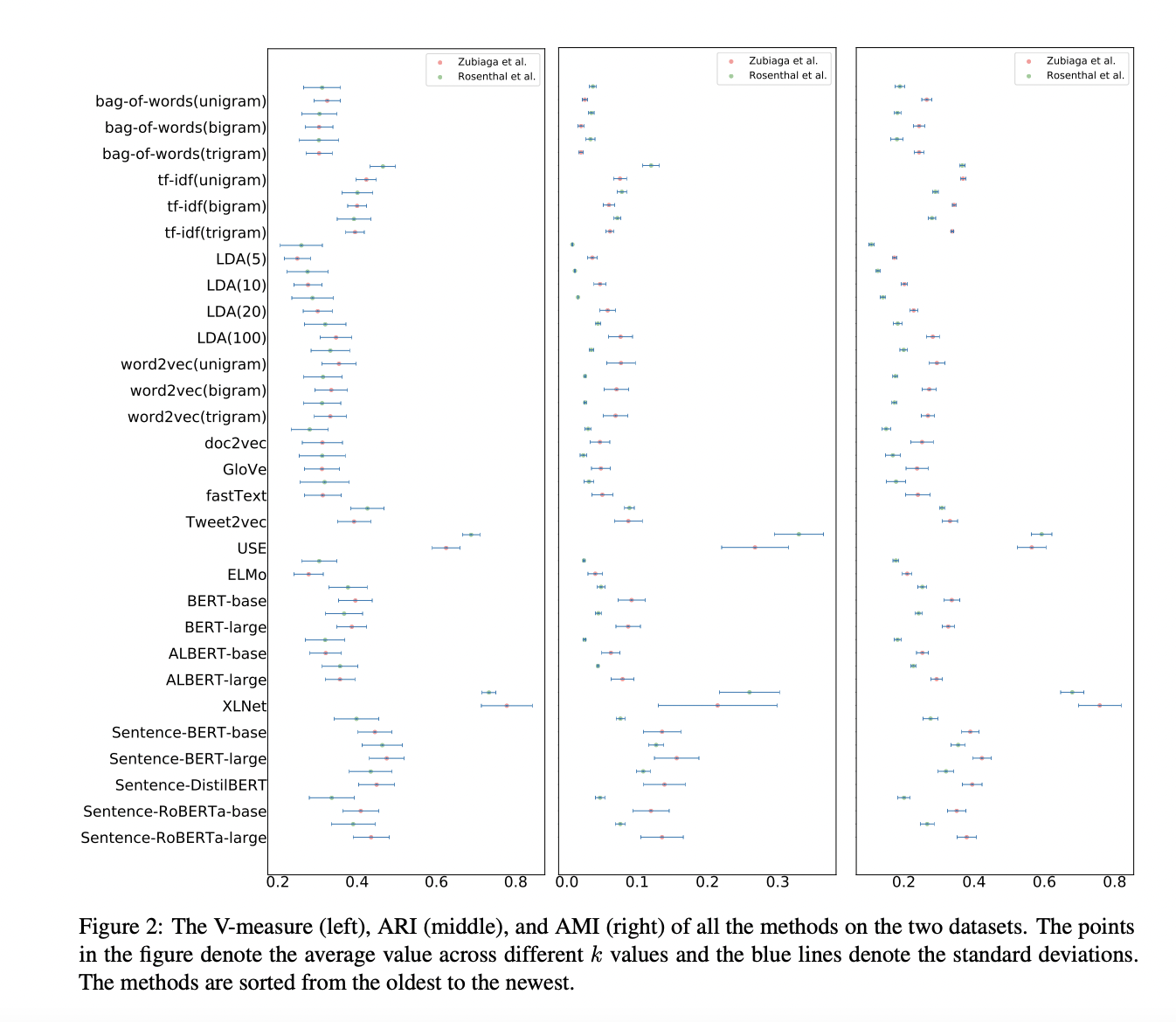

There is no clear trend of improvement in capturing tweet representations: the more advanced models are not necessarily the best. Notably, the BERT family of large-scale pre-trained language models (ALBERT, Sentence-BERT, etc.) does not vastly or consistently outperform much simpler methods such as bag-of-words and TF-IDF. XLNet, on the other hand, appears to be the best-performing method for capturing tweet representations, followed closely by USE. Interestingly, XLNet is also the most volatile with respect to the choice of k in our clustering. We suspect that XLNet outperforms models of comparable complexity, such as BERT, because it uses permutation language modeling, which allows tokens to be predicted in a random order.
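To make the idea of predicting tokens in random order concrete, here is a minimal sketch in plain Python. It is a toy illustration only, not XLNet's implementation and not part of the experiments: the function name `permutation_prediction_steps`, the `seed` parameter, and the example tweet tokens are all hypothetical. It samples one factorization order and lists, for each step, which tokens would be visible as context and which token would be the prediction target.

```python
import random


def permutation_prediction_steps(tokens, seed=0):
    """Return (context, target) pairs for one randomly sampled prediction order.

    Hypothetical helper for illustration; a real model would learn to predict
    each target from its context rather than just enumerate the pairs.
    """
    rng = random.Random(seed)
    order = list(range(len(tokens)))
    rng.shuffle(order)  # one sampled factorization order over positions

    steps = []
    for t, pos in enumerate(order):
        # Context = positions that come earlier in the sampled order,
        # listed here in their original sentence order for readability.
        context_positions = sorted(order[:t])
        context = [(i, tokens[i]) for i in context_positions]
        target = (pos, tokens[pos])
        steps.append((context, target))
    return steps


if __name__ == "__main__":
    # Made-up example tokens, not taken from the experiment's data.
    tweet = ["storm", "hits", "the", "coast", "tonight"]
    for context, target in permutation_prediction_steps(tweet, seed=1):
        print(f"predict {target} given {context}")
```

Because the order is sampled rather than fixed left to right, a token's context can include words from both sides of it, which is the property the explanation above appeals to. Different seeds give different orders.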

Tags

Data Science