Concept

Goal of Continuation Methods

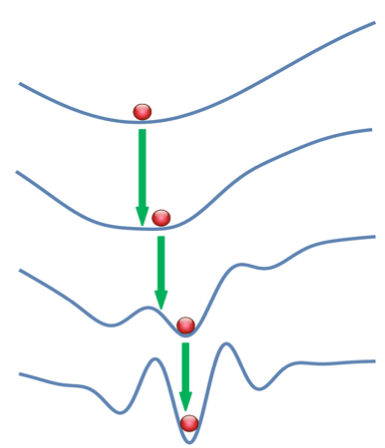

The goal of minimizing a cost function $J(\theta)$ is pursued as follows. A sequence of cost functions $\{J^{(0)}, \dots, J^{(n)}\}$ of increasing difficulty is constructed over the same parameters, such that $J^{(0)}$ is the easiest to optimize and $J^{(n)}$, the most difficult, is the original cost function $J(\theta)$.

What does “easier to optimize” mean?

- It means that the function is better behaved, i.e., smoother, over the same region of parameter space.

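Minimizing an easier cost function gives a good starting point for the next, harder cost function in the sequence. Repeating this step along the whole sequence should bring us very close to a solution of the original optimization problem.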
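The warm-starting idea above can be sketched on a one-dimensional toy problem. Everything in this example is an assumption made for illustration: the cost `x^2 + A*(1 - cos(B*x))`, the constants `A` and `B`, the blur schedule `sigmas`, and the step size. This particular cost was chosen because its Gaussian-blurred gradient has a closed form, so no sampling is needed here.

```python
import math

A, B = 10.0, 2.0 * math.pi  # hypothetical constants of the toy cost


def grad_blurred(x, sigma):
    """Gradient of the Gaussian-blurred toy cost J(x) = x^2 + A*(1 - cos(B*x)).

    Blurring with standard deviation sigma damps the cosine term by
    exp(-(B*sigma)^2 / 2); sigma = 0 gives the gradient of the original cost.
    """
    damping = math.exp(-0.5 * (B * sigma) ** 2)
    return 2.0 * x + A * B * damping * math.sin(B * x)


def gradient_descent(x, sigma, lr=0.002, steps=2000):
    """Plain gradient descent on the cost blurred at level sigma."""
    for _ in range(steps):
        x -= lr * grad_blurred(x, sigma)
    return x


def continuation(x, sigmas=(1.0, 0.5, 0.25, 0.1, 0.0)):
    """Solve the easiest (most blurred) problem first, then warm-start each
    harder problem from the previous solution; the last stage is the
    original, unblurred cost."""
    for sigma in sigmas:
        x = gradient_descent(x, sigma)
    return x


x_plain = gradient_descent(3.3, sigma=0.0)  # descent on the original cost only
x_cont = continuation(3.3)                  # descent along the blurred sequence
print(f"plain gradient descent ends at x = {x_plain:.4f}")
print(f"continuation ends at          x = {x_cont:.4f}")
```

In this toy setting, plain gradient descent started at 3.3 settles in the nearby local minimum close to 3, while the continuation run reaches the minimum at 0. That outcome depends on the chosen schedule and starting point and should not be read as a general guarantee.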

How do we get cost functions to behave well in the same region?

- By “blurring” the cost function, i.e., averaging it over nearby parameter values, with the average approximated via sampling. The intended effect is that the blurred non-convex problem starts to look like a convex one.
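A minimal sketch of blurring by sampling, assuming Gaussian noise is added to the parameter and the cost is averaged over the noisy copies. The toy `cost`, the noise level `sigma`, the sample count, and the fixed seed are all hypothetical choices for illustration, not part of the original method description.

```python
import numpy as np


def cost(theta):
    """Hypothetical non-convex toy cost with many local minima."""
    return theta**2 + 10.0 * (1.0 - np.cos(2.0 * np.pi * theta))


def blurred_cost(theta, sigma, n_samples=100_000, seed=0):
    """Monte Carlo estimate of the cost averaged over theta + Gaussian noise.

    A fixed seed reuses the same noise draws for every theta, so estimates
    at different points are directly comparable.
    """
    rng = np.random.default_rng(seed)
    noise = rng.normal(0.0, sigma, size=n_samples)
    return float(np.mean(cost(theta + noise)))


for theta in (0.0, 0.5, 1.0):
    print(theta, float(cost(theta)), blurred_cost(theta, sigma=1.0))
```

The original cost has a bump at 0.5 that separates the minima near 0 and 1. With `sigma=1.0` the oscillating term averages out almost entirely, so the estimate rises steadily from 0 to 1, much like a plain quadratic. A smaller `sigma` would keep more of the original structure, which is what the later, harder cost functions in the sequence are meant to do.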

It is important to note that this method generally does not find the global minimum, but it does tend to find a better local minimum.

Updated 2021-06-24

Tags

Data Science