
Implementing Multi-task Learning in Deep Learning

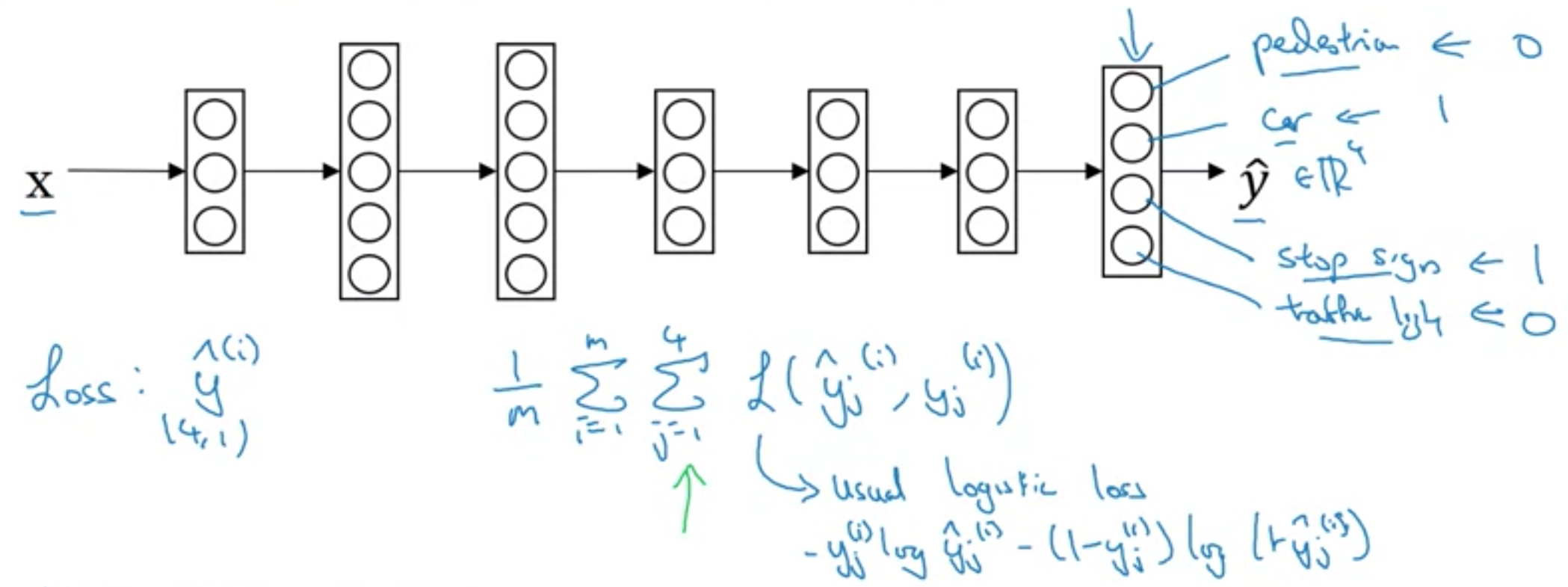

Instead of a single output, the final layer produces a vector of binary outputs, one per task. For any data point, any number of these outputs can be 0 or 1. This contrasts with a softmax output layer, where exactly one label can be true.
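A minimal NumPy sketch of that contrast follows. It assumes a hypothetical final layer that emits four logits. Applying a sigmoid to each logit independently lets several labels be predicted at once. A softmax over the same logits instead forces them into one distribution that sums to 1. The 0.5 threshold is an illustrative choice, not something the text prescribes.

```python
import numpy as np

def sigmoid(z):
    # Element-wise logistic function: each output is squashed into (0, 1) independently
    return 1.0 / (1.0 + np.exp(-z))

def softmax(z):
    # Subtract the max for numerical stability; outputs sum to 1 across the vector
    e = np.exp(z - np.max(z))
    return e / e.sum()

# Hypothetical final-layer pre-activations (logits) for 4 tasks/labels
logits = np.array([2.0, -1.0, 0.5, 3.0])

multi_label = sigmoid(logits)    # independent per-label probabilities
single_label = softmax(logits)   # one distribution over mutually exclusive classes

# Thresholding each sigmoid output at 0.5 lets several labels be "on" at once
predicted = (multi_label >= 0.5).astype(int)
print(predicted)                     # [1 0 1 1]
print(round(single_label.sum(), 6))  # 1.0
```

The sigmoid outputs here sum to more than 1. That is expected, because each one is a separate yes/no probability rather than a share of a single distribution.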

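Because the outputs are treated as (largely) independent binary predictions, each output unit uses a sigmoid activation. The loss is the logistic loss familiar from logistic regression, applied to every output and summed. The only difference from logistic regression is that y is now a vector of binary labels rather than a single label.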
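In our own notation (not from the original), let m be the number of training examples and K the number of outputs. The cost can then be written as a sketch:

$$J = \frac{1}{m}\sum_{i=1}^{m}\sum_{j=1}^{K} \mathcal{L}\big(\hat{y}^{(i)}_j, y^{(i)}_j\big), \qquad \mathcal{L}(\hat{y}, y) = -\big[y \log \hat{y} + (1 - y)\log(1 - \hat{y})\big]$$

The NumPy function below implements that formula directly. `multitask_bce_loss` is a hypothetical name, and the clipping constant `eps` is an assumption added only to avoid `log(0)`.

```python
import numpy as np

def multitask_bce_loss(y_hat, y, eps=1e-12):
    """Average over examples of the summed per-output logistic loss.

    y_hat: (m, K) array of sigmoid outputs in (0, 1)
    y:     (m, K) array of 0/1 labels; each row may contain several 1s
    """
    # Clip predictions so log(0) never occurs
    y_hat = np.clip(y_hat, eps, 1.0 - eps)
    # Logistic (binary cross-entropy) loss for every (example, output) pair
    per_entry = -(y * np.log(y_hat) + (1 - y) * np.log(1 - y_hat))
    # Sum over the K outputs, then average over the m examples
    return per_entry.sum(axis=1).mean()

# Two hypothetical examples with K = 3 binary labels each
y = np.array([[1, 0, 1],
              [0, 1, 1]])
y_hat = np.array([[0.9, 0.2, 0.8],
                  [0.1, 0.7, 0.6]])
print(multitask_bce_loss(y_hat, y))
```

Summing over the K outputs (rather than taking a softmax cross-entropy) is what lets each task contribute its own independent term to the gradient.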

