Information Entropy

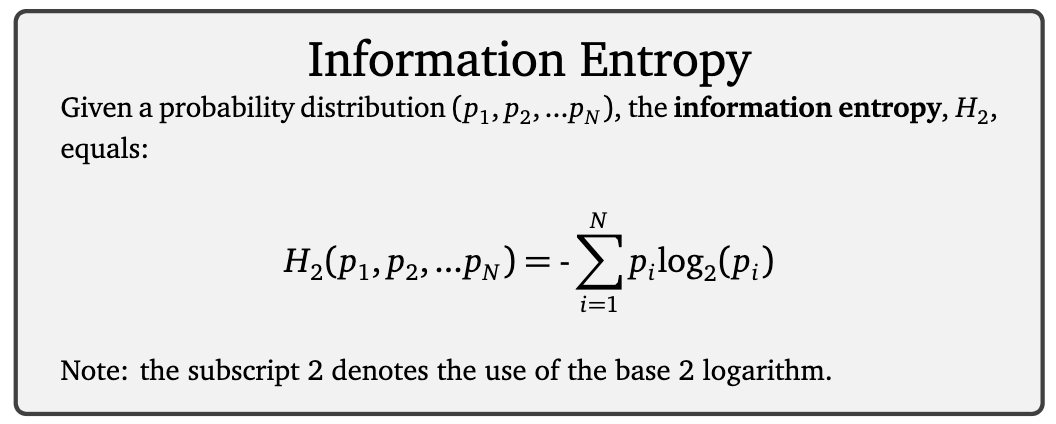

A measure of uncertainty, expressed as the equivalent number of flips of a fair coin (bits). Low entropy means the outcome is nearly certain, so observing it reveals little information (e.g., the sun rising in the east). High entropy means the outcomes are very uncertain, so observing the actual outcome reveals a lot of information (e.g., a lottery draw). For example, we would need to ask N yes/no questions to learn the sexes of N children, assuming each sex is equally likely. N questions distinguish $2^N$ potential outcome sequences (N binary random events: N flips of a fair coin). Each outcome sequence has probability $p = \frac{1}{2^N}$, which converts back to the number of questions as $N = -\log_2\left(\frac{1}{2^N}\right) = -\log_2(p)$. In general, $-\log_2(p)$ approximates the number of yes/no questions needed to identify an outcome of probability $p$.
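The link between an outcome's probability and the number of yes/no questions can be checked numerically. The sketch below is illustrative only: the function names `surprisal_bits` and `entropy_bits` are hypothetical, and `entropy_bits` uses the standard Shannon formula $H = -\sum_i p_i \log_2(p_i)$, which the text above does not spell out. For N fair coin flips, both values should equal N.

```python
import math


def surprisal_bits(p: float) -> float:
    """Information (in bits) revealed by an outcome of probability p: -log2(p)."""
    return -math.log2(p)


def entropy_bits(probs: list[float]) -> float:
    """Shannon entropy (in bits): the probability-weighted average surprisal."""
    # Outcomes with zero probability contribute nothing to the sum.
    return sum(p * surprisal_bits(p) for p in probs if p > 0)


# N children (or N fair coin flips): 2**N equally likely outcome sequences.
N = 3
p_sequence = 1 / 2**N
uniform = [p_sequence] * 2**N

print(surprisal_bits(p_sequence))  # bits needed to identify one sequence
print(entropy_bits(uniform))       # entropy of the whole distribution

# A near-certain event (low entropy) versus a fair coin (high entropy).
print(entropy_bits([0.99, 0.01]))
print(entropy_bits([0.5, 0.5]))
```

With equally likely outcomes the two quantities coincide; when the probabilities are unequal, the entropy falls below the number of bits a fair coin would give, which matches the low-entropy case described above.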

Tags

Complex Systems

Information Theory

Information Science

Social Science

Systems

Physical Science

Empirical Science

Science

Data Science