Introduce weight matrices in the transformer

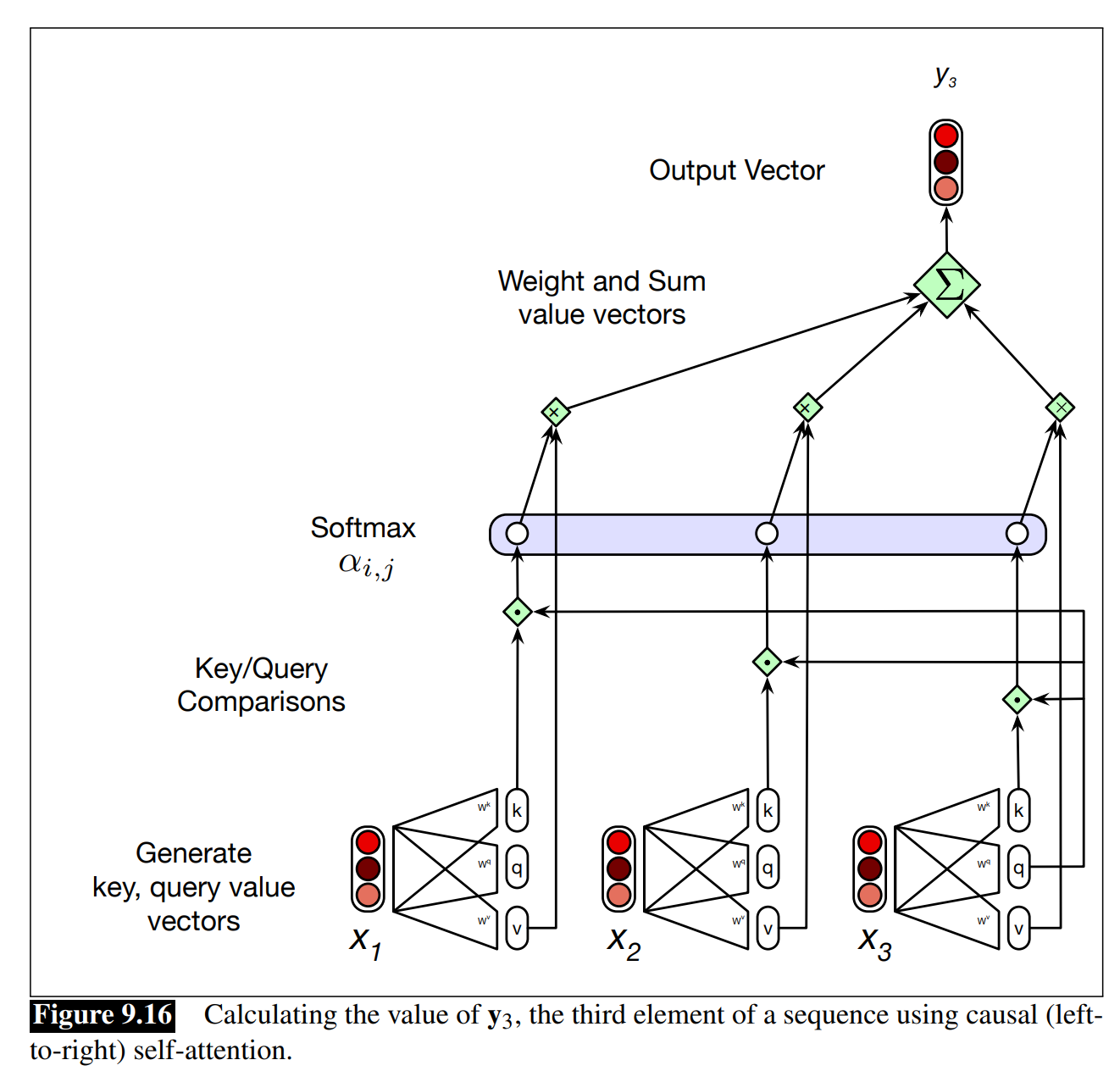

After weight matrices are introduced into the transformer, the output $y_i$ is computed as a weighted sum over the value vectors rather than over the raw inputs. The weights come from dot products between query and key vectors. A dot product can be arbitrarily large in magnitude, and exponentiating large values in the softmax can cause an effective loss of gradients during training, so the dot product needs to be scaled in a suitable fashion:

$$\text{score}(x_i, x_j) = \frac{q_i \cdot k_j}{\sqrt{d_k}}$$

where $q_i$ is the query vector, $k_j$ is a preceding element's key vector, and $d_k$ is the dimensionality of the query and key vectors.

Taking this one step further, we can compute all outputs at once: scale the scores, take the softmax, and multiply the result by $V$:

$$\text{softmax}\left(\frac{QK^\top}{\sqrt{d_k}}\right)V$$

The result is a matrix of shape $N \times d$: a vector embedding representation for each token in the input. This is the self-attention computation of the transformer. Since each layer must compute a dot product between every pair of tokens in the input, the cost grows quadratically with the input length $N$, which makes long documents extremely expensive as transformer input.
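The steps described above can be sketched in plain Python. This is a minimal illustration, not a reference implementation: the names `W_q`, `W_k`, `W_v`, the helper functions, the toy sizes, and the random inputs are all assumptions made for the example. It follows the per-token view, where the output for position `i` attends only to positions `j <= i`.

```python
import math
import random

def matvec(x, W):
    """Project row vector x (length d_in) with matrix W (d_in x d_out)."""
    return [sum(x[r] * W[r][c] for r in range(len(x))) for c in range(len(W[0]))]

def softmax(scores):
    """Softmax with the max subtracted for numerical stability."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def causal_self_attention(X, W_q, W_k, W_v):
    """Return (outputs, weights) for a sequence X of input vectors."""
    # Project every input into query, key, and value vectors.
    Q = [matvec(x, W_q) for x in X]
    K = [matvec(x, W_k) for x in X]
    V = [matvec(x, W_v) for x in X]
    d_k = len(Q[0])
    outputs, all_weights = [], []
    for i, q in enumerate(Q):
        # Scaled dot-product score against each key at position j <= i.
        scores = [
            sum(a * b for a, b in zip(q, K[j])) / math.sqrt(d_k)
            for j in range(i + 1)
        ]
        weights = softmax(scores)
        # Output y_i is the weighted sum over the value vectors.
        y = [
            sum(weights[j] * V[j][c] for j in range(i + 1))
            for c in range(len(V[0]))
        ]
        outputs.append(y)
        all_weights.append(weights)
    return outputs, all_weights

def random_matrix(rows, cols):
    """Hypothetical stand-in for learned parameters: random Gaussian entries."""
    return [[random.gauss(0, 1) for _ in range(cols)] for _ in range(rows)]

random.seed(0)
N, d_model, d_k, d_v = 4, 6, 3, 5  # toy sizes chosen for illustration
X = random_matrix(N, d_model)
W_q = random_matrix(d_model, d_k)
W_k = random_matrix(d_model, d_k)
W_v = random_matrix(d_model, d_v)

Y, A = causal_self_attention(X, W_q, W_k, W_v)
print(len(Y), len(Y[0]))  # 4 5: one output vector of size d_v per token
```

The nested loop over `i` and `j` makes the pairwise cost visible: every token is scored against every earlier token, which is why the work grows quadratically with the sequence length.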

Tags

Data Science

Ch.2 Generative Models - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Related

Parameter Matrices for Attention Transformations

Introduce weight matrices in the transformer

Calculating an Output Vector in a Simple Sequence Model

In a simple self-attention mechanism where similarity is measured by dot product and weights are normalized by a softmax function, if a current input vector $x_i$ is perfectly orthogonal to a preceding input vector $x_j$, then $x_j$ will have zero influence on the final output vector $y_i$.

You are calculating the output vector $y_i$ for a single input vector $x_i$ in a sequence using a simple self-attention mechanism that only considers preceding elements. Arrange the following computational steps in the correct chronological order.

Introduce weight matrices in the transformer

Generation of Query, Key, and Value Vectors in Self-Attention

In a self-attention mechanism, instead of directly comparing the raw input vectors of a sequence, each input vector is first multiplied by three separate, learned parameter matrices. This process creates three distinct representations of the original vector before they are used to calculate attention scores and output values. What is the primary analytical advantage of this approach over simply comparing the original input vectors to each other?

Learn After

An engineer is designing a self-attention layer for a text processing model. They notice that as they increase the dimensionality ($d_k$) of the query and key vectors, the training process becomes unstable, and the gradients used for learning become extremely small. Which of the following best explains this phenomenon and the standard solution implemented within the attention mechanism?

Calculating a Scaled Attention Score

A transformer's self-attention layer calculates an output vector for each input token. Arrange the following computational steps in the correct sequence to produce a single output vector, based on its query vector and the full set of key and value vectors for the input sequence.

Attention Score in Transformers ()