Learn Before

Self-attention layers' first approach

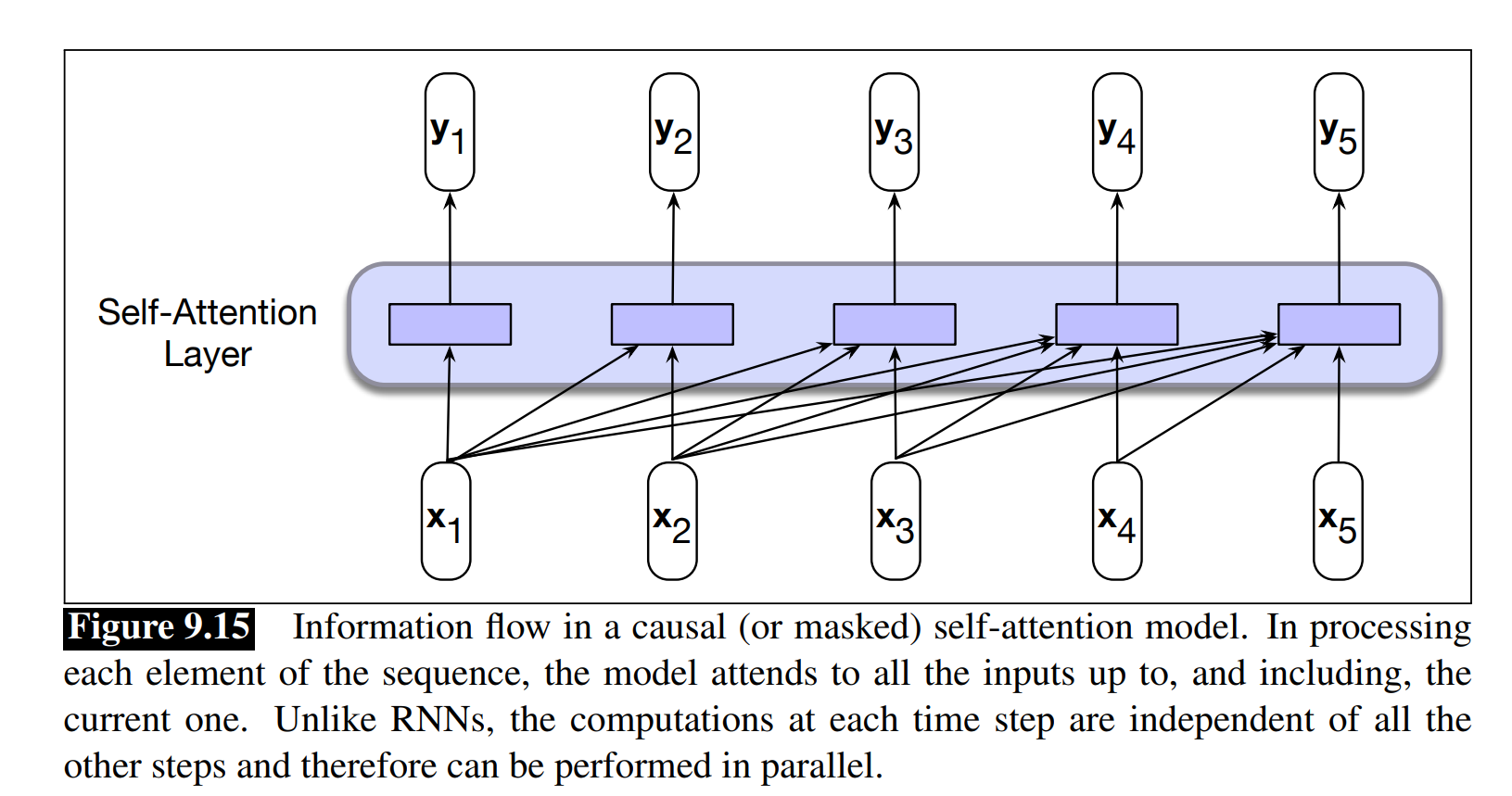

A self-attention layer maps an input sequence $(x_1, \ldots, x_n)$ to an output sequence of the same length, $(y_1, \ldots, y_n)$. When processing each item in the input, the model has access to all of the inputs up to and including the one under consideration, but no access to information about inputs beyond the current one. In self-attention, the comparisons are made to other elements within the same sequence. The simplest form of comparison between elements in a self-attention layer is a dot product:

$$\text{score}(x_i, x_j) = x_i \cdot x_j$$

The larger the value, the more similar the vectors being compared. To make effective use of these scores, we normalize them with a softmax to create a vector of weights, $\alpha_{ij}$, that indicates the proportional relevance of each input $x_j$ to the input element $i$ that is the current focus of attention:

$$\alpha_{ij} = \text{softmax}(\text{score}(x_i, x_j)) = \frac{\exp(\text{score}(x_i, x_j))}{\sum_{k=1}^{i} \exp(\text{score}(x_i, x_k))}, \quad j \le i$$

Given the proportional scores in $\alpha$, we then generate an output value $y_i$ by taking the sum of the inputs seen so far, weighted by their respective $\alpha$ value:

$$y_i = \sum_{j \le i} \alpha_{ij} x_j$$
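The computation described above (dot-product scores, a softmax over the inputs seen so far, then a weighted sum) can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: the function name `causal_self_attention` and the toy input vectors are assumptions made for this example, and there are no learned parameters.

```python
import math


def causal_self_attention(xs):
    """Parameter-free self-attention where position i only sees inputs j <= i.

    `xs` is a list of equal-length vectors (lists of floats).
    Hypothetical helper written for illustration only.
    """
    ys = []
    for i, x_i in enumerate(xs):
        visible = xs[: i + 1]  # inputs up to and including position i

        # Dot-product score between the current input and each visible input.
        scores = [sum(a * b for a, b in zip(x_i, x_j)) for x_j in visible]

        # Softmax over the visible scores (max subtracted for stability).
        top = max(scores)
        exps = [math.exp(s - top) for s in scores]
        total = sum(exps)
        alphas = [e / total for e in exps]

        # Output is the alpha-weighted sum of the visible inputs.
        y_i = [
            sum(alpha * x_j[d] for alpha, x_j in zip(alphas, visible))
            for d in range(len(x_i))
        ]
        ys.append(y_i)
    return ys


# Toy sequence of three 2-dimensional inputs (made up for this example).
xs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
ys = causal_self_attention(xs)
```

For the first position only one input is visible, so its weight is 1 and the first output equals the first input. Every later output is a convex combination of the inputs seen so far, because the softmax weights are positive and sum to 1.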

Tags

Data Science

Ch.2 Generative Models - Foundations of Large Language Models

Foundations of Large Language Models

Foundations of Large Language Models Course

Computing Sciences

Related

Self-attention layers' first approach

Transformers in contextual generation and summarization

Huggingface Model Summary

A Survey of Transformers (Lin et al., 2021)

Model Usage of Transformers

Attention in vanilla Transformers

Transformer Variants (X-formers)

The Pre-training and Fine-tuning Paradigm

Architectural Categories of Pre-trained Transformers

Computational Cost of Self-Attention in Transformers

Quadratic Complexity's Impact on Transformer Inference Speed

Pre-Norm Architecture in Transformers

Critique of the Transformer Architecture's Core Limitation

A research team is building a model to summarize extremely long scientific papers. They are comparing two distinct architectural approaches:

- Approach 1: Processes the input text sequentially, token by token, updating an internal state that is passed from one step to the next.

- Approach 2: Processes all input tokens simultaneously, using a mechanism that directly relates every token to every other token in the input to determine context.

Which of the following statements best analyzes the primary trade-off between these two approaches for this specific task?

Architectural Design Choice for Machine Translation

Enablers of Universal Language Capabilities

Model Depth in Transformers

Generalization of the Language Modeling Concept

Transformer Block Sub-Layers

Standard Optimization Objective for Transformer Language Models

Scalability in Vision Transformers

Transformer Architecture Overview

Patch Embedding in Vision Transformers

Decoder-Only Transformer Architecture

Parti

Text-to-Image Model

Attention Weight Matrix (α)

Sparse Attention

Self-attention layers' first approach

In a general attention mechanism, the output is calculated as a weighted sum of the Value vectors, where the weights are determined by the interaction between Query and Key vectors. The standard formula is: . Consider a scenario where this formula is mistakenly altered to be: . What is the most significant consequence of this modification?

Dimensional Analysis of the Attention Formula

Applying the Attention Mechanism Roles

Self-Attention Output Formula for a Single Query

Self-Attention Output Formula

Learn After

Parameter Matrices for Attention Transformations

Introduce weight matrices in the transformer

Calculating an Output Vector in a Simple Sequence Model

In a simple self-attention mechanism where similarity is measured by dot product and weights are normalized by a softmax function, if a current input vector $x_i$ is perfectly orthogonal to a preceding input vector $x_j$, then $x_j$ will have zero influence on the final output vector $y_i$.

You are calculating the output vector $y_i$ for a single input vector $x_i$ in a sequence using a simple self-attention mechanism that only considers preceding elements. Arrange the following computational steps in the correct chronological order.