Learn Before

KNN Regression

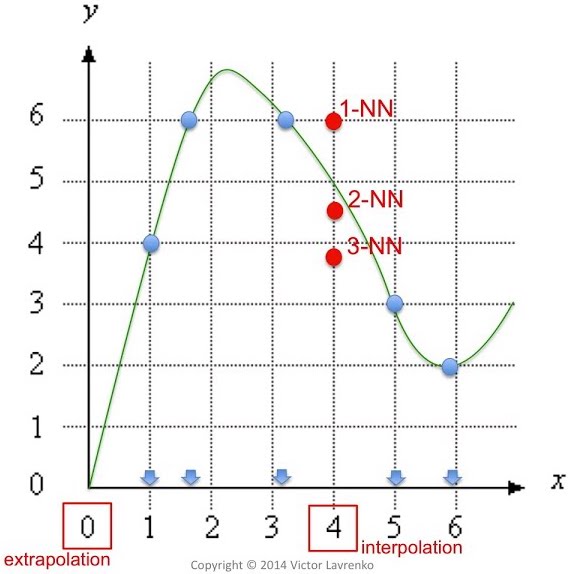

KNN regression is based on ‘feature similarity’: the output value predicted for a new observation depends on how closely that observation resembles points in the training data set. Similarity is measured by the distance, typically Euclidean, between the new observation and each training point. The prediction is then the average of the target values of the k nearest training points.
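As a minimal sketch of this idea, the snippet below predicts a value by averaging the targets of the k closest training points under Euclidean distance. The function name `knn_regress` and the toy data are hypothetical and chosen only for illustration. It uses the standard library alone (Python 3.8+ for `math.dist`) and is not meant as a reference implementation.

```python
import math

def knn_regress(train_X, train_y, query, k=3):
    """Predict a value for `query` as the mean target of its k nearest training points."""
    if not 1 <= k <= len(train_X):
        raise ValueError("k must be between 1 and the number of training points")
    # Euclidean distance from the query to every training point, paired with its target
    distances = [(math.dist(x, query), y) for x, y in zip(train_X, train_y)]
    # Keep the k closest points (sorted by distance only)
    nearest = sorted(distances, key=lambda pair: pair[0])[:k]
    # Unweighted average of the neighbors' target values
    return sum(y for _, y in nearest) / k

# Toy data, made up for illustration
train_X = [(1.0, 1.0), (2.0, 2.0), (3.0, 3.0), (8.0, 8.0)]
train_y = [10.0, 20.0, 30.0, 80.0]

prediction = knn_regress(train_X, train_y, query=(2.0, 2.0), k=3)
print(prediction)  # 20.0: mean of the targets 20, 10 and 30
```

In this toy set the far-away point at (8, 8) does not affect the prediction for k = 3, but it would pull the average upward for k = 4. A library implementation such as scikit-learn's `KNeighborsRegressor` would normally be preferred in practice.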


Tags

Data Science

Related

Reference Video: K-Nearest Neighbors

Medium: Difference between K-Means and KNN

Math/Python Explanation: Difference Between K-Means and KNN

Machine Learning Basics with KNN Algorithm

KNN Regression

A Practical Introduction to K-Nearest Neighbors Algorithm for Regression (Reference)

KNN in practice

Reference video: K-Nearest Neighbors: Classification and Regression

sklearn.neighbors.KNeighborsClassifier

Classification Algorithm of K-Nearest Neighbors

K-Nearest Neighbors Advantages and Disadvantages

What class would a KNeighborsClassifier classify the new point as for k = 1 and k = 3?

Which of the following is true for the nearest neighbor classifier?

1-Nearest Neighbor Algorithm

Distance Metric Inductive Bias