Logistic Regression Cost Function

To train the parameters w and b of the logistic regression model, you need to define a cost function that measures how well the model's predictions ŷ match the true labels y across the training set.

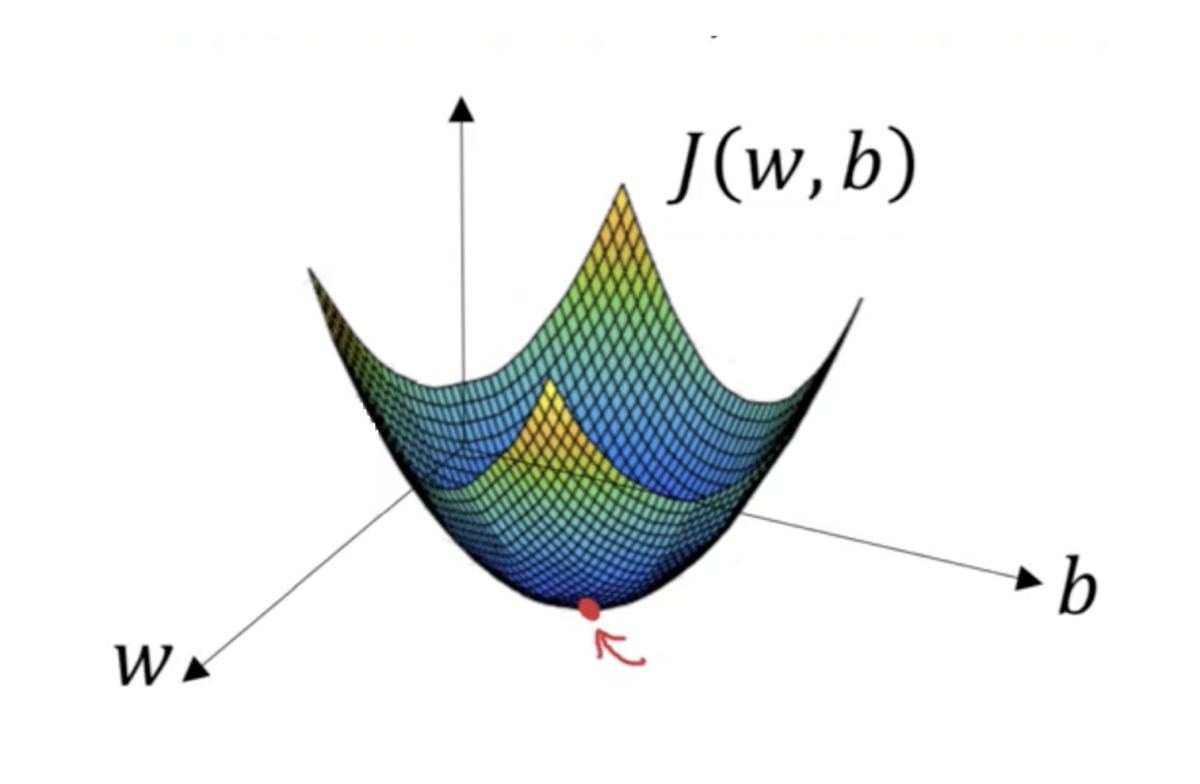

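This cost function is convex in the parameters, so it has a single global minimum and gradient descent cannot get trapped in a separate local minimum.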
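The image's formula is not reproduced in the text, so the following is only a minimal sketch. It assumes the standard definition in which the cost is the average, over m training examples, of the per-example loss L(ŷ, y) = -(y*log(ŷ) + (1 - y)*log(1 - ŷ)). The function names and the `eps` clipping are illustrative assumptions, not part of the original.

```python
import math

def sigmoid(z):
    # Numerically stable logistic function: avoids overflow for large |z|.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def loss(y_hat, y, eps=1e-12):
    # Per-example logarithmic loss; eps (an assumption) keeps log() away from 0.
    y_hat = min(max(y_hat, eps), 1.0 - eps)
    return -(y * math.log(y_hat) + (1 - y) * math.log(1 - y_hat))

def cost(w, b, X, Y):
    # Average loss over all m examples, with y_hat = sigmoid(w . x + b).
    m = len(X)
    total = 0.0
    for x, y in zip(X, Y):
        z = sum(wj * xj for wj, xj in zip(w, x)) + b
        total += loss(sigmoid(z), y)
    return total / m
```

With all parameters set to zero, every prediction is 0.5, so `cost([0.0], 0.0, [[1.0], [2.0]], [0, 1])` evaluates to log(2) regardless of the labels.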

Tags

Data Science

Related

Logistic regression loss function vs. cost function

Logistic Regression Cost Function

A machine learning model is trained for a binary classification task where the goal is to predict a label y (either 0 or 1). The model's prediction, ŷ, is a probability between 0 and 1. The performance on a single example is measured using the loss function L(ŷ, y) = -(y*log(ŷ) + (1 - y)*log(1 - ŷ)). Consider two scenarios for an example where the true label y is 1:

- Scenario A: The model predicts ŷ = 0.9.
- Scenario B: The model predicts ŷ = 0.1.

Which scenario results in a higher loss value, and why?
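The two scenarios can be compared numerically. This is a minimal sketch, assuming the natural logarithm; the function name `log_loss` is illustrative.

```python
import math

def log_loss(y_hat, y):
    # L(y_hat, y) = -(y*log(y_hat) + (1 - y)*log(1 - y_hat))
    return -(y * math.log(y_hat) + (1 - y) * math.log(1 - y_hat))

loss_a = log_loss(0.9, 1)  # about 0.105: confident and correct
loss_b = log_loss(0.1, 1)  # about 2.303: confident and wrong
print(loss_a < loss_b)     # True
```

When y is 1, only the -log(ŷ) term remains, so the loss grows as the predicted probability of the true class shrinks toward 0.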

When training a logistic regression model for binary classification, the standard approach is to use the logarithmic loss function L(ŷ, y) = -(y*log(ŷ) + (1 - y)*log(1 - ŷ)). An alternative could be the squared error loss L(ŷ, y) = (ŷ - y)². What is the primary reason the logarithmic loss is preferred for this task?

Calculating Loss for a Single Prediction

Logistic Regression Gradient Descent Derivation

What are the parameters of logistic regression?

Logistic Regression Loss Function

Logistic Regression Cost Function

Artificial Neural Networks Formulation

Cross-entropy loss

Logistic Regression Cost Function

A machine learning model is being trained for a prediction task. A key metric, the objective function, is tracked over time. The value of this function represents the magnitude of the model's error. A graph of this process shows the objective function's value consistently decreasing as the number of training iterations increases. What is the most accurate interpretation of this trend?

Diagnosing Model Training Issues

Calculating and Interpreting a Model's Objective Function

Surrogate Objective

Loss Function

Differentiable Objectives

Second-Order Optimization Algorithm

Objective Function Curvature

Convex Quadratic Objective Function