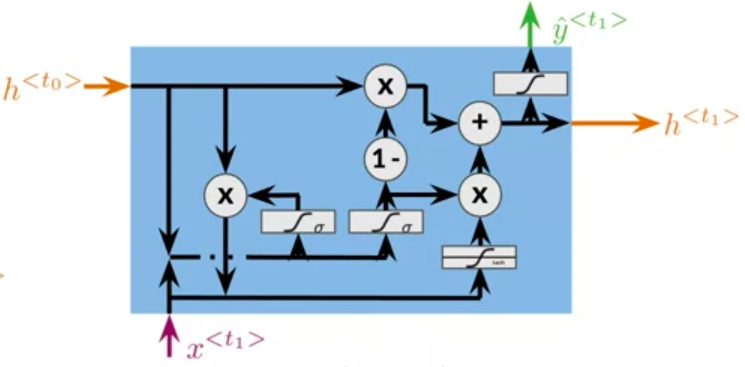

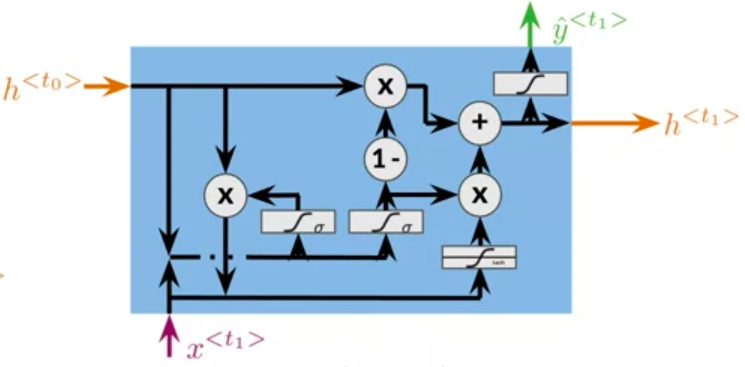

Math behind GRUs

The reset/relevance gate is

\Gamma_r=\sigma(W_r[h^{
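
The formula above is cut off after `h^{` in the source. As a hedged sketch, and assuming the page follows the common GRU formulation (this is an assumption, not something recovered from the page), the reset gate applies a sigmoid to a linear map of the previous hidden state concatenated with the current input, $\Gamma_r=\sigma(W_r[h^{\langle t-1\rangle}, x^{\langle t\rangle}] + b_r)$. The names `reset_gate`, `h_prev`, `x_t`, and `b_r` below are illustrative, and the weights are made-up numbers chosen only to make the arithmetic easy to follow.

```python
import math


def sigmoid(z):
    """Logistic function; squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def reset_gate(W_r, b_r, h_prev, x_t):
    """Compute a reset gate under the assumed standard GRU form.

    W_r    : list of rows, each of length len(h_prev) + len(x_t)
    b_r    : list of biases, one per row of W_r (assumed bias term)
    h_prev : previous hidden state
    x_t    : current input
    """
    concat = list(h_prev) + list(x_t)  # [h_prev, x_t]
    gate = []
    for row, bias in zip(W_r, b_r):
        pre_activation = sum(w * v for w, v in zip(row, concat)) + bias
        gate.append(sigmoid(pre_activation))
    return gate


# Illustrative values only (hypothetical, not from the source).
h_prev = [0.5, -0.5]
x_t = [1.0]
W_r = [
    [0.0, 0.0, 0.0],  # pre-activation 0 -> gate value 0.5
    [1.0, 1.0, 2.0],  # pre-activation 0.5 - 0.5 + 2.0 = 2.0
]
b_r = [0.0, 0.0]

gamma_r = reset_gate(W_r, b_r, h_prev, x_t)
print(gamma_r)
```

Each entry of `gamma_r` lies strictly between 0 and 1. In the usual GRU formulation, values near 0 suppress the corresponding component of the previous hidden state when the candidate state is formed, and values near 1 let it through.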


Updated 2026-05-14


Tags

Data Science

D2L

Dive into Deep Learning @ D2L
