Matrix Multiplication Implementation for the forward prop

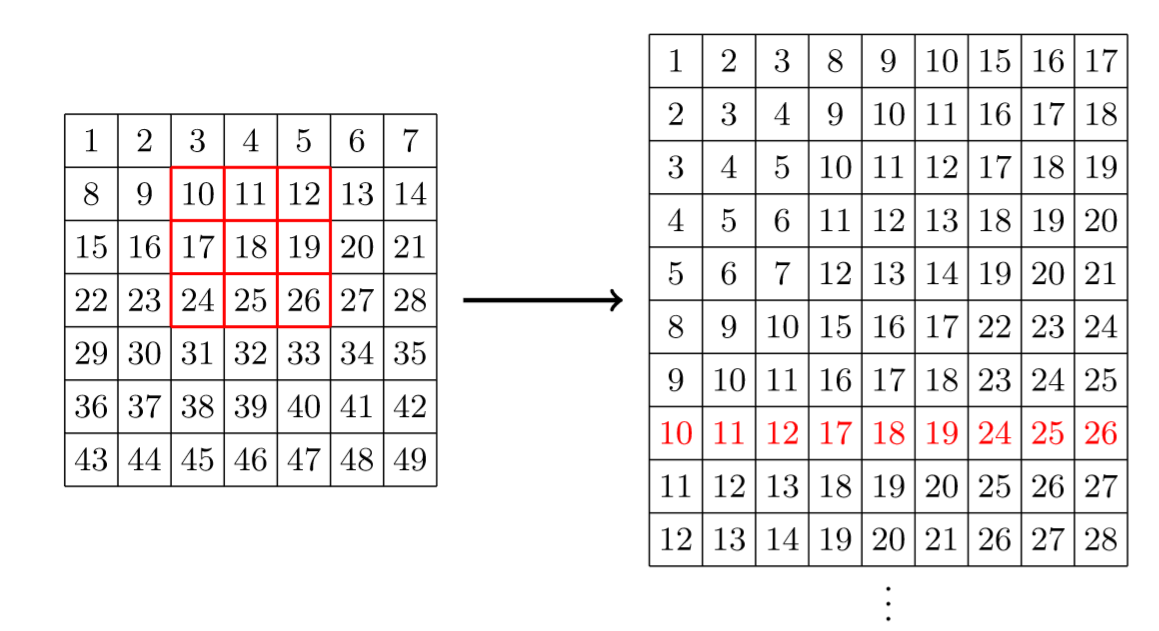

The intuition behind this approach is to build a new matrix in which each row is one flattened receptive field. If we multiply that matrix by the kernel, flattened in the same order, we get the flattened output, so all that is left is a reshape. The image shows an example of such a matrix. Let's go over a code sample taken from the source (slightly changed for simplicity) that creates this matrix for the case of stride 1 and no padding.
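To make the idea concrete before the vectorized version, here is a minimal sketch that builds the matrix with plain slicing. The 4x4 input and the 2x2 kernel are made-up toy values, not taken from the source, and the product is the unflipped sliding-window product that is usual in deep learning.

```python
import numpy as np

# Hypothetical toy data: one 4x4 single-channel image and a 2x2 kernel.
x = np.arange(16, dtype=float).reshape(4, 4)
k = np.array([[1.0, 0.0],
              [0.0, -1.0]])

n1, n2 = x.shape
k1, k2 = k.shape
out_h, out_w = n1 - k1 + 1, n2 - k2 + 1  # stride 1, no padding

# One row per receptive field, each field flattened row by row.
rows = np.array([x[i:i + k1, j:j + k2].ravel()
                 for i in range(out_h)
                 for j in range(out_w)])

# A single matrix-vector product gives the flattened output; reshape it back.
out = (rows @ k.ravel()).reshape(out_h, out_w)

print(rows.shape)  # (9, 4)
print(out)         # every entry is -5.0, since x[i, j] - x[i + 1, j + 1] = -5
```

The slicing loop is slow but easy to check by eye, which makes it a handy reference for the index-based construction.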

    import numpy as np

    k1, k2 = k.shape          # kernel height and width (kernel k)
    mb, ch, n1, n2 = x.shape  # mini-batch size, channels, height and width (input x)

Here we just define the dimension variables.

    start_idx = np.array([j + n2 * i for i in range(n1 - k1 + 1) for j in range(n2 - k2 + 1)])
    # Output, e.g. for a 7x7 input and a 3x3 kernel: array([0, 1, 2, 3, 4, 7, ..., 32])

This is the list of flat indices, within a single channel, of every position that can be the top-left (0, 0) element of a receptive field.
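A small sketch can make these starting indices easier to read. The sizes below are hypothetical and chosen only to keep the output short; `np.unravel_index` converts each flat index back to a (row, column) pair.

```python
import numpy as np

n1, n2 = 4, 5  # hypothetical input height and width
k1, k2 = 2, 3  # hypothetical kernel height and width

start_idx = np.array([j + n2 * i
                      for i in range(n1 - k1 + 1)
                      for j in range(n2 - k2 + 1)])
print(start_idx)  # [ 0  1  2  5  6  7 10 11 12]

# Convert the flat indices back to (row, column) to see the corners.
rows, cols = np.unravel_index(start_idx, (n1, n2))
corners = list(zip(rows.tolist(), cols.tolist()))
print(corners)  # [(0, 0), (0, 1), (0, 2), (1, 0), ..., (2, 2)]
```

The indices skip 3, 4, 8, 9 and so on because a 3-wide kernel placed in those columns would run past the right edge of the input.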

    grid = np.array([j + n2 * i + n1 * n2 * c for c in range(ch) for i in range(k1) for j in range(k2)])
    # Output for one channel, an input of width 7 and a 3x3 kernel: array([0, 1, 2, 7, 8, 9, 14, 15, 16])

These are the flat offsets of every element of a receptive field relative to its top-left element, in the order in which the field is flattened (channel, then row, then column).
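As a quick sanity check of these offsets, the sketch below uses hypothetical sizes with two channels and confirms that indexing the flattened input with the offsets reproduces the receptive field anchored at the top-left corner.

```python
import numpy as np

ch, n1, n2 = 2, 4, 4  # hypothetical channels, height and width
k1, k2 = 2, 2         # hypothetical kernel size

grid = np.array([j + n2 * i + n1 * n2 * c
                 for c in range(ch)
                 for i in range(k1)
                 for j in range(k2)])
print(grid)  # [ 0  1  4  5 16 17 20 21]

# The offsets pick out the first receptive field across all channels.
x = np.arange(ch * n1 * n2).reshape(ch, n1, n2)
first_field = x.ravel()[grid]
print(np.array_equal(first_field, x[:, :k1, :k2].ravel()))  # True
```

The jump from 5 to 16 is the channel stride `n1 * n2`, which is how a single row ends up covering every channel of the field.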

    to_take = start_idx[:, None] + grid[None, :]  # shape: (number of receptive fields, ch * k1 * k2)

Here we add every starting index to every offset of the flattened receptive field. Broadcasting gives a matrix in which each row lists the indices of one receptive field, now relative to the flattened x (for a single sample). We can then use the NumPy function take, which receives an array of indices and returns an array of the same shape in which every index is replaced by the value at that position in the flattened input.
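The broadcasting step and `take` can be tried in isolation. In this sketch the 4x4 input and the three starting indices are arbitrary illustrative values; the input holds the values 10 to 25 so that indices and values are easy to tell apart.

```python
import numpy as np

x = np.arange(10, 26).reshape(4, 4)  # hypothetical input with values 10..25

start_idx = np.array([0, 1, 4])   # three top-left corners (flat indices)
grid = np.array([0, 1, 4, 5])     # offsets of a 2x2 field in a width-4 input

# (3, 1) + (1, 4) broadcasts to a (3, 4) matrix of flat indices.
to_take = start_idx[:, None] + grid[None, :]
print(to_take)
# [[0 1 4 5]
#  [1 2 5 6]
#  [4 5 8 9]]

# With no axis argument, take indexes the flattened array and
# returns an array with the same shape as the index matrix.
patches = x.take(to_take)
print(patches)
# [[10 11 14 15]
#  [11 12 15 16]
#  [14 15 18 19]]
```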

    batch = np.arange(mb) * ch * n1 * n2  # flat offset of each sample in the mini-batch

These are the flat offsets at which each sample of the mini-batch starts in the flattened x.

    x.take(batch[:, None, None] + to_take[None, :, :])  # shape: (mb, number of receptive fields, ch * k1 * k2)

Finally, we apply take, which returns the matrix we need for the convolution: for every sample, one row per flattened receptive field.
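Putting the steps together, here is a minimal sketch of the whole forward pass for stride 1 and no padding. The function name `im2col`, the random test data and the multi-channel kernel shape `(ch, k1, k2)` are assumptions made for this illustration, not part of the source code. The result is compared against a direct sliding-window loop.

```python
import numpy as np

def im2col(x, k1, k2):
    """Gather every k1 x k2 receptive field of x (stride 1, no padding).

    x has shape (mb, ch, n1, n2); the result has shape
    (mb, out_h * out_w, ch * k1 * k2).
    """
    mb, ch, n1, n2 = x.shape
    start_idx = np.array([j + n2 * i
                          for i in range(n1 - k1 + 1)
                          for j in range(n2 - k2 + 1)])
    grid = np.array([j + n2 * i + n1 * n2 * c
                     for c in range(ch)
                     for i in range(k1)
                     for j in range(k2)])
    to_take = start_idx[:, None] + grid[None, :]
    batch = np.arange(mb) * ch * n1 * n2
    return x.take(batch[:, None, None] + to_take[None, :, :])

rng = np.random.default_rng(0)
x = rng.standard_normal((2, 3, 5, 6))  # hypothetical mini-batch
w = rng.standard_normal((3, 2, 2))     # hypothetical kernel, shape (ch, k1, k2)

cols = im2col(x, 2, 2)                 # shape (2, 20, 12)
out = (cols @ w.ravel()).reshape(2, 4, 5)

# Reference: slide the kernel over the input with explicit loops.
ref = np.zeros((2, 4, 5))
for b in range(2):
    for i in range(4):
        for j in range(5):
            ref[b, i, j] = np.sum(x[b, :, i:i + 2, j:j + 2] * w)

print(cols.shape)             # (2, 20, 12)
print(np.allclose(out, ref))  # True
```

Flattening the kernel with `w.ravel()` works because `grid` enumerates each field in the same channel, row, column order.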
