Learn Before

Mini example

Here’s the algorithm in detail for our mini example:

-

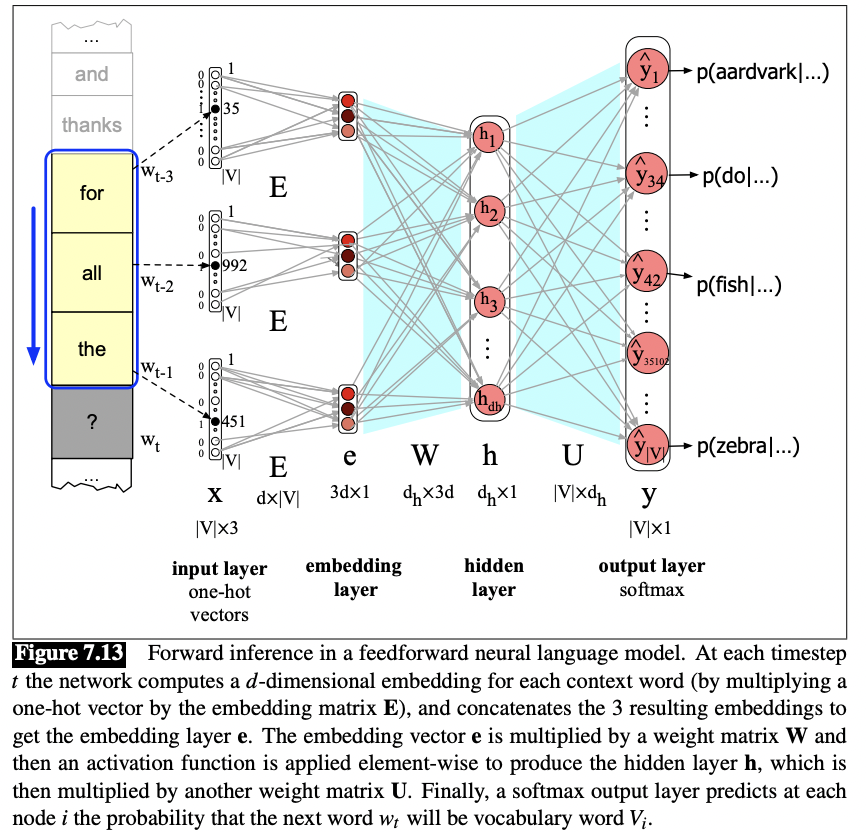

Select three embeddings from E: Given the three previous words, we look up their indices, create 3 one-hot vectors, and then multiply each by the embedding matrix E. Consider wt−3. The one-hot vector for ‘for’ (index 35) is multiplied by the embedding matrix E, to give the first part of the first hidden layer, the embedding layer. Since each column of the input matrix E is an embedding for a word, and the input is a one-hot column vector xi for word Vi, the embedding layer for input w will be Exi = ei, the embedding for word i. We now concatenate the three embeddings for the three context words to produce the embedding layer e.

- Multiply by W: We multiply $e$ by $W$, add $b$, and pass the result through the ReLU (or other) activation function to get the hidden layer $h$.

- Multiply by U: $h$ is now multiplied by $U$.

- Apply softmax: After the softmax, each node $i$ in the output layer estimates the probability $P(w_t = i \mid w_{t-1}, w_{t-2}, w_{t-3})$.
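The first step can be checked numerically. The sketch below is illustrative only and uses plain Python lists: the toy sizes `V` and `d`, the random values in `E`, and the context indices 9 and 7 are assumptions made for the demonstration (only index 35 for ‘for’ comes from the text). It shows that multiplying `E` by a one-hot vector returns the matching column of `E`, and that the embedding layer is the concatenation of the three results.

```python
import random

def one_hot(index, vocab_size):
    """Return the one-hot vector x_i as a list: 1.0 at `index`, 0.0 elsewhere."""
    x = [0.0] * vocab_size
    x[index] = 1.0
    return x

def matvec(M, x):
    """Multiply matrix M (a list of rows) by vector x."""
    return [sum(m * v for m, v in zip(row, x)) for row in M]

def column(M, j):
    """Return column j of matrix M."""
    return [row[j] for row in M]

rng = random.Random(0)
V, d = 50, 4  # toy vocabulary size and embedding dimension (assumed)
# E is d x |V|: one column per vocabulary word.
E = [[rng.uniform(-1.0, 1.0) for _ in range(V)] for _ in range(d)]

# Index 35 ('for') comes from the text; 9 and 7 are made-up indices.
context_ids = [35, 9, 7]  # w_{t-3}, w_{t-2}, w_{t-1}

# E x_i for each context word.
embeddings = [matvec(E, one_hot(i, V)) for i in context_ids]

# Multiplying by a one-hot vector selects the corresponding column of E.
for emb, i in zip(embeddings, context_ids):
    assert all(abs(a - c) < 1e-12 for a, c in zip(emb, column(E, i)))

# Concatenate the three embeddings into the embedding layer e (length 3d).
e = [value for emb in embeddings for value in emb]
print(len(e))  # 12
```

Because the product only ever selects a column, practical implementations usually skip the one-hot multiplication and index into `E` directly.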

In summary, the equations for a neural language model with a window size of 3, given one-hot input vectors for each input context word, are:

$$
\begin{aligned}
e &= [Ex_{t-3}; Ex_{t-2}; Ex_{t-1}] \\
h &= \sigma(We + b) \\
z &= Uh \\
\hat{y} &= \mathrm{softmax}(z)
\end{aligned}
\tag{7.22}
$$

Note that we formed the embedding layer $e$ by concatenating the embeddings of the three context words; we’ll often use semicolons to mean concatenation of vectors.
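The four equations can be traced end to end in a short forward pass. This is a minimal sketch under stated assumptions, not a reference implementation: the sizes `V`, `d`, and `d_h`, the random untrained weights, the context indices 9 and 7, and the helper names (`forward`, `rand_matrix`) are all hypothetical. ReLU stands in for the activation σ, and the one-hot multiplication is replaced by the equivalent column lookup.

```python
import math
import random

def matvec(M, x):
    """Multiply matrix M (a list of rows) by vector x."""
    return [sum(m * v for m, v in zip(row, x)) for row in M]

def relu(v):
    """Element-wise ReLU, used here as the activation sigma."""
    return [max(0.0, a) for a in v]

def softmax(z):
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(z)
    exps = [math.exp(a - m) for a in z]
    total = sum(exps)
    return [a / total for a in exps]

def rand_matrix(rows, cols, rng):
    """Random matrix with entries in [-0.5, 0.5] (untrained, for illustration)."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def forward(context_ids, E, W, b, U):
    """Forward pass of the window-3 feedforward language model."""
    # e = [E x_{t-3}; E x_{t-2}; E x_{t-1}]: column lookup replaces one-hot multiply.
    e = [row[i] for i in context_ids for row in E]
    # h = sigma(W e + b)
    h = relu([a + bias for a, bias in zip(matvec(W, e), b)])
    # z = U h
    z = matvec(U, h)
    # y_hat = softmax(z)
    return softmax(z)

rng = random.Random(0)
V, d, d_h = 50, 4, 8               # assumed toy sizes
E = rand_matrix(d, V, rng)         # d x |V| embedding matrix
W = rand_matrix(d_h, 3 * d, rng)   # d_h x 3d
b = [0.0] * d_h                    # hidden-layer bias
U = rand_matrix(V, d_h, rng)       # |V| x d_h

# Index 35 ('for') comes from the text; 9 and 7 are made-up indices.
y_hat = forward([35, 9, 7], E, W, b, U)

# y_hat[i] estimates P(w_t = i | w_{t-1}, w_{t-2}, w_{t-3}).
predicted = max(range(V), key=lambda i: y_hat[i])
print(len(y_hat), predicted)
```

The shapes follow from the equations: with embedding dimension `d`, the concatenated layer `e` has length `3d`, so `W` must have `3d` columns, and `U` must have one row per vocabulary word so that `y_hat` is a distribution over the vocabulary.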


Tags

Data Science

Related

Advantages vs. Disadvantages

Mini example

Bengio et al. (2003) Feed-Forward Neural Language Model

A language model is designed using a feedforward network architecture. It is trained to predict the next word by looking at a fixed-size window of the N preceding words (e.g., N=4). What is the most significant architectural limitation of this approach for modeling language?

Consider a feedforward neural network designed to predict the next word based on a fixed window of the three preceding words. Arrange the following computational steps in the correct order, from initial input to final output.

Function of Word Vector Representations