Neural contextual encoders

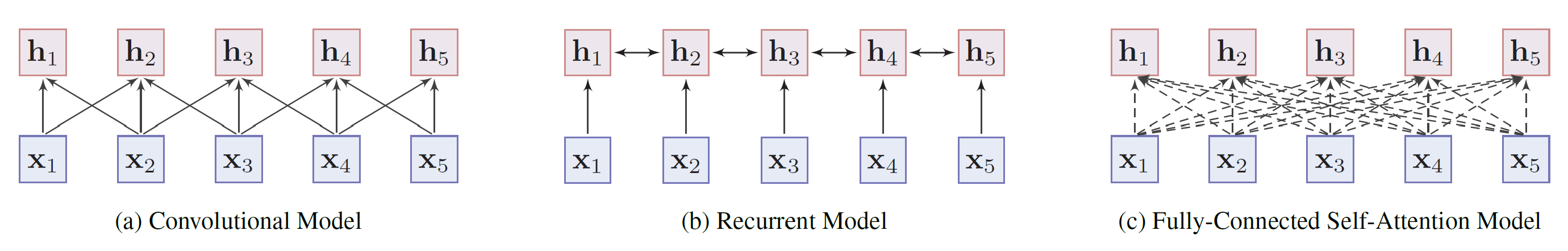

Most neural contextual encoders fall into one of two categories: sequence models and non-sequence models.

- Sequence models: Capture a word's local context in sequential order. Examples: convolutional models (capture the meaning of a word by aggregating information from its neighbors) and recurrent models (capture contextual representations of words with short memory). Because they learn each word's contextual representation with a locality bias, they have difficulty capturing long-range interactions between words, but they are usually easy to train.

- Non-sequence models: Learn the contextual representation with a pre-defined tree or graph structure between words, such as a syntactic structure or semantic relation. Example: the fully-connected self-attention model, which uses a fully-connected graph to model the relation between every pair of words and lets the model learn the structure by itself.
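The contrast between the two categories can be illustrated with a minimal sketch. It is not a trained encoder: the function names `conv_encode` and `self_attention_encode`, the window size, and the toy vectors are all assumptions made for illustration. Real convolutional and self-attention models use learned filters and learned query/key/value projections, which are omitted here.

```python
import math

def conv_encode(vectors, window=1):
    """Local-window encoder (hypothetical helper): each output is the average
    of the input vectors within `window` positions of the current word."""
    n = len(vectors)
    dim = len(vectors[0])
    out = []
    for i in range(n):
        lo, hi = max(0, i - window), min(n, i + window + 1)
        neighbors = vectors[lo:hi]
        out.append([sum(v[d] for v in neighbors) / len(neighbors) for d in range(dim)])
    return out

def self_attention_encode(vectors):
    """Fully-connected encoder (hypothetical helper): each output is a
    softmax-weighted sum over ALL positions, using scaled dot-product scores.
    No learned projections are applied in this sketch."""
    dim = len(vectors[0])
    scale = math.sqrt(dim)
    out = []
    for q in vectors:
        scores = [sum(a * b for a, b in zip(q, k)) / scale for k in vectors]
        m = max(scores)  # subtract the max for numerical stability
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        out.append([sum(w * v[d] for w, v in zip(weights, vectors)) for d in range(dim)])
    return out

# Toy 2-dimensional "word vectors" for a 4-token sentence (made-up values).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.0, 0.0]]
local_repr = conv_encode(tokens, window=1)
global_repr = self_attention_encode(tokens)
```

In this sketch, changing the last token leaves the local-window representation of the first token unchanged, because the last token lies outside its window. The self-attention representation of the first token does change, because every word attends to every other word.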

Tags

Data Science

Related

Pre-trained Models for Natural Language Processing: A Survey

Word embedding (NLP) definition

Model analysis: Knowledge captured by PTMs

Evolution of Word Embedding Techniques

Shift from Word to Sequence Representations

Evolution and Adoption of Word Embeddings

An engineer is developing a language model for a vocabulary of 100,000 unique words. They are considering two approaches for representing words as input to the model: a one-hot encoding scheme (where each word is a 100,000-dimensional vector with a single '1' and the rest '0's) and a pre-trained 300-dimensional word embedding scheme. Which of the following statements provides the most accurate analysis of the primary advantage of using the word embedding approach in this scenario?

Analyzing Word Representation Methods

Improving Model Generalization

Learning Word Embeddings via Word Prediction Tasks

Sequence Representation via Language Models

Word Vectors

Subword Embedding