Purpose and Structure of the Feed-Forward Network (FFN) in Transformers

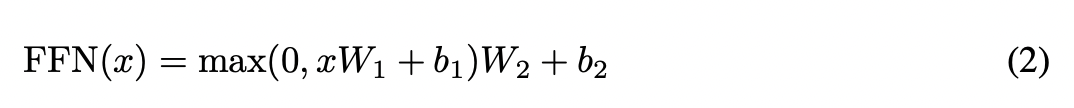

In Transformer models, the Feed-Forward Network (FFN) sub-layer introduces non-linearity into the representation learning process. This non-linearity helps prevent the representations learned by the self-attention mechanism from degenerating. Structurally, a standard FFN consists of two fully connected layers: the first applies a non-linear activation function such as ReLU, and the second is purely linear. The same FFN is applied to each position's representation independently.
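The two-layer structure described above can be sketched in a few lines of plain Python. This is a minimal illustration, assuming the row-vector form FFN(h) = ReLU(h W1 + b1) W2 + b2, a ReLU activation, and hand-picked toy weights (model dimension 2, hidden size 3); the function names `matvec`, `relu`, `ffn`, and `ffn_sequence` are hypothetical and not taken from any library.

```python
# Minimal sketch of a position-wise FFN: FFN(h) = ReLU(h W1 + b1) W2 + b2.
# Assumptions: row-vector convention, ReLU activation, toy hand-picked weights.

def matvec(h, W, b):
    """Compute h @ W + b for a row vector h (length d_in) and W of shape d_in x d_out."""
    return [sum(h[i] * W[i][j] for i in range(len(h))) + b[j] for j in range(len(b))]

def relu(v):
    # Element-wise non-linearity applied after the first layer only.
    return [max(0.0, x) for x in v]

def ffn(h, W1, b1, W2, b2):
    # First layer: linear map up to the hidden size, followed by the non-linearity.
    hidden = relu(matvec(h, W1, b1))
    # Second layer: purely linear map back to the model dimension.
    return matvec(hidden, W2, b2)

def ffn_sequence(H, W1, b1, W2, b2):
    # The same weights are applied to every position, each one independently.
    return [ffn(h, W1, b1, W2, b2) for h in H]

# Toy sizes (hypothetical): model dimension 2, hidden size 3.
W1 = [[1.0, -1.0, 0.5],
      [0.0, 2.0, -1.0]]
b1 = [0.0, -1.0, 0.0]
W2 = [[1.0, 0.0],
      [0.0, 1.0],
      [1.0, 1.0]]
b2 = [0.0, 0.0]

H = [[1.0, 1.0], [2.0, -1.0]]  # two positions, one row each
print(ffn_sequence(H, W1, b1, W2, b2))  # → [[1.0, 0.0], [4.0, 2.0]]
```

Because `ffn_sequence` processes each row on its own, running it on the second position alone yields the same `[4.0, 2.0]` it produces inside the full sequence.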

Tags

Data Science

Foundations of Large Language Models Course

Computing Sciences

Ch.2 Generative Models - Foundations of Large Language Models

Foundations of Large Language Models

D2L

Dive into Deep Learning @ D2L

Related

Multi-Head Self-Attention Function

A standard processing block in a Transformer model consists of two main sub-layers applied in sequence. The first sub-layer's primary role is to relate different positions of the input sequence to compute a new representation for each position. The second sub-layer then applies an identical non-linear transformation to each position's representation independently. How does the core computational function, denoted as F(·), implemented within each of these sub-layers, differ?

A standard processing block in a certain neural network architecture consists of two main sub-layers. Each sub-layer's computation can be described as applying a core function, F(·), within a structure that also includes a residual connection and layer normalization. Match each sub-layer type with the correct description of its core computational function, F(·).

Identifying Core Functions in a Transformer Block

Scaled Dot-Product Attention

Encoder Structure of Transformer

Decoder Structure of Transformer

Self-Attention as a Source of Inference Difficulty in Transformers
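
The questions above contrast the core function F(·) of the two sub-layers, each wrapped in a residual connection and layer normalization. The sketch below is a minimal illustration under several stated assumptions: the post-norm arrangement LayerNorm(x + F(x)), single-head scaled dot-product self-attention with identity query/key/value projections, layer normalization without learned gain or bias, and a toy FFN with diagonal weights (ReLU followed by scaling by 2). All function names are hypothetical. The point it shows is that the attention F(·) mixes information across all positions, while the FFN F(·) touches each position on its own.

```python
import math

def layer_norm(v, eps=1e-5):
    # Normalize one position's vector to zero mean and unit variance (no learned gain/bias here).
    mean = sum(v) / len(v)
    var = sum((x - mean) ** 2 for x in v) / len(v)
    return [(x - mean) / math.sqrt(var + eps) for x in v]

def sublayer(H, F):
    # Post-norm residual wrapper: row i becomes LayerNorm(H[i] + F(H)[i]).
    out = F(H)
    return [layer_norm([a + b for a, b in zip(x, y)]) for x, y in zip(H, out)]

def self_attention(H):
    # Simplified scaled dot-product self-attention with identity Q/K/V projections (assumption):
    # each output row is a softmax-weighted mix of ALL positions.
    d = len(H[0])
    result = []
    for q in H:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in H]
        m = max(scores)  # subtract the max for a numerically stable softmax
        w = [math.exp(s - m) for s in scores]
        z = sum(w)
        result.append([sum(w[j] / z * H[j][c] for j in range(len(H))) for c in range(d)])
    return result

def position_wise(H):
    # Toy FFN (hypothetical weights): ReLU, then a diagonal linear map that scales by 2.
    # Each row depends only on itself.
    return [[2.0 * max(0.0, x) for x in h] for h in H]

def block(H):
    # Attention sub-layer first, then the position-wise sub-layer.
    return sublayer(sublayer(H, self_attention), position_wise)

H = [[1.0, 0.0], [0.0, 1.0]]
print(block(H))
```

Changing the second row of the input alters the first row of `self_attention`'s output but leaves the first row of `position_wise`'s output unchanged. Pre-norm variants, which compute x + F(LayerNorm(x)) instead, are also common; the post-norm choice here is only for illustration.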

Learn After

Feed-Forward Network (FFN) Formula and Component Dimensions in Transformers

An engineer is building a deep neural network for sequence processing. Each layer of the network consists of a self-attention mechanism followed by a position-wise sub-layer. The engineer designs this position-wise sub-layer to be composed of two consecutive linear transformations. What is the most significant negative consequence of omitting a non-linear activation function between these two linear transformations?

Analysis of a Position-Wise Sub-Layer

A researcher modifies the position-wise sub-layer within a sequence processing model. The standard design for this sub-layer is a sequence of: a linear transformation, a non-linear activation, and a second linear transformation. The researcher's modification adds a second non-linear activation function immediately after the final linear transformation. Which of the following best evaluates the impact of this architectural change?

FFN Hidden Size in Transformers

Positionwise Nature of Transformer Feed-Forward Networks

MLP of the Vision Transformer Encoder
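
One of the questions in this list asks what happens when the activation between the FFN's two linear transformations is omitted. A minimal sketch with hypothetical toy weights shows the underlying fact: without the activation, (h W1 + b1) W2 + b2 equals h (W1 W2) + (b1 W2 + b2), so the two layers collapse into a single affine map and the extra layer adds no expressive power. The helper names `matmul`, `affine`, `two_linear`, and `one_linear` are illustrative only.

```python
# Two linear layers with no activation between them collapse into one affine map.

def matmul(A, B):
    # Plain matrix product A @ B for nested lists.
    return [[sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]

def affine(h, W, b):
    # Row vector h times W, plus bias b.
    return [sum(h[i] * W[i][j] for i in range(len(h))) + b[j] for j in range(len(b))]

# Hypothetical toy weights: model dimension 2, hidden size 3.
W1 = [[1.0, 2.0, 0.0], [0.0, -1.0, 3.0]]
b1 = [1.0, 0.0, -1.0]
W2 = [[2.0, 0.0], [1.0, 1.0], [0.0, -1.0]]
b2 = [0.5, 0.5]

def two_linear(h):
    # Linear -> (no activation) -> linear.
    return affine(affine(h, W1, b1), W2, b2)

# Equivalent single layer: W = W1 W2 and b = b1 W2 + b2.
W = matmul(W1, W2)
b = affine(b1, W2, b2)

def one_linear(h):
    return affine(h, W, b)

for h in ([1.0, 2.0], [-3.0, 0.5]):
    print(two_linear(h), one_linear(h))  # both columns match
```

Inserting a non-linearity such as ReLU between the two layers breaks this identity, which is why the standard FFN places its activation there.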