Random Initialization

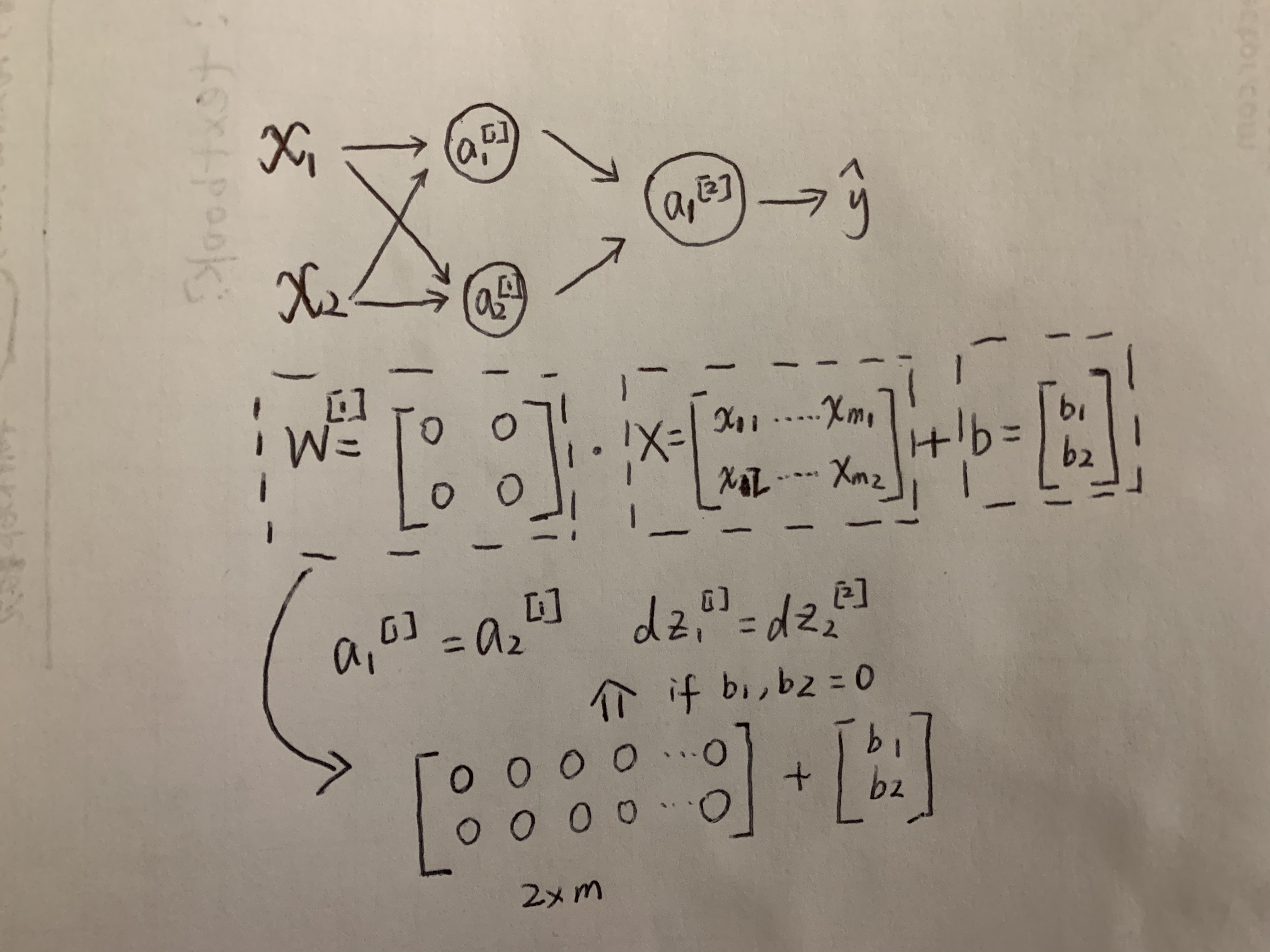

In a neural network, you shouldn't initialize all the weights to zero. Why is that? Let's take an example: you have two input features, x1 and x2, and two hidden units, so this layer's weight matrix W is a 2 by 2 matrix. If we initialize every weight to 0, as shown in the image, both hidden units receive the same input and compute the same activation, a1 = a2. During backpropagation they also receive the same gradient, dz1 = dz2, so each update changes both rows of W by the same amount. The two units therefore keep computing exactly the same function, no matter how long you train or how many layers you have, and the layer behaves as if it had only one unit.
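The symmetry argument above can be checked numerically. The following is a minimal sketch, not taken from the original note: it assumes a 2-2-1 network with sigmoid activations, a cross-entropy loss, and a tiny hand-made OR dataset, and the function name `train_zero_init` is hypothetical.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_zero_init(X, Y, steps=100, lr=0.5):
    """Gradient descent on a 2-2-1 network whose parameters all start at zero.

    Assumed setup for illustration: sigmoid activations, cross-entropy loss.
    """
    n_x, m = X.shape
    W1, b1 = np.zeros((2, n_x)), np.zeros((2, 1))
    W2, b2 = np.zeros((1, 2)), np.zeros((1, 1))
    for _ in range(steps):
        # Forward pass
        A1 = sigmoid(W1 @ X + b1)
        A2 = sigmoid(W2 @ A1 + b2)
        # Backward pass
        dZ2 = A2 - Y
        dW2 = dZ2 @ A1.T / m
        db2 = dZ2.mean(axis=1, keepdims=True)
        dZ1 = (W2.T @ dZ2) * A1 * (1 - A1)
        dW1 = dZ1 @ X.T / m
        db1 = dZ1.mean(axis=1, keepdims=True)
        # Parameter update
        W1 -= lr * dW1
        b1 -= lr * db1
        W2 -= lr * dW2
        b2 -= lr * db2
    return W1, W2

# Toy data (an assumption): the four inputs of a logical OR
X = np.array([[0.0, 0.0, 1.0, 1.0],
              [0.0, 1.0, 0.0, 1.0]])
Y = np.array([[0.0, 1.0, 1.0, 1.0]])

W1, W2 = train_zero_init(X, Y)
# Each row of W1 holds the weights of one hidden unit
rows_identical = np.allclose(W1[0], W1[1])
print(W1, rows_identical)
```

The weights do move away from zero during training, but both rows of W1 receive identical updates at every step, so the two hidden units never become different from each other.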

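To solve this problem, we can initialize the weights randomly, for example with np.random.randn(m, n) * 0.01, where (m, n) is the shape of the weight matrix. The random values break the symmetry between the hidden units. Multiplying by 0.01 keeps the initial weights small, so that activations such as tanh or sigmoid start in the region where their gradients are not close to zero. The biases can still be initialized to zero.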
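As a minimal sketch of this initialization for a network with one hidden layer: the function name `initialize_parameters`, its arguments, and the dictionary layout are assumptions made for illustration. It uses NumPy's `default_rng(...).standard_normal`, which draws from the same standard normal distribution as `np.random.randn`.

```python
import numpy as np

def initialize_parameters(n_x, n_h, n_y, scale=0.01, seed=None):
    """Small random weights and zero biases for an n_x -> n_h -> n_y network.

    All names here are hypothetical; `scale` is the 0.01 factor from the text.
    """
    rng = np.random.default_rng(seed)
    return {
        "W1": rng.standard_normal((n_h, n_x)) * scale,  # breaks symmetry
        "b1": np.zeros((n_h, 1)),                       # zeros are fine for biases
        "W2": rng.standard_normal((n_y, n_h)) * scale,
        "b2": np.zeros((n_y, 1)),
    }

params = initialize_parameters(n_x=2, n_h=2, n_y=1, seed=0)
print(params["W1"])
```

Because each row of W1 now starts with different values, the two hidden units compute different activations and receive different gradients from the first iteration on.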

Updated 2021-11-19

Tags

Data Science