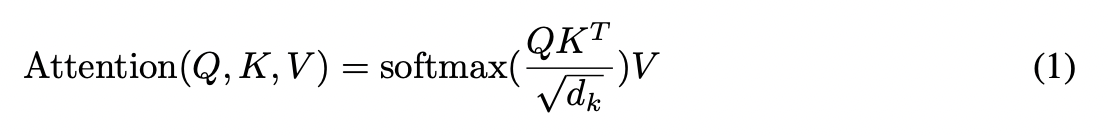

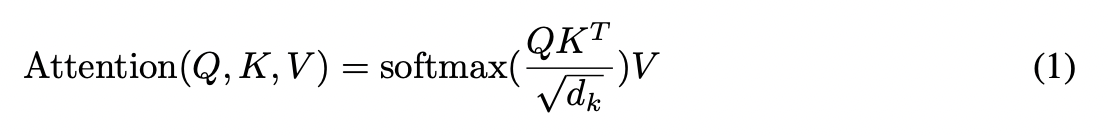

Self-Attention of Transformer

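In the encoder, the input first passes through a module called ‘self-attention’, which produces a weighted feature x: each position's output is a weighted combination of information from every position in the input sequence, with the weights computed from how strongly the positions relate to one another.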
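A minimal sketch of single-head scaled dot-product self-attention, the form used in the original Transformer, may help make the "weighted feature" concrete. Each position's query is compared with every position's key, the scores are divided by √d_k and normalized with softmax, and the resulting weights average the value vectors. The function names and the projection matrices `w_q`, `w_k`, `w_v` are assumptions for illustration; in a real model the projections are learned, several heads run in parallel, and a tensor library would replace these list-based helpers.

```python
import math

# Hypothetical helpers for illustration: plain-Python matrices as lists of rows.

def matmul(a, b):
    """Multiply matrix a (n x m) by matrix b (m x p)."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]

def transpose(m):
    """Swap rows and columns of matrix m."""
    return [list(row) for row in zip(*m)]

def softmax(row):
    """Normalize a list of scores into weights that sum to 1."""
    mx = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - mx) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x, w_q, w_k, w_v):
    """Return (output, weights) for input x of shape (seq_len x d_model).

    Assumption: w_q, w_k, w_v are given projection matrices; in a
    trained Transformer they are learned parameters.
    """
    q = matmul(x, w_q)  # queries
    k = matmul(x, w_k)  # keys
    v = matmul(x, w_v)  # values
    d_k = len(k[0])
    # Similarity of every query with every key, scaled by sqrt(d_k).
    scores = matmul(q, transpose(k))
    scaled = [[s / math.sqrt(d_k) for s in row] for row in scores]
    # One row of attention weights per position.
    weights = [softmax(row) for row in scaled]
    # Each output row is a weighted sum of the value vectors.
    return matmul(weights, v), weights

if __name__ == "__main__":
    x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    identity = [[1.0, 0.0], [0.0, 1.0]]
    out, w = self_attention(x, identity, identity, identity)
    for row in w:
        print([round(p, 3) for p in row])
```

With identity projections, the third token `[1.0, 1.0]` has the largest dot product with itself, so its own attention weight is the largest in its row. Every row of weights sums to 1, which is what makes each output a weighted average of the value vectors.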

Updated 2026-04-15

Tags

Data Science