Learn Before

Softmax Regression (Activation)

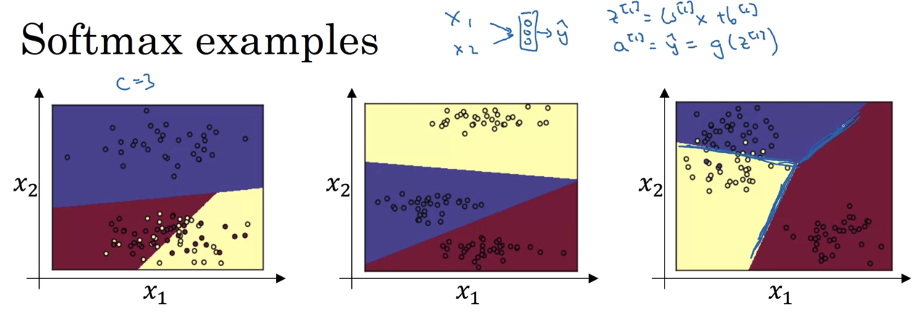

Softmax regression is the generalization of logistic regression to multiple classes. In other words, each data point belongs to one of multiple classes (rather than just two options, as is the case for logistic regression). Hence softmax regression is also called multi-class logistic regression or multinomial logistic regression.
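As a rough illustration of the relationship described above, the following sketch computes softmax probabilities in plain Python and checks that the two-class case reduces to the logistic sigmoid. The function names `softmax` and `sigmoid` and the example scores are illustrative assumptions, not part of the original node. Subtracting the maximum score before exponentiating is a common stability trick that does not change the result.

```python
import math

def softmax(logits):
    """Map a list of raw scores to probabilities that sum to 1."""
    # Shifting by the max leaves the output unchanged but avoids overflow in exp.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sigmoid(z):
    """Logistic function used by binary logistic regression."""
    return 1.0 / (1.0 + math.exp(-z))

# Two classes: softmax over [z, 0] gives the same probability as sigmoid(z).
z = 1.5
two_class = softmax([z, 0.0])

# Three classes: each score gets its own probability, and they sum to 1.
probs = softmax([2.0, 1.0, 0.1])
```

Here `two_class[0]` agrees with `sigmoid(z)` up to floating-point error, which is the sense in which logistic regression is the two-class special case of softmax regression.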

Tags

Data Science

Related

OLS fitting cannot be used for classification

Using LDA vs Logistic Regression

Logistic Regression Videos

Binary Classification Metrics

Hypothesis

Hypothesis function

Logistic Regression Formulation

Logistic Regression Mathematical Equation

Logistic Regression - Regularization

Linear Regression vs Logistic Regression

Softmax Regression (Activation)

Pros and Cons of Softmax Function

Parameterized Softmax Layer

Plackett-Luce Selection Probability Formula

Conditional Probability Formula for Autoregressive Models using Softmax

A neural network's final layer produces the raw output scores (logits) [2.0, 1.0, 0.1] for three possible classes. To convert these scores into class probabilities, a function is applied that first exponentiates each score and then normalizes these new values by dividing each by their sum. What is the resulting probability distribution? (Values are rounded to three decimal places.)

A function is used to convert a vector of raw, unnormalized scores z = [z_1, z_2, ..., z_K] into a probability distribution. This function operates by first applying the standard exponential function to each score and then normalizing these new values by dividing each by their sum. If a constant value C is added to every score in the input vector z, resulting in a new vector z' = [z_1+C, z_2+C, ..., z_K+C], how will the resulting output probability distribution be affected?

Consider two input vectors of raw scores (logits) for a 3-class classification problem: Vector A = [1, 2, 3] and Vector B = [1, 5, 10]. Both vectors are passed through a function that exponentiates each score and then normalizes the results by dividing by their sum. How will the resulting probability distribution for Vector B compare to the one for Vector A?

You’re reviewing an internal evaluation script tha...

Your team is building an internal tool that ranks ...

You’re reviewing an internal LLM evaluation pipeli...

Reconciling Training Log-Likelihood with Inference-Time Sequence Selection

Explaining a Counterintuitive Decoding Outcome Using Softmax, Next-Token Conditionals, and Sequence Log-Probability

Diagnosing a “High-Confidence Wrong Token” Bug in Autoregressive Scoring

Investigating a Production Scoring Bug: Softmax Normalization vs. Autoregressive Sequence Log-Probability

Design a Correct Sequence-Scoring Function for Autoregressive LLM Outputs

Root-Cause Analysis: Why a “More Likely” Token-by-Token Completion Loses on Total Sequence Score

Auditing a Candidate Completion Using Softmax Next-Token Probabilities and Autoregressive Log-Probability

Derivative of Softmax Cross-Entropy Loss with Respect to Logits

Numerical Overflow in Softmax Function

Learn After

What is Softmax Regression and How is it Related to Logistic Regression?

The Softmax Function, Simplified

Output Layer of Softmax Regression

Implementation of Softmax Regression Using Numpy

Implementation of Softmax Regression Using Tensorflow

Cross-Entropy Loss for Softmax Regression

Vectorized Minibatch Softmax Regression