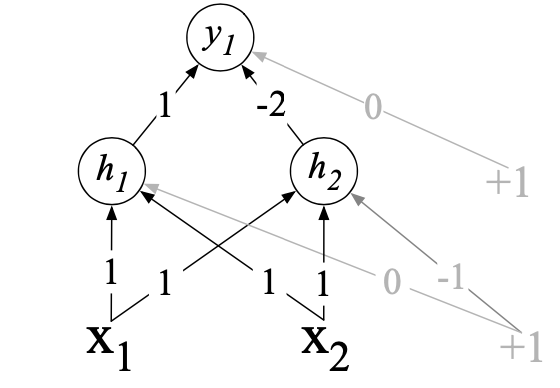

Solution to the XOR Problem: Neural Networks

While the XOR function cannot be computed by a single perceptron, it can be computed by a layered network of units, for example one with two layers of ReLU-based units. In the figure, the numbers on the arrows are the weights for each unit, and the intermediate units form a hidden layer, which re-represents the input in a new space where the two classes are linearly separable.
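To make the two-layer idea concrete, here is a minimal sketch of a ReLU network that computes XOR. The specific weights and biases, and the names `relu`, `hidden_layer`, and `xor_net`, are assumptions chosen for illustration (a commonly used textbook solution); they are not read off the figure and may differ from it.

```python
def relu(z):
    """Rectified linear unit: passes positive values, clips negatives to 0."""
    return max(0.0, z)


# Illustrative parameters (an assumption, not taken from the figure).
HIDDEN_WEIGHTS = [(1.0, 1.0), (1.0, 1.0)]  # one (w_x1, w_x2) pair per hidden unit
HIDDEN_BIASES = [0.0, -1.0]
OUTPUT_WEIGHTS = (1.0, -2.0)
OUTPUT_BIAS = 0.0


def hidden_layer(x1, x2):
    """Map the input to the hidden representation (h1, h2)."""
    return tuple(
        relu(w1 * x1 + w2 * x2 + b)
        for (w1, w2), b in zip(HIDDEN_WEIGHTS, HIDDEN_BIASES)
    )


def xor_net(x1, x2):
    """Linear output unit applied to the hidden representation."""
    h = hidden_layer(x1, x2)
    return sum(w * hi for w, hi in zip(OUTPUT_WEIGHTS, h)) + OUTPUT_BIAS


for x1 in (0, 1):
    for x2 in (0, 1):
        print((x1, x2), "hidden:", hidden_layer(x1, x2), "output:", xor_net(x1, x2))
```

With these assumed weights, the inputs (0, 1) and (1, 0) both map to the same hidden point (1, 0), while (0, 0) and (1, 1) map to (0, 0) and (2, 1). In that hidden space a single line can separate the two classes, which is what the output unit exploits.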

Note that the solution to the XOR problem requires a network of units with non-linear activation functions. This is because a network formed by many layers of purely linear units can always be reduced to a single layer of linear units with appropriate weights.
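The reduction of stacked linear layers can be checked numerically. The sketch below, using arbitrary example weights (`W1`, `b1`, `W2`, `b2` are assumptions made up for illustration), composes two linear layers and compares the result with a single layer whose weights are `W2 · W1` and whose bias is `W2 · b1 + b2`.

```python
def matvec(M, v):
    """Matrix-vector product for lists of lists."""
    return [sum(m * x for m, x in zip(row, v)) for row in M]


def matmul(A, B):
    """Matrix-matrix product for lists of lists."""
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]


def add(u, v):
    """Element-wise vector sum."""
    return [a + b for a, b in zip(u, v)]


# Arbitrary example parameters (assumed values, for illustration only).
W1 = [[1.0, 2.0], [-1.0, 0.5]]
b1 = [0.5, -1.0]
W2 = [[2.0, -3.0]]
b2 = [0.25]


def two_linear_layers(x):
    """Two stacked layers with no non-linearity in between."""
    return add(matvec(W2, add(matvec(W1, x), b1)), b2)


# Equivalent single layer: W = W2 * W1, b = W2 * b1 + b2.
W = matmul(W2, W1)
b = add(matvec(W2, b1), b2)


def one_linear_layer(x):
    return add(matvec(W, x), b)


for x in ([0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]):
    print(x, two_linear_layers(x), one_linear_layer(x))
```

Because the collapsed network is itself a single linear unit, it inherits the same limitation as a single perceptron and cannot separate the XOR classes; the non-linearity between layers is what breaks this equivalence.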

Updated 2021-10-23

Tags

Data Science