Supertagging

Supertagging is the counterpart of part-of-speech tagging for highly lexicalized grammar frameworks, where the assigned tags often dictate much of the derivation for a sentence. As with traditional part-of-speech tagging, the standard approach to building a CCG supertagger is to use supervised machine learning to train a sequence labeler on hand-annotated data. To find the most likely sequence of tags given a sentence, it is most common to use a neural sequence model, either an RNN or a Transformer. The model returns a probability distribution over the possible supertags for each word in the input.
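As a minimal sketch of that final step, the snippet below turns per-word scores into a probability distribution over supertags with a softmax. The three-tag inventory, the example sentence, and the score values are all made up for illustration; in a real supertagger the scores would come from the RNN or Transformer encoder, and the tag set would contain hundreds of categories.

```python
import math

# Hypothetical, tiny supertag inventory (real CCG tag sets are far larger).
SUPERTAGS = ["N/N", "NP", "(S\\NP)/NP"]

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def tag_distributions(scores_per_word):
    """Map each word's score vector to a {supertag: probability} dict."""
    return [dict(zip(SUPERTAGS, softmax(row))) for row in scores_per_word]

# Made-up scores standing in for the output of a neural encoder.
sentence = ["United", "serves", "Denver"]
scores = [
    [1.0, 2.0, -1.0],   # United
    [-1.0, 0.0, 3.0],   # serves
    [0.5, 2.5, -1.0],   # Denver
]

dists = tag_distributions(scores)
for word, dist in zip(sentence, dists):
    best = max(dist, key=dist.get)
    print(f"{word}: most probable supertag {best} (p={dist[best]:.3f})")
```

Each dictionary in `dists` corresponds to one column of a word-by-supertag probability table.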

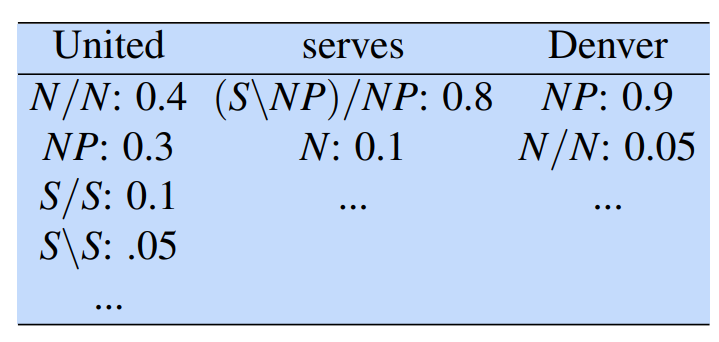

The accompanying table illustrates an example distribution for a simple sentence: each column gives the probability of each supertag for one word in the context of the input sentence. To get the probability of each possible word/tag pair, we need to sum the probabilities of all the supertag sequences that contain that tag at that position. Enumerating every sequence is exponential in the sentence length; the forward-backward algorithm, which is also used to train a conditional random field (CRF), computes the same sums efficiently.
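The summation described above can be sketched on a toy linear-chain model. The code below first computes the word/tag marginals by brute force, summing the scores of every tag sequence, and then computes them with forward-backward. The function names and the `start`, `trans`, and `emit` potentials are hypothetical values chosen for illustration, not parameters from a trained model.

```python
from itertools import product

def sequence_score(tags, start, trans, emit):
    """Unnormalized score of one complete tag sequence."""
    score = start[tags[0]] * emit[0][tags[0]]
    for t in range(1, len(tags)):
        score *= trans[tags[t - 1]][tags[t]] * emit[t][tags[t]]
    return score

def brute_force_marginals(start, trans, emit):
    """Sum over every tag sequence; cost grows as K**T."""
    T, K = len(emit), len(start)
    totals = [[0.0] * K for _ in range(T)]
    Z = 0.0
    for tags in product(range(K), repeat=T):
        s = sequence_score(tags, start, trans, emit)
        Z += s
        for t, k in enumerate(tags):
            totals[t][k] += s  # this sequence has tag k at position t
    return [[v / Z for v in row] for row in totals]

def forward_backward_marginals(start, trans, emit):
    """Same marginals in O(T * K**2) time via dynamic programming."""
    T, K = len(emit), len(start)
    alpha = [[0.0] * K for _ in range(T)]  # scores of prefixes ending in k
    beta = [[1.0] * K for _ in range(T)]   # scores of suffixes following k
    for k in range(K):
        alpha[0][k] = start[k] * emit[0][k]
    for t in range(1, T):
        for k in range(K):
            alpha[t][k] = emit[t][k] * sum(
                alpha[t - 1][j] * trans[j][k] for j in range(K))
    for t in range(T - 2, -1, -1):
        for j in range(K):
            beta[t][j] = sum(
                trans[j][k] * emit[t + 1][k] * beta[t + 1][k] for k in range(K))
    Z = sum(alpha[T - 1])  # total score of all sequences
    return [[alpha[t][k] * beta[t][k] / Z for k in range(K)] for t in range(T)]

# Made-up potentials for a 3-word sentence and 3 possible tags.
start = [0.6, 0.3, 0.1]
trans = [[0.1, 0.6, 0.3], [0.2, 0.2, 0.6], [0.5, 0.4, 0.1]]
emit = [[0.7, 0.2, 0.1], [0.1, 0.1, 0.8], [0.3, 0.6, 0.1]]

marginals = forward_backward_marginals(start, trans, emit)
for t, row in enumerate(marginals):
    print(t, [round(p, 3) for p in row])
```

Each row of `marginals` is the distribution over tags for one word, so the rows play the role of the table's columns. This sketch multiplies raw probabilities for readability; practical implementations typically work in log space or rescale `alpha` and `beta` to avoid underflow on long sentences.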
