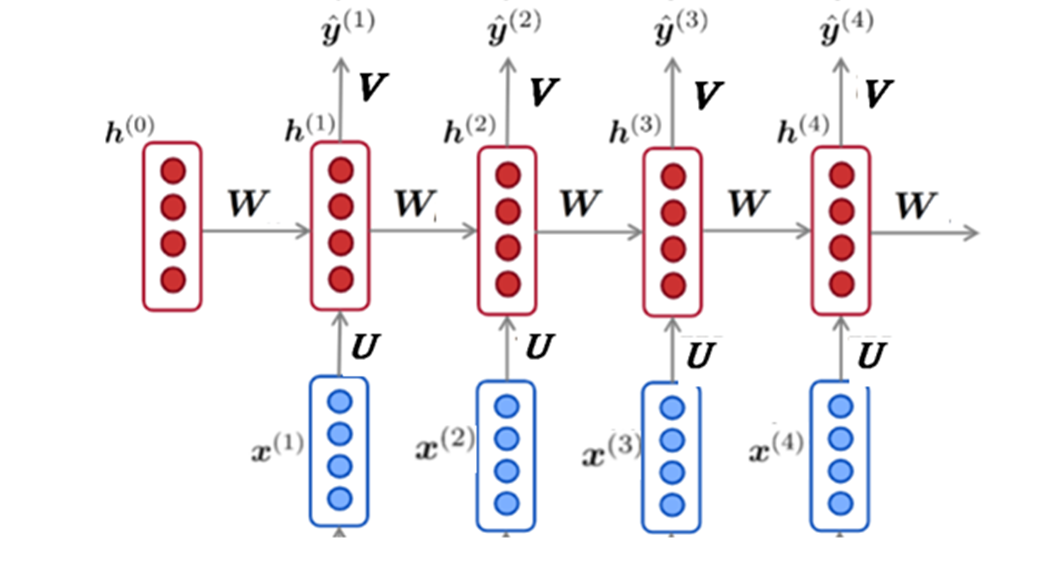

Unrolled RNN Structure

The network processes a sequence of inputs over time: X0, X1, X2, ..., Xt. For example, the inputs could be the words of a sentence. In the unrolled view, the network is drawn as one copy of the same layer of neurons for each input, and every copy shares the same weights.

At each stage in the sequence, each layer of neurons generates two things:

- An output: the model’s prediction for the next element in the sequence.

- A hidden state: the network’s “short-term memory”. The hidden state is computed by an activation function that takes as its input both the hidden state of the previous step and the input of the current step. This lets the model carry information forward from earlier steps to the current step.
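
The two quantities above can be sketched in a few lines of plain Python. This is only a minimal illustration, not a reference implementation. The names `matvec`, `rnn_step`, `rnn_forward`, `W_xh`, `W_hh`, and `W_hy` are hypothetical, and so are the toy weights. It also assumes `tanh` as the activation, a zero initial hidden state, and no bias terms.

```python
import math

def matvec(W, v):
    """Multiply matrix W (a list of rows) by vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def rnn_step(x_t, h_prev, W_xh, W_hh, W_hy):
    """One time step: return (output, new hidden state)."""
    # Hidden state: activation applied to the current input
    # combined with the previous hidden state.
    pre = [a + b for a, b in zip(matvec(W_xh, x_t), matvec(W_hh, h_prev))]
    h_t = [math.tanh(p) for p in pre]
    # Output: computed from the new hidden state (raw scores here).
    y_t = matvec(W_hy, h_t)
    return y_t, h_t

def rnn_forward(inputs, W_xh, W_hh, W_hy):
    """Unroll the network over the whole input sequence."""
    h = [0.0] * len(W_hh)  # assumed zero initial hidden state
    outputs = []
    for x_t in inputs:  # the same weights are reused at every step
        y_t, h = rnn_step(x_t, h, W_xh, W_hh, W_hy)
        outputs.append(y_t)
    return outputs, h

# Toy example (made-up numbers): 2-d inputs, 2 hidden units, 1 output.
W_xh = [[0.5, -0.3], [0.1, 0.8]]
W_hh = [[0.2, 0.0], [0.0, 0.2]]
W_hy = [[1.0, -1.0]]
inputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

outputs, final_h = rnn_forward(inputs, W_xh, W_hh, W_hy)
```

The loop in `rnn_forward` corresponds to the unrolled picture: each iteration is one step of the sequence, and `h` is the only information passed from one step to the next.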

Updated 2020-10-03

Tags

Data Science