Concept

Variants of attention

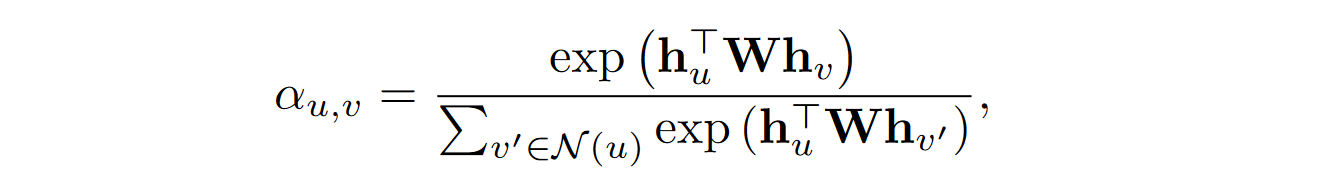

GAT-style attention is known to work well on graph data, but in principle any standard attention model from the broader deep learning literature can be used in its place. Popular variants include bilinear attention, which scores a node and its neighbor through a learned matrix, and attention layers that compute the score with an MLP. In each case the raw scores are normalized over a node's neighbors to give the attention weights.
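To make the two variants concrete, here is a minimal NumPy sketch for a single node `h_u` and its neighbors. The function names, the weight shapes, the single hidden layer, and the `tanh` nonlinearity are illustrative assumptions, not a reference implementation from any library.

```python
import numpy as np

def softmax(scores):
    # Subtract the max for numerical stability before exponentiating.
    exp = np.exp(scores - np.max(scores))
    return exp / exp.sum()

def bilinear_attention(h_u, h_neighbors, W):
    """Attention weights from the bilinear score h_u^T W h_v.

    h_u: (d,) embedding of the target node
    h_neighbors: (n, d) embeddings of its n neighbors
    W: (d, d) learned matrix (assumed shape)
    """
    scores = h_neighbors @ (h_u @ W)  # (n,), one score per neighbor
    return softmax(scores)

def mlp_attention(h_u, h_neighbors, W1, b1, w2):
    """Attention weights from a one-hidden-layer MLP on [h_u ; h_v].

    W1: (2d, k), b1: (k,), w2: (k,) are hypothetical learned parameters.
    """
    n = h_neighbors.shape[0]
    # Pair the target node with every neighbor by concatenation.
    pairs = np.concatenate([np.tile(h_u, (n, 1)), h_neighbors], axis=1)
    hidden = np.tanh(pairs @ W1 + b1)  # (n, k)
    scores = hidden @ w2               # (n,)
    return softmax(scores)

def aggregate(weights, h_neighbors):
    # Attention-weighted sum of neighbor embeddings, shape (d,).
    return weights @ h_neighbors
```

Either function returns one weight per neighbor, and the weights sum to 1, so `aggregate` can be swapped in wherever a plain sum or mean over neighbors would otherwise be used.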

Adding attention is a useful strategy for increasing the representational capacity of a GNN model, especially when prior knowledge suggests that some neighbors are more informative than others.

Updated 2022-07-02

Tags

Data Science