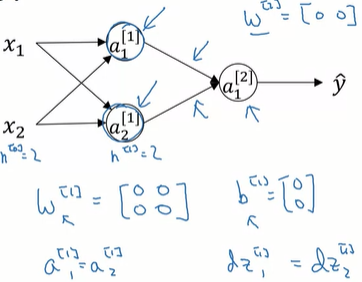

Zero Weight Initialization in Feed-Forward Networks

If we initialize every weight matrix as a matrix of zeros, all neurons in a given layer compute the same output and therefore receive exactly the same gradient during backpropagation. No matter how long the network is trained, gradient descent will update these parameters identically, preventing the neurons in a layer from learning distinct features. This failure to differentiate neuron weights is known as the symmetry problem. Furthermore, the backward pass multiplies the error signal by the weight matrices, so zero weights mean the gradients propagated to earlier layers are multiplied by zero, which immediately contributes to the vanishing gradient problem.
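The effect can be checked numerically. The following is a minimal sketch rather than a reference implementation: it assumes a single sigmoid hidden layer, a linear output, a squared-error loss, and one training example, and the function name `train_zero_init`, the layer sizes, and the learning rate are all hypothetical choices made for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_zero_init(x, y, n_hidden=3, lr=0.5, steps=20):
    """Train a tiny one-hidden-layer network starting from all-zero parameters.

    Returns the final hidden weights W1, the final output weights W2,
    and the gradient of W1 computed at the very first step.
    """
    n_in = len(x)
    W1 = [[0.0] * n_in for _ in range(n_hidden)]  # one row per hidden neuron
    b1 = [0.0] * n_hidden
    W2 = [0.0] * n_hidden                         # output weights
    b2 = 0.0
    first_grad_W1 = None

    for step in range(steps):
        # Forward pass
        h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
             for row, b in zip(W1, b1)]
        y_hat = sum(w * hj for w, hj in zip(W2, h)) + b2

        # Backward pass for the loss 0.5 * (y_hat - y)^2
        d_out = y_hat - y
        grad_W2 = [d_out * hj for hj in h]
        # The error reaching hidden neuron j is scaled by W2[j]
        d_hidden = [d_out * W2[j] * h[j] * (1.0 - h[j]) for j in range(n_hidden)]
        grad_W1 = [[d_hidden[j] * xi for xi in x] for j in range(n_hidden)]
        if step == 0:
            first_grad_W1 = grad_W1

        # Gradient descent update
        W2 = [w - lr * g for w, g in zip(W2, grad_W2)]
        b2 -= lr * d_out
        W1 = [[w - lr * g for w, g in zip(row, g_row)]
              for row, g_row in zip(W1, grad_W1)]
        b1 = [b - lr * d for b, d in zip(b1, d_hidden)]

    return W1, W2, first_grad_W1


W1, W2, first_grad_W1 = train_zero_init(x=[1.0, -2.0], y=1.0)
print("first-step gradient of W1:", first_grad_W1)
print("hidden weights after training:", W1)
print("output weights after training:", W2)
```

In this sketch the first-step gradient of the hidden weights is zero, because the error signal is multiplied by the zero output weights. Once the output weights have moved away from zero the hidden weights begin to change, but every hidden neuron still receives the same update, so the rows of `W1` remain identical to one another and the hidden layer behaves like a single neuron.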
