Vanishing/exploding gradient

In a neural network with many layers or time steps, the gradient at an early layer is a product of terms contributed by all the later layers, which makes it inherently unstable. When those terms are mostly smaller than one in magnitude, the gradient shrinks toward zero, so the early layers are barely updated and learning stalls. This is the vanishing gradient. The exploding gradient is the opposite case: the terms are mostly larger than one in magnitude, the gradient grows very large, and the resulting gradient descent updates make the weights so large that the whole network becomes unstable and may overflow numerically.
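The effect of multiplying many terms can be seen in a minimal sketch. It assumes, purely for illustration, that every layer contributes the same scalar factor to the gradient; real networks multiply Jacobian matrices that differ from layer to layer. The helper name `gradient_magnitude` and the chosen factors are hypothetical.

```python
import math

def gradient_magnitude(factor: float, depth: int) -> float:
    """Product of `depth` identical per-layer factors.

    Simplifying assumption: each layer scales the backpropagated
    gradient by the same scalar `factor`.
    """
    return math.prod([factor] * depth)

depth = 50
vanishing = gradient_magnitude(0.5, depth)  # factors below 1 shrink the product
exploding = gradient_magnitude(1.5, depth)  # factors above 1 grow the product
stable = gradient_magnitude(1.0, depth)     # factors equal to 1 preserve it

print(f"factor 0.5 over {depth} layers: {vanishing:.3e}")
print(f"factor 1.5 over {depth} layers: {exploding:.3e}")
print(f"factor 1.0 over {depth} layers: {stable:.3e}")
```

Even modest deviations from one compound quickly with depth, which is why the gradient at early layers is so sensitive to the scale of the per-layer terms.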

Tags

Data Science

D2L

Dive into Deep Learning @ D2L

Related

Helpful Website for BPTT

Weight typing

Computational Graph of RNN Backpropagation Through Time

Gradient of RNN Objective with Respect to Output Weights

Teacher Forcing

Gradient Descent Reference

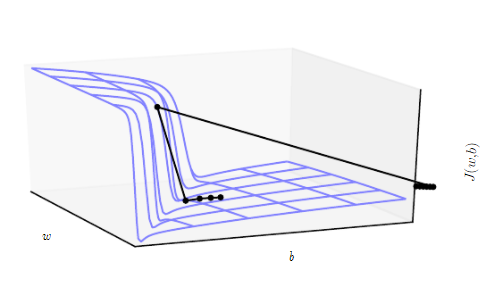

Linear Regression and Gradient Descent

Numerical Approximation of Gradients

Gradient Checking

(Batch) Gradient Descent (Deep Learning Optimization Algorithm)

Gradient Descent Explained

Why Gradient descent might fail?

A Chat with Andrew on MLOps: From Model-centric to Data-centric AI

Big Data to Good Data: Andrew Ng Urges ML Community To Be More Data-Centric and Less Model-Centric

MLOps: Data-centric and Model-centric approaches

Critical Points

First-order Optimization Algorithm

Method of Steepest Descent

Second-Order Gradient Methods

Gradient Descent Explanation

Gradient Descent Variants

Notes about gradient descent

Suppose you have built a neural network. You decide to initialize the weights and biases to be zero. Which of the following statements is true?

BERT Training Process

Objective Function

Distributed Training

The Problem with Constant Initialization

Objective Function Change Bounds in Gradient Descent

One-Dimensional Gradient Descent

Multivariate Gradient Descent

Second-Order Optimization Algorithm

Average Objective Function in Deep Learning

Accelerated Gradient Methods

Effect of Depth for Neural Networks

Measuring the depth of the model

Example of Weight Initialization

Symmetry Breaking in Deep Learning

Transfer Learning in Deep Learning

Multi-task Learning in Deep Learning

Variance of Layer Output in Forward Propagation

Default Random Initialization

Xavier Initialization

Built-in Gaussian Parameter Initialization

Constant Parameter Initialization

Block-Specific Parameter Initialization

Forced Parameter Reinitialization

Custom Parameter Initialization

Direct Parameter Assignment

Lazy Parameter Initialization

How to Initialize Weights to Prevent Vanishing/Exploding Gradients

Local Minimum

Saddle Point

Learn After

Solutions for vanishing/exploding gradient

A Gentle Introduction to Exploding Gradients in Neural Networks

Zero Weight Initialization in Feed-Forward Networks

Impact of Exploding Gradients on Model Training

Vanishing Gradient of the Tanh Activation Function

Reparametrization to Mitigate Stalling Optimization

Mathematical Mechanism of Vanishing and Exploding Gradients in Recurrent Neural Networks