Effect of Depth for Neural Networks

A feedforward network with a single hidden layer is sufficient to represent any function, but that layer may be infeasibly large and may fail to learn and generalize correctly. In many circumstances, using deeper models can reduce the number of units required to represent the desired function and can reduce generalization error.
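The claim about unit counts can be illustrated with a toy construction that is not part of the original text: a "tent" function built from two ReLU units, composed with itself several times. The function names below (`tent_layer`, `deep_sawtooth`, `count_linear_pieces`) are hypothetical, and the sketch assumes a scalar input on [0, 1]. Each extra layer doubles the number of linear pieces, whereas a one-hidden-layer ReLU network with scalar input and n units can produce at most n + 1 pieces.

```python
def relu(z):
    return max(z, 0.0)

def tent_layer(x):
    # One hidden layer with two ReLU units (illustrative assumption):
    # maps [0, 1] onto [0, 1], rising on [0, 0.5] and falling on [0.5, 1].
    return 2.0 * relu(x) - 4.0 * relu(x - 0.5)

def deep_sawtooth(x, depth):
    # Stack `depth` copies of the two-unit layer.
    for _ in range(depth):
        x = tent_layer(x)
    return x

def count_linear_pieces(depth):
    # Sample on a dyadic grid fine enough that every breakpoint of the
    # composed function falls exactly on a grid point, then count slope changes.
    n = 2 ** (depth + 2)
    xs = [i / n for i in range(n + 1)]
    ys = [deep_sawtooth(x, depth) for x in xs]
    slopes = [(ys[i + 1] - ys[i]) * n for i in range(n)]
    return 1 + sum(1 for a, b in zip(slopes, slopes[1:]) if a != b)

for depth in (1, 2, 3, 4):
    pieces = count_linear_pieces(depth)
    deep_units = 2 * depth          # two ReLU units per layer
    shallow_units = pieces - 1      # lower bound for a single hidden layer
    print(depth, deep_units, pieces, shallow_units)
```

In this sketch the deep network's unit count grows linearly with depth, while the lower bound for a single hidden layer representing the same function grows exponentially. This is one contrived case, not evidence that depth helps on every task.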

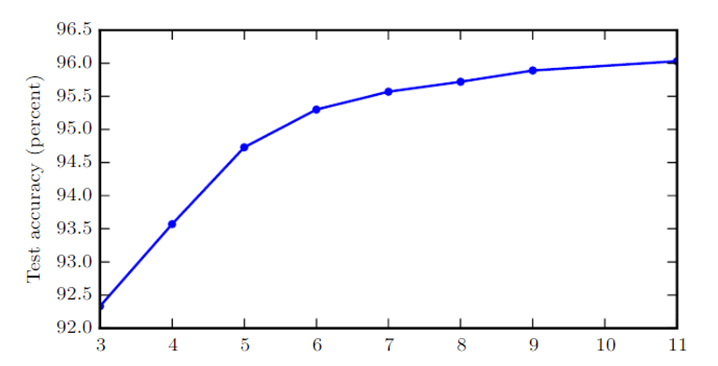

As the figure shows, deeper networks generalize better when used to transcribe multidigit numbers from photographs of addresses (data from Goodfellow et al., 2014d): test set accuracy increases consistently with depth.
