Sparsity of Connections in Convolutional Neural Networks

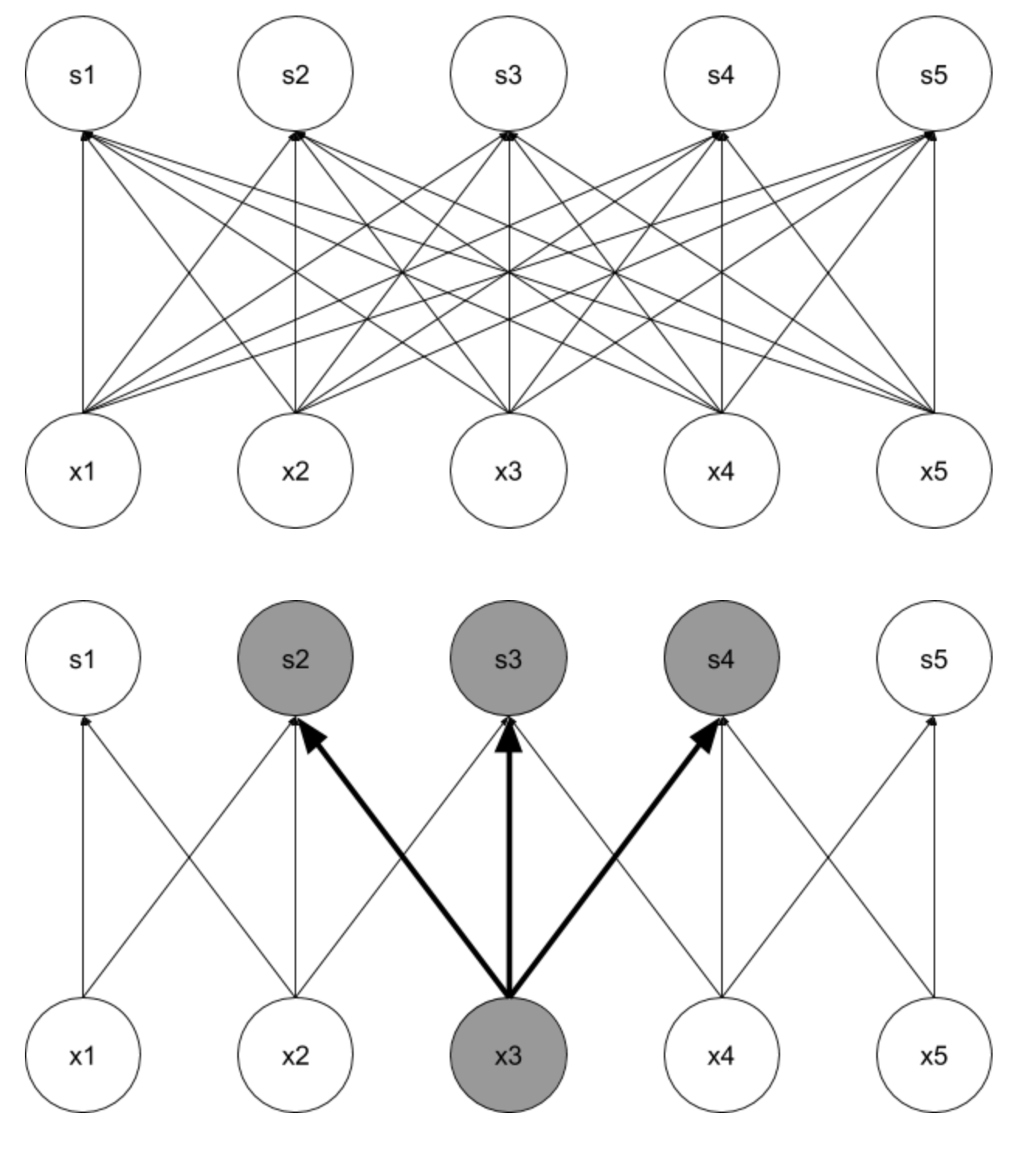

In a fully connected feed-forward neural network, forward propagation is a dense matrix multiplication, so every element of the output depends on every element of the input. Now imagine the input is a 500 x 500 image: with three color channels, the input has 3 x 500 x 500 = 750,000 dimensions. That number is only one side of the first layer's weight matrix, so the layer holds 750,000 weights for every output unit, which quickly adds up to a very large number of parameters.
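To make the arithmetic concrete, here is a minimal sketch of the parameter count for such a first layer. The hidden size of 1,000 units and the helper `dense_param_count` are assumptions chosen for illustration; the text above does not specify a layer width.

```python
def dense_param_count(n_inputs, n_outputs):
    """Weights plus biases of one fully connected layer."""
    return n_inputs * n_outputs + n_outputs


# Input size from the text: three channels of a 500 x 500 image.
n_inputs = 3 * 500 * 500

# Hypothetical layer width, not taken from the article.
hidden_units = 1000

dense_params = dense_param_count(n_inputs, hidden_units)
print(f"inputs: {n_inputs:,}")
print(f"first-layer parameters: {dense_params:,}")
```

Under these assumptions the single layer already needs about 750 million parameters, and the count grows linearly with both the image size and the layer width.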

In contrast, in a convolutional layer each output value depends only on a small number of inputs, namely those covered by the kernel. This significantly reduces both the computation and the memory required, and makes it practical to work with very large input images.
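The sparse dependency can be checked directly. The sketch below uses a one-dimensional signal and a 3-element kernel for brevity (both are assumptions for illustration, as is the helper `conv1d_valid`): it changes a single input value and lists which outputs change as a result.

```python
def conv1d_valid(x, kernel):
    """Sliding-window weighted sum with no padding (the operation used in CNN layers)."""
    k = len(kernel)
    return [
        sum(x[i + j] * kernel[j] for j in range(k))
        for i in range(len(x) - k + 1)
    ]


kernel = [1.0, 2.0, 1.0]   # hypothetical 3-element kernel
x = [0.0] * 10             # hypothetical 10-element input

baseline = conv1d_valid(x, kernel)

# Perturb exactly one input element.
x_perturbed = list(x)
x_perturbed[5] = 1.0
perturbed = conv1d_valid(x_perturbed, kernel)

# Indices of outputs affected by the change to x[5].
changed = [i for i, (a, b) in enumerate(zip(baseline, perturbed)) if a != b]
print(changed)
```

Only the outputs whose window covers the perturbed position change; the number of affected outputs equals the kernel size, regardless of how long the input is. In a fully connected layer, the same perturbation would in general affect every output.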
