Depth and Width for Neural Networks

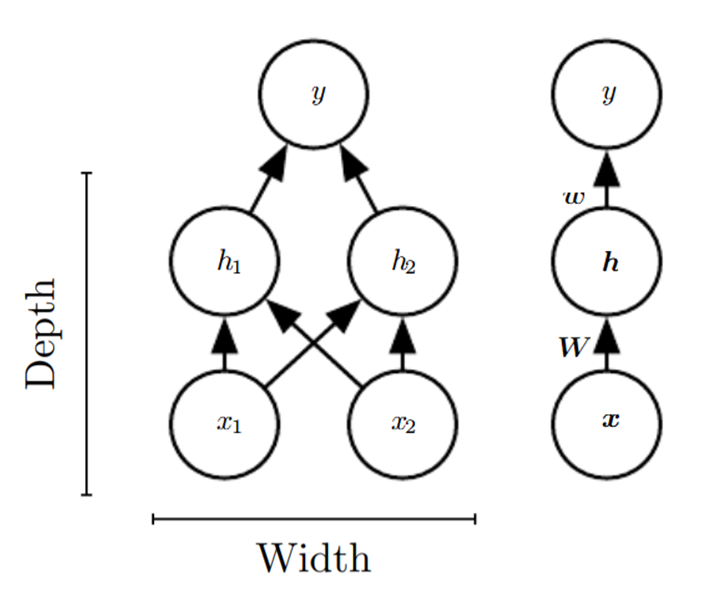

For a feedforward neural network, the depth of the network is the number of hidden layers plus one, because the output layer is also parameterized. The width of a layer is its dimensionality, that is, the number of units it contains; the hidden layers of a network need not all have the same width.
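As a minimal sketch of these definitions, the snippet below reads depth and hidden-layer widths off a list of layer sizes. The function name `depth_and_widths` and the convention `[input_dim, hidden_1, ..., hidden_k, output_dim]` are assumptions made for illustration, not notation from the text above.

```python
from typing import List, Tuple


def depth_and_widths(layer_sizes: List[int]) -> Tuple[int, List[int]]:
    """Return (depth, hidden widths) for a feedforward network.

    Assumed convention (hypothetical, for illustration only):
    layer_sizes = [input_dim, hidden_1, ..., hidden_k, output_dim].
    """
    if len(layer_sizes) < 2:
        raise ValueError("need at least an input and an output size")
    # Everything between the input and the output is a hidden layer.
    hidden_widths = layer_sizes[1:-1]
    # Depth counts the hidden layers plus the parameterized output layer.
    depth = len(hidden_widths) + 1
    return depth, hidden_widths


# Two hidden layers of width 16, followed by an output layer.
depth, widths = depth_and_widths([4, 16, 16, 3])
print(depth, widths)  # 3 [16, 16]
```

Under this convention, a network with no hidden layers, such as `[4, 3]`, has depth 1 and no hidden widths.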

The main architectural considerations for designing a neural network are choosing the depth of the network and the width of each layer.
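One way to see how the two choices interact is to count trainable parameters for different depth and width settings. The sketch below assumes fully connected layers with a bias term per unit; the helper `count_parameters` and the example layer sizes are hypothetical and chosen only for illustration.

```python
from typing import List


def count_parameters(layer_sizes: List[int]) -> int:
    """Count weights and biases of a fully connected feedforward network.

    Assumes layer_sizes = [input_dim, hidden_1, ..., hidden_k, output_dim]
    and one bias per unit in every non-input layer.
    """
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out  # weight matrix between consecutive layers
        total += n_out         # bias vector of the receiving layer
    return total


# A shallow, wide network: depth 2, one hidden layer of width 64.
shallow_wide = count_parameters([10, 64, 1])
# A deeper, narrower network: depth 4, three hidden layers of width 16.
deep_narrow = count_parameters([10, 16, 16, 16, 1])
print(shallow_wide, deep_narrow)  # 769 737
```

In this example the two architectures have a similar parameter budget, even though one spends it on width and the other on depth.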
