Layers of a Feed Forward Neural Network

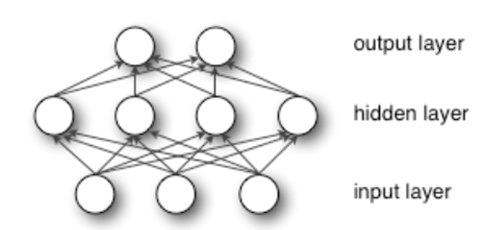

In the chain structure example f(x) = f^{(3)}(f^{(2)}(f^{(1)}(x))), f^{(1)} is called the first layer of the network, f^{(2)} is called the second layer, and so on. The final layer of a feedforward network is called the output layer.
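As a minimal sketch of this chain structure, assuming a three-layer network f(x) = f^{(3)}(f^{(2)}(f^{(1)}(x))), the layers can be written as plain functions and composed. The function names, weights, and activation choices below are hypothetical and serve only to illustrate the nesting.

```python
import math

def f1(x):
    # First layer: affine map followed by tanh (hypothetical weights).
    return [math.tanh(0.5 * x[0] - 0.25 * x[1]),
            math.tanh(0.1 * x[0] + 0.3 * x[1])]

def f2(h):
    # Second layer: affine map followed by a ReLU (hypothetical weights).
    return [max(0.0, h[0] + h[1]),
            max(0.0, h[0] - h[1])]

def f3(h):
    # Output layer: a linear combination producing a single scalar.
    return 2.0 * h[0] - 1.0 * h[1]

def f(x):
    # The full network is the composition of its layers, applied in order.
    return f3(f2(f1(x)))
```

Calling `f([1.0, 2.0])` passes the input through the first layer, then the second, and finally the output layer.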

During neural network training, we drive f(x) to match f^*(x). The training data provides us with noisy, approximate examples of f^*(x) evaluated at different training points. Each example x is accompanied by a label y ≈ f^*(x).

The training examples specify directly that the output layer must produce a value that is close to y at each point x. However, the behavior of the other layers is not directly specified by the training data. The training data does not say what each individual layer should do. Instead, the learning algorithm must decide how to use these layers to best implement an approximation of f^*. Because the training data does not show the desired output for each of these layers, they are called hidden layers.
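The following is a rough sketch of that idea, not a definitive training procedure. It assumes a tiny network with one hidden unit, a squared-error loss, and full-batch gradient descent. The data values, initial weights, and learning rate are all made up for illustration. Note that the loss compares only the output with the label y; the hidden activation h has no target of its own and is adjusted only through the output error.

```python
import math

# Hypothetical noisy training pairs (x, y), where y approximates f*(x).
data = [(-1.0, -1.4), (-0.5, -1.2), (0.5, 1.1), (1.0, 1.5)]

def forward(w1, w2, x):
    h = math.tanh(w1 * x)   # hidden layer: the data gives no target for h
    y_hat = w2 * h          # output layer: compared against the label y
    return h, y_hat

def mean_loss(w1, w2):
    # Squared error between the network output and the label only.
    return sum(0.5 * (forward(w1, w2, x)[1] - y) ** 2 for x, y in data) / len(data)

def train(w1, w2, lr=0.1, steps=200):
    for _ in range(steps):
        g1 = g2 = 0.0
        for x, y in data:
            h, y_hat = forward(w1, w2, x)
            err = y_hat - y                      # only the output has a target
            g2 += err * h                        # gradient for the output weight
            g1 += err * w2 * (1.0 - h * h) * x   # hidden weight learns via the output error
        w1 -= lr * g1 / len(data)
        w2 -= lr * g2 / len(data)
    return w1, w2

initial_loss = mean_loss(0.5, 0.5)
w1, w2 = train(0.5, 0.5)
final_loss = mean_loss(w1, w2)
```

Comparing `initial_loss` with `final_loss` shows whether the fit improved, even though nothing in `data` ever said what the hidden unit should compute.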

Tags

Data Science