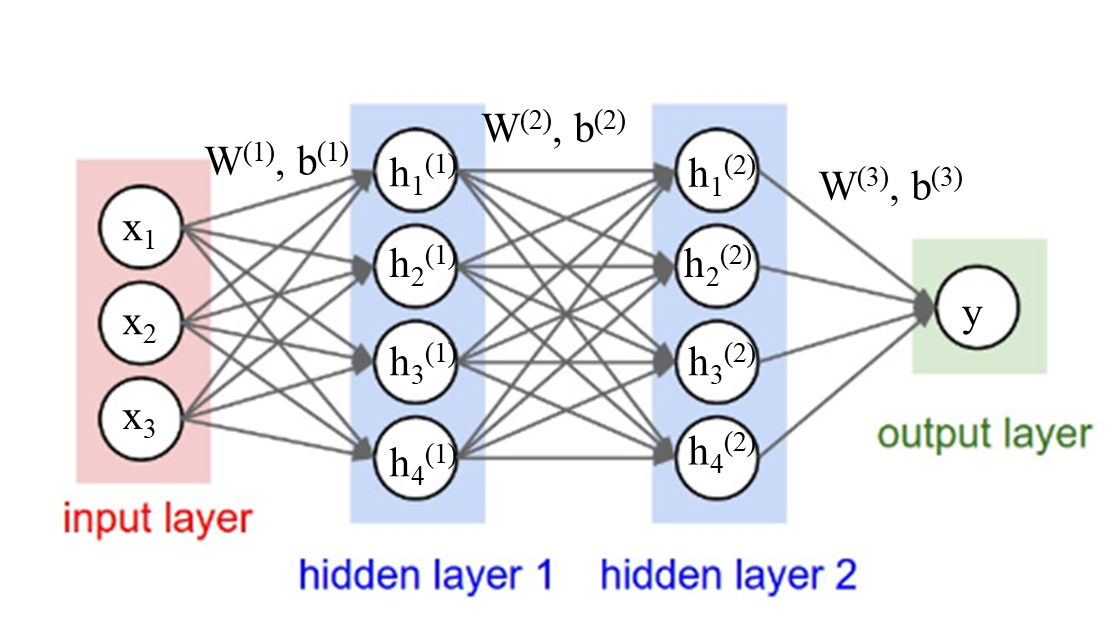

Connection between the Layers of Neural Network

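In the chain structure of a feedforward network, let the input example be $x$. The first layer is then given by

$$h^{(1)} = g^{(1)}\left(W^{(1)\top} x + b^{(1)}\right),$$

the second layer by

$$h^{(2)} = g^{(2)}\left(W^{(2)\top} h^{(1)} + b^{(2)}\right),$$

and so on. For the output layer,

$$\hat{y} = g^{(L)}\left(W^{(L)\top} h^{(L-1)} + b^{(L)}\right),$$

where the matrix $W^{(l)}$ is the weight parameter for layer $l$, $b^{(l)}$ is its bias vector, and $g^{(l)}$ is the activation function for layer $l$. Whether $W^{(l)}$ needs to be transposed depends on the shape convention chosen for the vectors and the weight matrix.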
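The chain structure described above can be sketched in a few lines of plain Python. This is only an illustrative sketch under stated assumptions: each weight matrix is stored with shape (inputs, outputs), so the layer computes the transposed product plus a bias, and the toy weights, the bias terms, and the helper names `affine_transposed` and `forward` are hypothetical, not taken from the original page.

```python
import math


def relu(v):
    """Element-wise ReLU activation."""
    return [max(0.0, a) for a in v]


def sigmoid(v):
    """Element-wise logistic sigmoid activation."""
    return [1.0 / (1.0 + math.exp(-a)) for a in v]


def affine_transposed(W, h, b):
    """Compute W^T h + b, where W is a list of rows with shape (n_in, n_out)."""
    n_in, n_out = len(W), len(W[0])
    assert len(h) == n_in and len(b) == n_out, "shape mismatch"
    return [sum(W[i][j] * h[i] for i in range(n_in)) + b[j] for j in range(n_out)]


def forward(x, layers):
    """Apply each layer in order; the output of one layer feeds the next."""
    h = x
    for W, b, g in layers:
        h = g(affine_transposed(W, h, b))
    return h


# Hypothetical toy network: 2 inputs -> 3 hidden units (ReLU) -> 1 output (sigmoid).
W1 = [[1.0, -1.0, 0.5],
      [0.0, 2.0, -0.5]]
b1 = [0.0, 0.0, 0.0]
W2 = [[1.0], [1.0], [1.0]]
b2 = [0.0]

layers = [(W1, b1, relu), (W2, b2, sigmoid)]
hidden = forward([1.0, 1.0], layers[:1])  # first layer only
y_hat = forward([1.0, 1.0], layers)       # full chain
```

If the weights were instead stored with shape (outputs, inputs), the same loop would use the untransposed product, which is the shape dependence the text refers to.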

Tags

Data Science

Related

Forward Propagation Formulation

True/False: During forward propagation, the forward function for layer $l$ needs to know which activation function the layer uses (sigmoid, tanh, ReLU, etc.). During backpropagation, the corresponding backward function also needs to know the activation function for layer $l$, since the gradient depends on it.

Which of these is a correct vectorized implementation of forward propagation for layer $l$, where $1 \le l \le L$?

- $Z^{(l)} = W^{(l)} A^{(l-1)} + b^{(l)}$

Number of Layers in a Feed Forward Neural Network

Other hidden units